Keywords

Airborne, Imagery, Point-cloud, Feature matching, Uncertainty estimation, Deep neural networks

Introduction

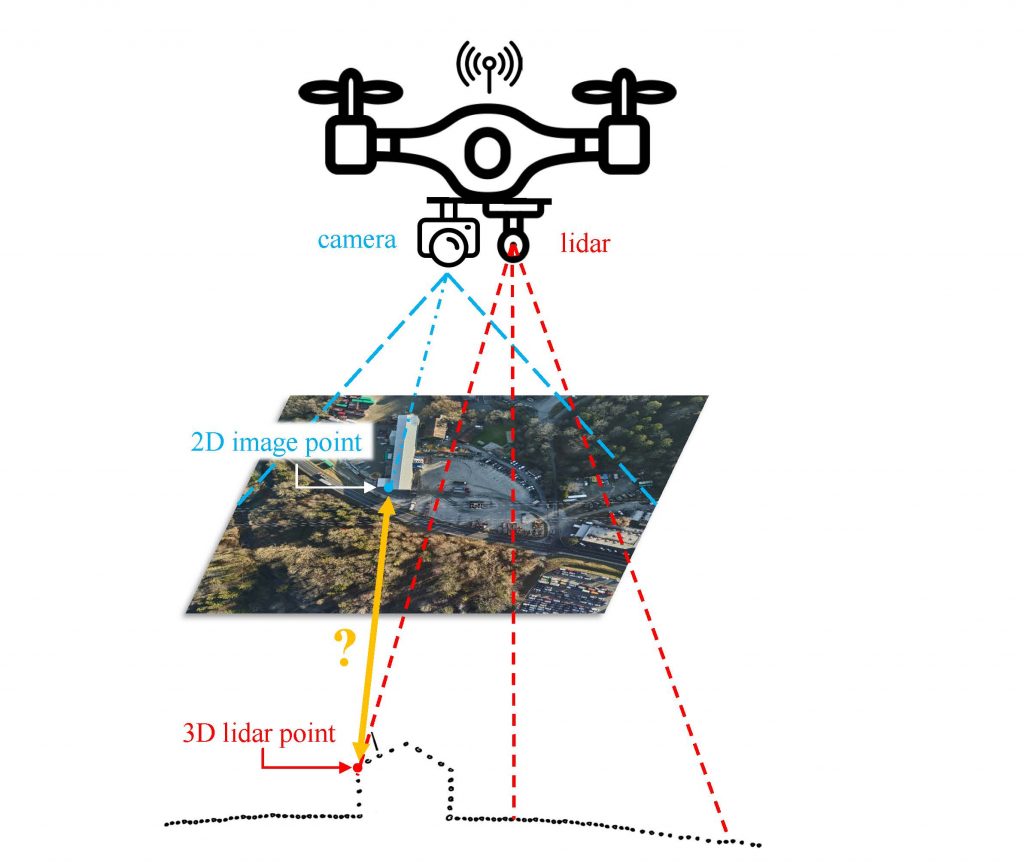

The optimal integration of simultaneously acquired 2D (imagery) and 3D (lidar point-cloud) datasets is an open issue of considerable interest to the scientific community [1]. It has multiple applications in infrastructure monitoring, archaeology, agriculture and forestry, since it provides models for 3D reconstruction, mapping, augmented reality, etc. Registering camera and lidar measurements is straightforward when geometric constraints are available. However, in the absence of system calibration, the fusion of the two optical datasets becomes uncertain and, in certain cases, fails to meet user expectations.

Recent work in deep learning has managed to establish a spatial relationship between the 2D and the 3D domain based on feature matching. A feature describes a 2D pixel or a 3D point: it is a vector whose values and their order encode information about the local neighborhood of the pixel/point. This local information is extracted from a 2D image patch or a 3D point-cloud volume centered on the pixel/point of interest. The whole feature extraction pipeline consists of the following main steps: detection, description, matching and outlier rejection of local features. Feature matching, or homologous feature extraction, is achieved by computing the distances between the features of candidate pixels/points.
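To make the matching step concrete, the following minimal sketch pairs 2D and 3D descriptors by their Euclidean distance in feature space. It is an illustration only and not the LCD or "in-house" implementation: the function name `match_features`, the mutual nearest-neighbour check, the `max_distance` threshold and the toy descriptor values are all assumptions introduced here.

```python
import math


def match_features(desc_2d, desc_3d, max_distance=None):
    """Match 2D and 3D descriptors by mutual nearest neighbour.

    desc_2d: list of N descriptors (sequences of floats), one per pixel/patch.
    desc_3d: list of M descriptors of the same length, one per point/volume.
    Returns a list of (index_2d, index_3d, distance) tuples.
    """
    # dist[i][j] is the Euclidean distance between 2D feature i and 3D feature j.
    dist = [[math.dist(a, b) for b in desc_3d] for a in desc_2d]

    # Best 3D candidate for each 2D feature.
    nearest_3d = [min(range(len(desc_3d)), key=lambda j, i=i: dist[i][j])
                  for i in range(len(desc_2d))]
    # Best 2D candidate for each 3D feature.
    nearest_2d = [min(range(len(desc_2d)), key=lambda i, j=j: dist[i][j])
                  for j in range(len(desc_3d))]

    matches = []
    for i, j in enumerate(nearest_3d):
        # Keep the pair only if each feature is the other's nearest neighbour.
        if nearest_2d[j] != i:
            continue
        # Optional, very simple outlier rejection on the descriptor distance
        # (assumed threshold, to be tuned on real data).
        if max_distance is not None and dist[i][j] > max_distance:
            continue
        matches.append((i, j, dist[i][j]))
    return matches


# Toy descriptors (hypothetical values, feature dimension 2).
desc_2d = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
desc_3d = [[1.1, 0.9], [0.1, 0.0]]
matches = match_features(desc_2d, desc_3d, max_distance=0.5)
for i, j, d in matches:
    print(f"2D feature {i} <-> 3D feature {j} (distance {d:.3f})")
```

In this toy case the third 2D descriptor is left unmatched because it is not the nearest neighbour of any 3D descriptor. In a learned pipeline the descriptors would come from the network rather than being typed by hand, and the outlier rejection would typically be more elaborate than a fixed threshold.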

The existing “in-house” algorithm builds on the LCD [2] architecture to extract corresponding 2D-3D features. New weights have been obtained by re-training on airborne datasets [3], and the dependence on a colorized point-cloud has been evaluated in preliminary studies. The developed feature matching and filtering pipeline can become more informative when followed by an uncertainty estimation, a texture dependence analysis or a viewpoint invariance investigation.

Objectives

- Introduction to the problem.

- Estimation of matching uncertainty.

- Texture investigation, i.e. examining which types of terrain (built-up areas, high vegetation, other) favor successful matching of 2D and 3D features.

- Patch size investigation, i.e. examining how the size of the 2D and 3D patches affects the matching performance.

- Viewpoint invariance investigation.

Task description

- Become familiar with the relevant literature and the existing “in-house” algorithms.

- Quantify the matching uncertainty using e.g. Bayesian statistics [4].

- Investigate how the texture of the 2D-3D correspondences affects the matching uncertainty.

- Investigate how the patch size affects the matching uncertainty.

- Use ensemble learning to enforce viewpoint invariance.

Preamble: The exact definition of the work will depend on the nature of the project (semester/PDM/other).

Prerequisites

Python programming (PyTorch is a plus), image processing, familiarity with point-cloud processing, familiarity with neural network architectures

Contact

Interested candidates are kindly asked to send their CV and a short motivation statement by email.

Kyriaki Mouzakidou, Laurent Valentin Jospin, Jan Skaloud

References

[1] Mouzakidou, K., et al., 2023. Airborne sensor fusion: expected accuracy and behavior of a concurrent adjustment. ISPRS Open Journal of Photogrammetry and Remote Sensing. Under review.

[2] Pham, Q.-H., et al., 2020. LCD: Learned cross-domain descriptors for 2D-3D matching. AAAI Conference on Artificial Intelligence.

[3] Vallet, J., et al., 2020. Airborne and mobile LiDAR, which sensors for which application? ISPRS – International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences, XLIII-B1-2020, 397–405.

[4] Jospin, L. V., et al., 2022. Hands-On Bayesian Neural Networks—A Tutorial for Deep Learning Users. IEEE Computational Intelligence Magazine, 17(2), 29–48. doi: 10.1109/MCI.2022.3155327.