Pose determination (determining the position and orientation of a camera) with respect to well-known landmarks or beacon points is a problem that dates back to the late 19th century. The state-of-the-art method consists of recognising the same objects (at least three) both in the image and on the terrain, where their coordinates are known. However, this task is not automated, and it is difficult even for a human operator.
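To make the classical landmark-based formulation concrete, here is a minimal sketch assuming an ideal pinhole camera without lens distortion. The function names (`project`, `reprojection_rmse`) and all numeric values are illustrative assumptions, not part of an existing TOPO pipeline. A candidate pose is scored by how well the known terrain landmarks reproject onto their observed image positions; the correct pose drives this residual towards zero.

```python
import math

def project(point_world, R_wc, cam_pos, focal_px, cx, cy):
    """Project a 3D world point to pixel coordinates with a pinhole model.

    R_wc rotates world axes into camera axes, cam_pos is the camera centre
    in world coordinates, and the camera looks along its +z axis.
    All parameter names are hypothetical.
    """
    d = [point_world[i] - cam_pos[i] for i in range(3)]
    x, y, z = (sum(R_wc[r][i] * d[i] for i in range(3)) for r in range(3))
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (focal_px * x / z + cx, focal_px * y / z + cy)

def reprojection_rmse(landmarks, pixels, R_wc, cam_pos, focal_px, cx, cy):
    """Root-mean-square pixel distance between observed and projected landmarks."""
    sq = 0.0
    for pt, (u_obs, v_obs) in zip(landmarks, pixels):
        u, v = project(pt, R_wc, cam_pos, focal_px, cx, cy)
        sq += (u - u_obs) ** 2 + (v - v_obs) ** 2
    return math.sqrt(sq / len(landmarks))

# Illustrative data: three landmarks with known coordinates (metres).
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
landmarks = [(0.0, 0.0, 100.0), (50.0, 0.0, 120.0), (0.0, 40.0, 90.0)]
true_pos = (0.0, 0.0, 0.0)
pixels = [project(p, IDENTITY, true_pos, 1000.0, 320.0, 240.0) for p in landmarks]

# The true pose gives zero residual; a pose shifted by 5 m does not.
rmse_true = reprojection_rmse(landmarks, pixels, IDENTITY, true_pos, 1000.0, 320.0, 240.0)
rmse_off = reprojection_rmse(landmarks, pixels, IDENTITY, (5.0, 0.0, 0.0), 1000.0, 320.0, 240.0)
```

In practice the pose would be found by minimising this residual over position and orientation; the hard part described above is establishing the landmark-to-pixel correspondences in the first place.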

The objective of this research line is to develop algorithms that can determine the camera pose parameters (location and attitude) using only an RGB camera stream (a single image or multiple consecutive shots) as input. The methodology explores approaches in which deep learning algorithms are initially trained on synthetic data (generated using high-accuracy 3D geospatial models and engines such as Cesium [1]). Once the networks are trained on the synthetic data, transfer learning (Sim2Real) approaches are applied so that they can estimate the camera pose from real RGB images, which may differ from the synthetic data sets (seasons, time of day).
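As an illustration of what "pose parameters" means in practice, the following sketch shows one common way to measure how far an estimated pose is from the ground truth: a Euclidean position error plus an angular error between unit quaternions. The quaternion convention (w, x, y, z), the function names, and the weighting factor `beta` are assumptions made for this example; the project itself does not prescribe a particular loss or metric.

```python
import math

def position_error_m(p_est, p_true):
    """Euclidean distance between estimated and true camera positions (metres)."""
    return math.dist(p_est, p_true)

def orientation_error_deg(q_est, q_true):
    """Smallest rotation angle between two attitudes given as quaternions (w, x, y, z)."""
    norm_est = math.sqrt(sum(c * c for c in q_est))
    norm_true = math.sqrt(sum(c * c for c in q_true))
    # abs() handles the fact that q and -q describe the same rotation.
    dot = abs(sum(a * b for a, b in zip(q_est, q_true))) / (norm_est * norm_true)
    dot = min(1.0, dot)  # guard against rounding slightly above 1
    return math.degrees(2.0 * math.acos(dot))

def pose_error(p_est, q_est, p_true, q_true, beta=1.0):
    """Hypothetical combined score: metres plus beta times degrees."""
    return position_error_m(p_est, p_true) + beta * orientation_error_deg(q_est, q_true)

# Example: 5 m position offset and a 90 degree rotation about the z axis.
q_identity = (1.0, 0.0, 0.0, 0.0)
q_yaw90 = (math.cos(math.pi / 4), 0.0, 0.0, math.sin(math.pi / 4))
pos_err = position_error_m((3.0, 4.0, 0.0), (0.0, 0.0, 0.0))
ang_err = orientation_error_deg(q_yaw90, q_identity)
```

A metric of this kind can be evaluated identically on synthetic and real images, which makes it a convenient way to quantify the Sim2Real gap.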

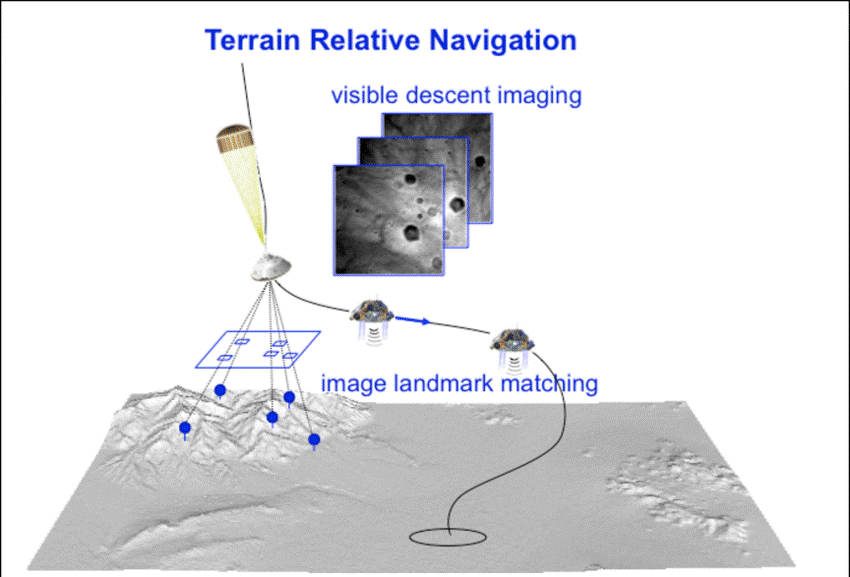

Such an algorithm could enable several applications, such as adjusting the trajectory of space probes landing on Mars [3] (Figure 1),

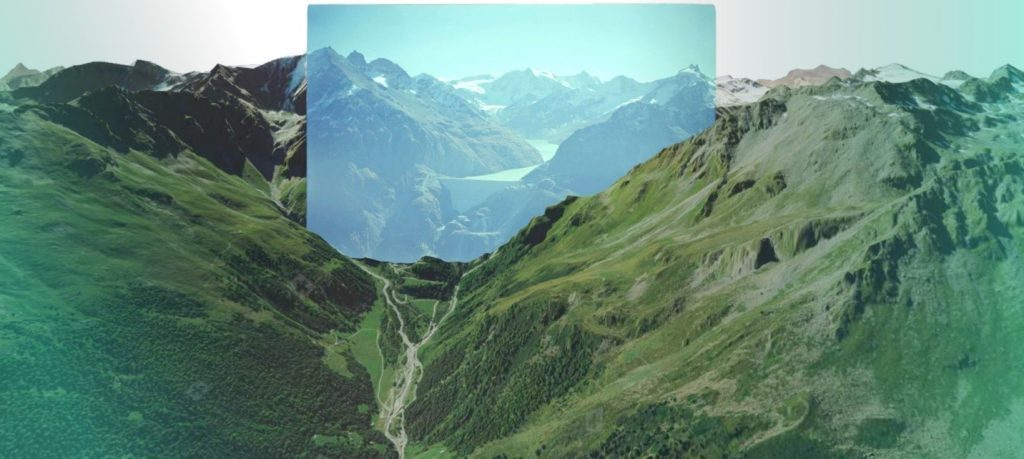

autonomous (GNSS-free) navigation of aircraft and drones [2] [4], and georeferencing historical photographs in order to detect environmental changes [5] (Figure 2).

Student research work at TOPO has achieved a single-shot navigation accuracy of close to 10 meters on synthetically generated images of the Swiss Alps, matching or beating the accuracy of GNSS navigation receivers used in drones.

Current research goals and interests lie in studying various transfer learning schemes and techniques (Sim2Real) that can help achieve the synthetic-data accuracy on any real RGB camera image stream.

Remaining research questions to be investigated are how to improve the methodology by leveraging additional temporal information (video stream) and alternative sensor data (IMU, barometer, magnetometer, last known position). An exciting possibility is the fusion of this visual absolute navigation technology with the dynamics-based navigation technology developed by TOPO, which would allow a completely GPS/GNSS-free outdoor navigation solution for drones and autonomous aircraft.
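The fusion idea can be illustrated with a deliberately simplified sketch: a scalar Kalman-style update that combines a predicted position (for example from an IMU or a dynamics model) with an absolute visual position fix, each weighted by its variance. This is an assumption-laden toy for one axis, with hypothetical names and numbers; it is not the TOPO navigation filter.

```python
def fuse(pred, pred_var, meas, meas_var):
    """Fuse a predicted value with a measurement using a scalar Kalman update.

    pred, pred_var: predicted position along one axis and its variance (m, m^2)
    meas, meas_var: visual position fix along the same axis and its variance
    Returns the fused estimate and its (reduced) variance.
    """
    gain = pred_var / (pred_var + meas_var)  # trust the measurement more when pred_var is large
    est = pred + gain * (meas - pred)
    var = (1.0 - gain) * pred_var
    return est, var

# Example: dead-reckoning predicts 100 m (std 5 m), the visual fix says 110 m (std 10 m).
est, var = fuse(100.0, 25.0, 110.0, 100.0)
```

The fused variance is always smaller than either input variance, which is the basic reason why combining a drifting relative sensor with an occasional absolute visual fix is attractive.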

Resources to be provided to the student:

- High accuracy 3D digital terrain and texture models of Switzerland

- Existing pipelines for synthetic data generation and ray tracing

- High-quality DTM and aligned aerial historical photos that can be used for independent algorithm testing and validation [5] https://smapshot.heig-vd.ch/map/?imageId=4336

- Drone flight videos with high-precision ground truth of position and orientation

Outcome:

A chance to collaborate with other Master and semester students working on exciting machine learning and drone navigation projects, concentrating on one of:

- Synthetic data generation schemes and techniques

- Novel algorithms development for navigation

- Transfer learning techniques from simulation to reality (Sim2Real)

The recommended type of project:

Semester, group or Master projects

Work breakdown:

30% theory, 60% development, 10% experiments (negotiable depending on particular student project).

Prerequisites:

Prior exposure to, and interest in, deep learning and the Python language. A desire to learn quickly and advance personal skills in the area. An independent and adventurous mindset, with curiosity about navigation, drones and mapping.

Contact:

References

[1] “Cesium – Changing how the world views 3D.” [Online]. Available: https://cesium.com/. [Accessed: 09-Sep-2020].

[2] “Daedalean / Home.” [Online]. Available: https://daedalean.ai/. [Accessed: 09-Sep-2020].

[3] J. Mustard et al., “Report of the Mars 2020 science definition team,” 2013.

[4] A. Suleiman, Z. Zhang, L. Carlone, S. Karaman, and V. Sze, “Navion: A 2mW Fully Integrated Real-Time Visual-Inertial Odometry Accelerator for Autonomous Navigation of Nano Drones.”

[5] T. Produit, N. Blanc, S. Composto, J. Ingensand, et al., “Crowdsourcing the georeferencing of historical pictures,” Proceedings of the Free and Open Source Software for Geospatial (FOSS4G) Conference. [Online]. Available: https://smapshot.heig-vd.ch/map/?imageId=4336

[6] T. Campbell, R. Furfaro, R. Linares, and D. Gaylor, “A deep learning approach for optical autonomous planetary relative terrain navigation.”