Project Description

Our contribution

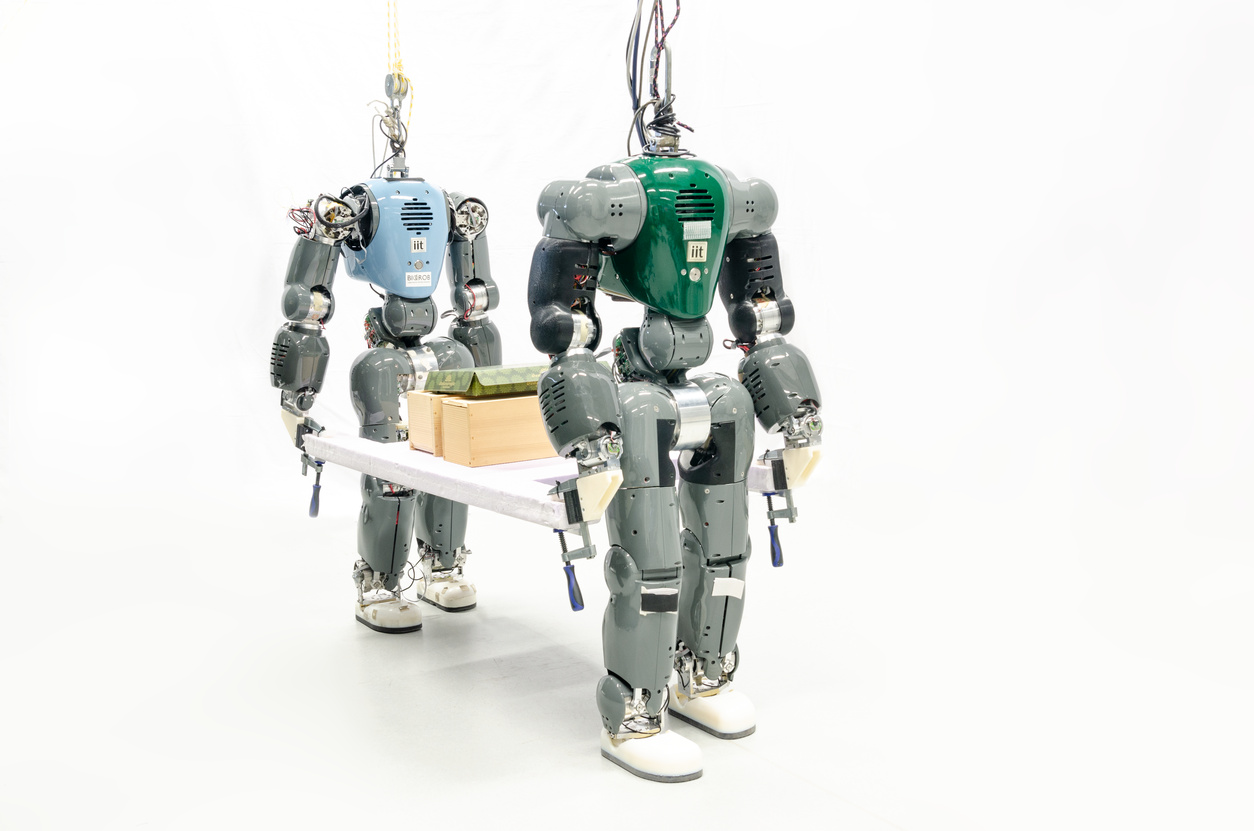

In the context of the CogIMon project, the Biorobotics Laboratory is in charge of (1) analyzing and modeling human-human gait adaptation when two people are mechanically coupled (e.g. while carrying an object together), (2) exploiting the observed human strategies to implement a whole-body control algorithm for a humanoid robot interacting with a human, and (3) extending the control algorithm to a pair of humanoid robots collaboratively carrying an object. The COMAN robot used in this project is a 31-DOF humanoid robot that is 95 cm tall and weighs 31 kg.

1) Human-Human interactive locomotion (link): In spite of extensive studies on human walking, less research has been conducted on how humans adapt their walking gait while interacting with another human. In this paper, we study a particular case of interactive locomotion where two humans carry a rigid object together. Experimental data from two persons walking together, one in front of the other, while carrying a stretcher-like object are presented, and the adaptation of their walking gaits and the coordination of their footfall patterns are analyzed. In more than 70% of the experiments the subjects synchronize their walking gaits, and these gaits can be associated with quadrupedal gaits. Moreover, in order to understand the extent to which passive dynamics can explain this synchronization behaviour, a simple 2D model made of two coupled spring-loaded inverted pendulums is developed. A comparison between the experiments and simulations of this model shows that the model reproduces some aspects of human walking behaviour when paired with another human.
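As an illustration of how footfall coordination between two walkers could be quantified, the sketch below computes the relative phase of one subject's heel strikes within the other subject's stride cycle, and then a simple phase-locking index. This is not necessarily the analysis used in the paper: the function names, the choice of heel-strike timestamps as input, and the mean-resultant-length index are all assumptions made for the example.

```python
import bisect
import cmath
import math


def relative_phases(strikes_a, strikes_b):
    """Phase (0..1) of each heel strike of B within the enclosing stride of A.

    strikes_a: sorted heel-strike times of one reference foot of subject A.
    strikes_b: heel-strike times of one reference foot of subject B.
    """
    phases = []
    for t in strikes_b:
        k = bisect.bisect_right(strikes_a, t) - 1
        if k < 0 or k + 1 >= len(strikes_a):
            continue  # strike falls outside any complete stride of A
        start, end = strikes_a[k], strikes_a[k + 1]
        phases.append((t - start) / (end - start))
    return phases


def sync_index(phases):
    """Mean resultant length of the phases.

    Close to 1 when the phase lag is constant (synchronized gaits),
    close to 0 when the phases are spread over the whole cycle.
    """
    if not phases:
        return 0.0
    mean_vector = sum(cmath.exp(2j * math.pi * p) for p in phases) / len(phases)
    return abs(mean_vector)
```

With such an index, a constant relative phase near 0 or 0.5 would correspond to the two walkers stepping in phase or in antiphase, which is one way to relate the paired gait to a quadrupedal footfall pattern.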

2) Human-Humanoid robot interaction while walking (link): In many physical human-robot interaction scenarios, robots must be able to recognize the human partner's intention in order to complete the task successfully. One such scenario, studied in this paper, is the collaborative task of carrying an object by a human-humanoid pair, in which the humanoid should be able to interpret specific intentions of the human partner (e.g., start/stop walking, accelerate, etc.) through haptic feedback only. To address this problem, we first performed human-human experiments and obtained a multiclass classifier (with more than 90% accuracy) for human intention detection, using arm position relative to the shoulder and interaction forces as features. The results of the multiclass classification were used, without any modification, to develop an interlimb coordinator that was integrated into a modular control architecture for the human-robot experiments. The interlimb coordinator receives the sensory data of the upper body and sends appropriate commands (including start/stop walking, accelerate and decelerate) to the lower-body controller, which is responsible for achieving a stable walking gait. This modular control approach was successfully tested in human-humanoid experiments with the COMAN robot.
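The role of the interlimb coordinator described above can be sketched as a small state machine that turns a classified intention into a command for the lower-body controller. The class name, the intention labels, and the speed values below are hypothetical and chosen only for illustration; the actual classifier and controller interfaces are not specified here.

```python
from enum import Enum


class Intention(Enum):
    # Hypothetical labels standing in for the classifier output.
    NONE = 0
    START = 1
    STOP = 2
    ACCELERATE = 3
    DECELERATE = 4


class InterlimbCoordinator:
    """Maps upper-body intention labels to lower-body walking commands."""

    def __init__(self, speed_step=0.05, max_speed=0.3):
        # Speed values in m/s are illustrative assumptions.
        self.speed_step = speed_step
        self.max_speed = max_speed
        self.walking = False
        self.speed = 0.0

    def update(self, intention):
        """Return the (walking, speed) command for the lower-body controller."""
        if intention is Intention.START and not self.walking:
            self.walking = True
            self.speed = self.speed_step
        elif intention is Intention.STOP:
            self.walking = False
            self.speed = 0.0
        elif self.walking and intention is Intention.ACCELERATE:
            self.speed = min(self.max_speed, self.speed + self.speed_step)
        elif self.walking and intention is Intention.DECELERATE:
            self.speed = max(self.speed_step, self.speed - self.speed_step)
        # Intention.NONE, or a speed change while standing, leaves the state as is.
        return self.walking, self.speed
```

Keeping this mapping separate from both the classifier and the gait controller mirrors the modular architecture: either side could be replaced as long as the set of commands stays the same.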

3) Humanoid-Humanoid robots carrying objects: A control framework has been developed for two child-sized humanoid robots carrying objects of non-negligible weight.

The controller is modular, which allows reuse and quick implementation. Moreover, the controller is decentralized: each humanoid robot is controlled independently of the other, and the two robots communicate only through interaction forces. This makes it easy to extend the work to more than two robots.
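The idea of decentralized control through interaction forces can be illustrated with a deliberately simplified 1D toy example. Here each robot runs its own admittance-style law that uses only the force it measures locally, and the carried object is modeled as a spring. The admittance law, the spring model, and all numerical values are assumptions made for this sketch and do not describe the framework's actual controller.

```python
class LocalAdmittanceController:
    """Per-robot controller that only sees its own measured interaction force."""

    def __init__(self, nominal_speed, admittance=0.01):
        self.nominal_speed = nominal_speed  # preferred walking speed, m/s
        self.admittance = admittance        # speed change per unit force, (m/s)/N

    def command(self, measured_force):
        # Speed up when pulled forward, slow down when pulled backward.
        return self.nominal_speed + self.admittance * measured_force


def simulate(rear_speed=0.2, front_speed=0.3, stiffness=100.0, dt=0.01, steps=1000):
    """Two robots coupled by a spring-like object; returns their final speeds."""
    rear = LocalAdmittanceController(rear_speed)
    front = LocalAdmittanceController(front_speed)
    stretch = 0.0  # object elongation relative to its rest length, m
    v_rear, v_front = rear_speed, front_speed
    for _ in range(steps):
        force = stiffness * stretch       # tension in the object, N
        v_rear = rear.command(+force)     # rear robot is pulled forward
        v_front = front.command(-force)   # front robot is pulled backward
        stretch += (v_front - v_rear) * dt
    return v_rear, v_front
```

In this toy setting the two robots settle on a common speed without exchanging any messages, and adding a third robot would only require another controller instance and another coupling term.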