Imagine a haptic device so thin and soft that, once it is stuck on your finger, you simply can’t feel it is there… until it is turned on. It then lets you feel rich haptic sensations, enabling a sense of touch in Virtual Reality (VR), Augmented Reality (AR) and Mixed Reality.

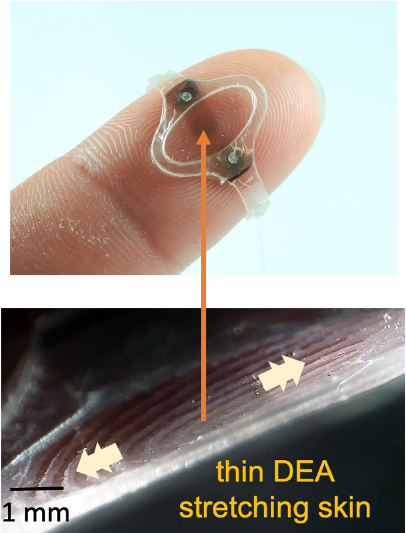

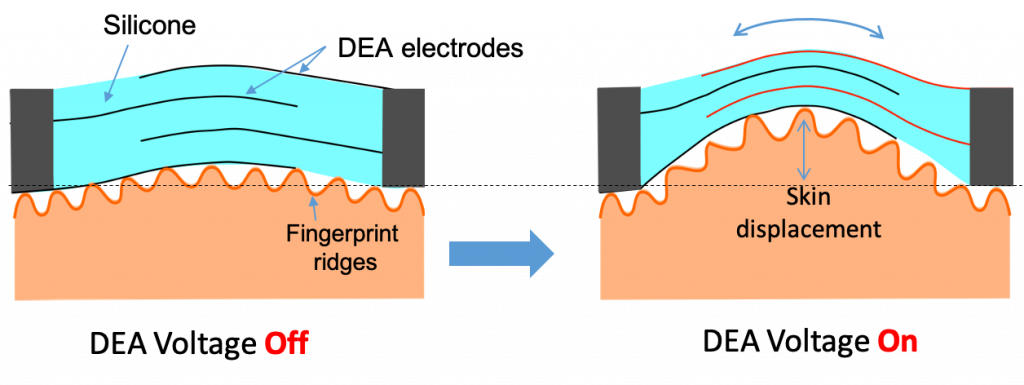

We have developed ultra-thin (only 18 µm thick) Dielectric Elastomer Actuators (DEAs) that enable “feel-through” haptics. The DEAs, a type of electrically driven artificial muscle, gently stretch the skin locally, giving the feeling of a gentle tap or of buzzing, depending on whether they are actuated at low frequency (e.g. a few Hz) or at hundreds of Hz. We previously used similar DEAs for autonomous insect robots.

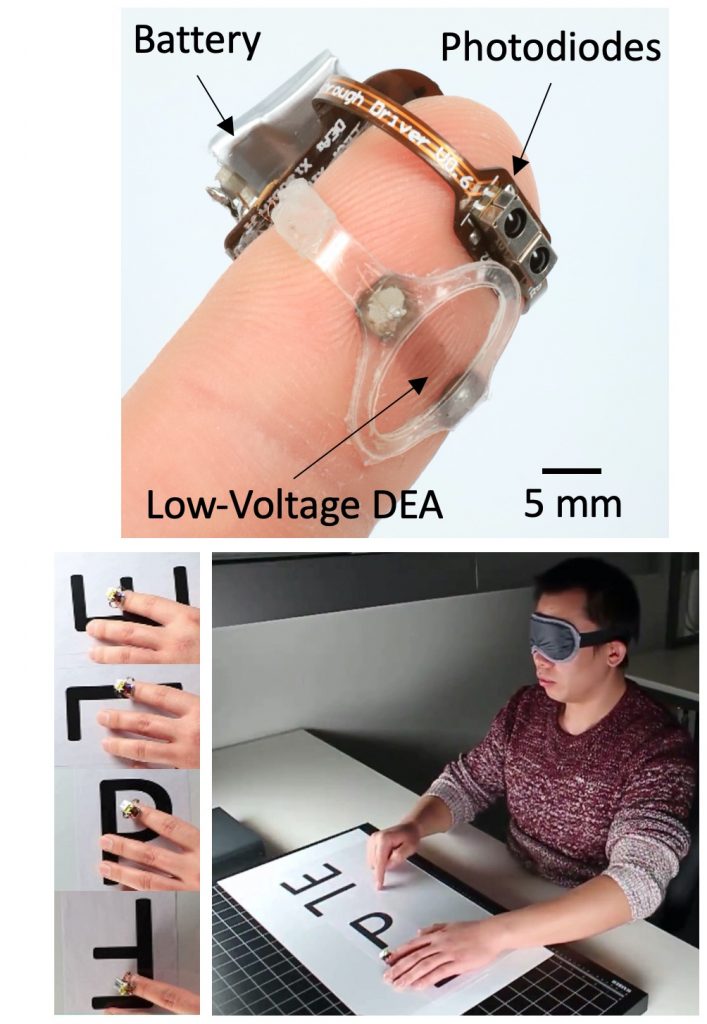

The device can be built into a glove for haptics, giving the illusion that virtual objects feel real. We made an untethered version weighing only 1.3 grams that allowed blindfolded users to correctly identify letters by “seeing” them through their finger, using a photodiode to image the area in front of the finger. In this example, the users scan their finger over a smooth plastic sheet under which printed letters have been randomly positioned and oriented. The feel-through DEA is programmed to vibrate when over black ink, and to be still when over white paper.
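The ink-to-vibration rule described above can be illustrated with a minimal sketch. This is not the firmware used in the device. The normalized photodiode readings (0 = dark, 1 = bright), the threshold value, the square-wave drive shape and all function names are assumptions made purely for illustration.

```python
from typing import Iterable, List

# Hypothetical threshold: normalized readings below this count as black ink.
INK_THRESHOLD = 0.5


def over_ink(reading: float, threshold: float = INK_THRESHOLD) -> bool:
    """Return True when a normalized photodiode reading indicates black ink.

    Assumes 0.0 means no reflected light (dark) and 1.0 means bright paper.
    """
    return reading < threshold


def actuation_pattern(readings: Iterable[float],
                      threshold: float = INK_THRESHOLD) -> List[bool]:
    """Map a scan of photodiode readings to vibrate (True) / still (False)."""
    return [over_ink(r, threshold) for r in readings]


def drive_samples(vibrate: bool, freq_hz: float,
                  sample_rate_hz: int, n_samples: int) -> List[float]:
    """Return a normalized drive signal for the actuator.

    When vibrating, this is a 50% duty-cycle square wave between 0 and 1
    (an assumed waveform); when still, the signal stays at 0.
    """
    if not vibrate:
        return [0.0] * n_samples
    samples = []
    for i in range(n_samples):
        phase = (i * freq_hz / sample_rate_hz) % 1.0  # position within one period
        samples.append(1.0 if phase < 0.5 else 0.0)
    return samples


if __name__ == "__main__":
    # Simulated scan: paper, ink, ink, paper.
    scan = [0.9, 0.2, 0.1, 0.8]
    for reading, vibrate in zip(scan, actuation_pattern(scan)):
        print(reading, "vibrate" if vibrate else "still")
```

Swapping `over_ink` for a rule based on a different sensor reading would leave the rest of the sketch unchanged.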

The same concept could let wearers use their fingertip to hear, or to sense distance or gas concentration, by replacing the photodiodes with different types of sensors (e.g. microphone, ultrasound distance sensor, gas sensor). Our Feel-Through DEA device could thus turn the sense of touch into a form of augmented perception for sound, 3D space and smell.

Acknowledgments. This work was a collaboration between EPFL-LMTS, EPFL-LAI, and UC-LPPI.

Full details are in the following publication: Xiaobin Ji, X. Liu, V. Cacucciolo, Y. Civet, A. E. Haitami, S. Cantin, Y. Perriard, H. Shea, “Untethered Feel-Through Haptics Using 18-µm Thick Dielectric Elastomer Actuators,” Advanced Functional Materials, 2006639 (2020). doi: 10.1002/adfm.202006639

This work received funding from the Swiss State Secretariat for Education, Research, and Innovation in relation to the Marie Skłodowska-Curie grant agreement No 641822-MICACT, and from the Hasler Foundation Cyber-Human Systems program.