Mobile robot crash testing with pedestrians (child and adult)

This dataset contains injury measures recorded during collisions between a mobile service robot (Qolo) and pedestrian dummies: a male adult Hybrid-III (H3) and a 3-year-old child model (Q3). We present multiple collision scenarios for the assessment of pedestrian safety, considering possible impacts at the legs for adult pedestrians, and at the legs, chest and head for children. The dataset is available at DOI:10.5281/zenodo.5266447, and the data processing repository is available on GitHub. The work was partially funded by the EU CrowdBot project.

3D point cloud and RGBD of pedestrians in robot crowd navigation

This dataset contains over 250k frames of robot navigation in real crowds in the city of Lausanne, Switzerland, recorded with the personal mobility robot Qolo in semi-autonomous navigation. It includes egocentric sets of frontal and rear 3D point clouds from Velodyne VLP-16 sensors and labelled RGBD videos. The dataset is available at DOI:10.21227/ak77-d722, and the data processing repository is available on GitHub. The work was funded by the EU CrowdBot project.

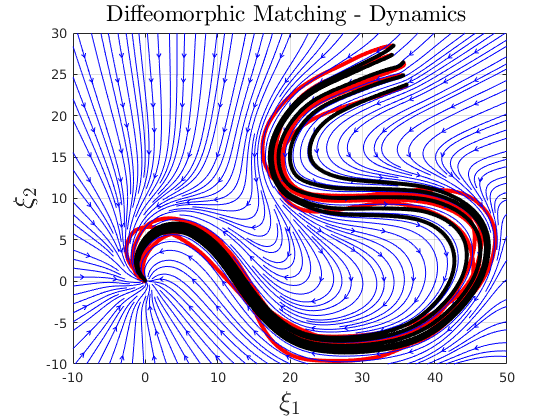

Handwriting human motion dataset

This dataset contains 2-dimensional trajectories (positions and velocities) of handwritten letters of the alphabet. These have been used extensively to compare methods for modeling complex trajectories with dynamical systems. The dataset is available here.

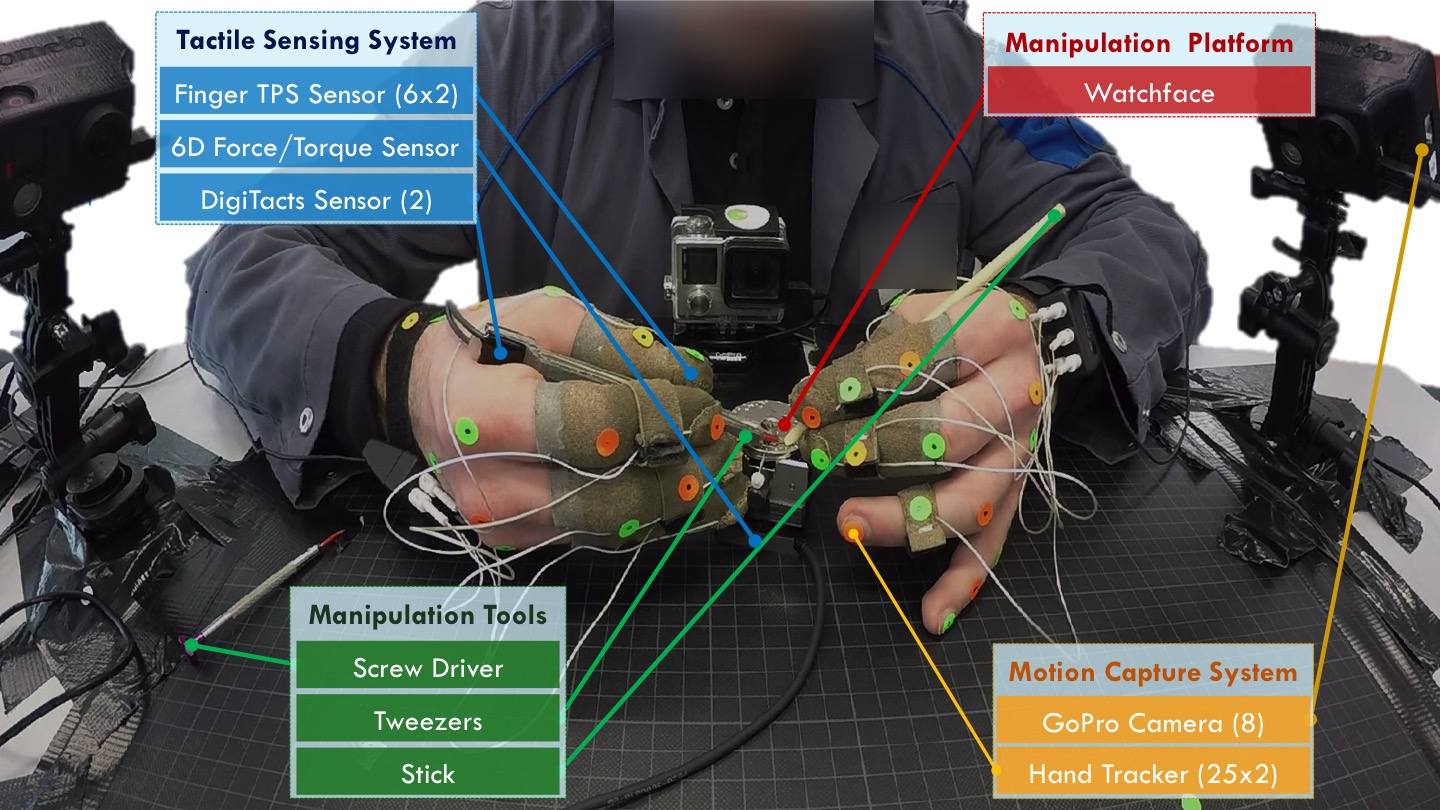

Haptic and motion dataset on human fine manipulation

The dataset contains a set of highly accurate force, haptic and motion measurements recorded from watchmakers performing the very fine insertion and screwing tasks required to build watches. The data consists of force/torque measurements on the watch base, pressure along the fingers, and the kinematics of finger and tool motion. The dataset is available on GitHub and Zenodo (sensor recording and motion segmentation). The work was funded by the ERC SAHR project.

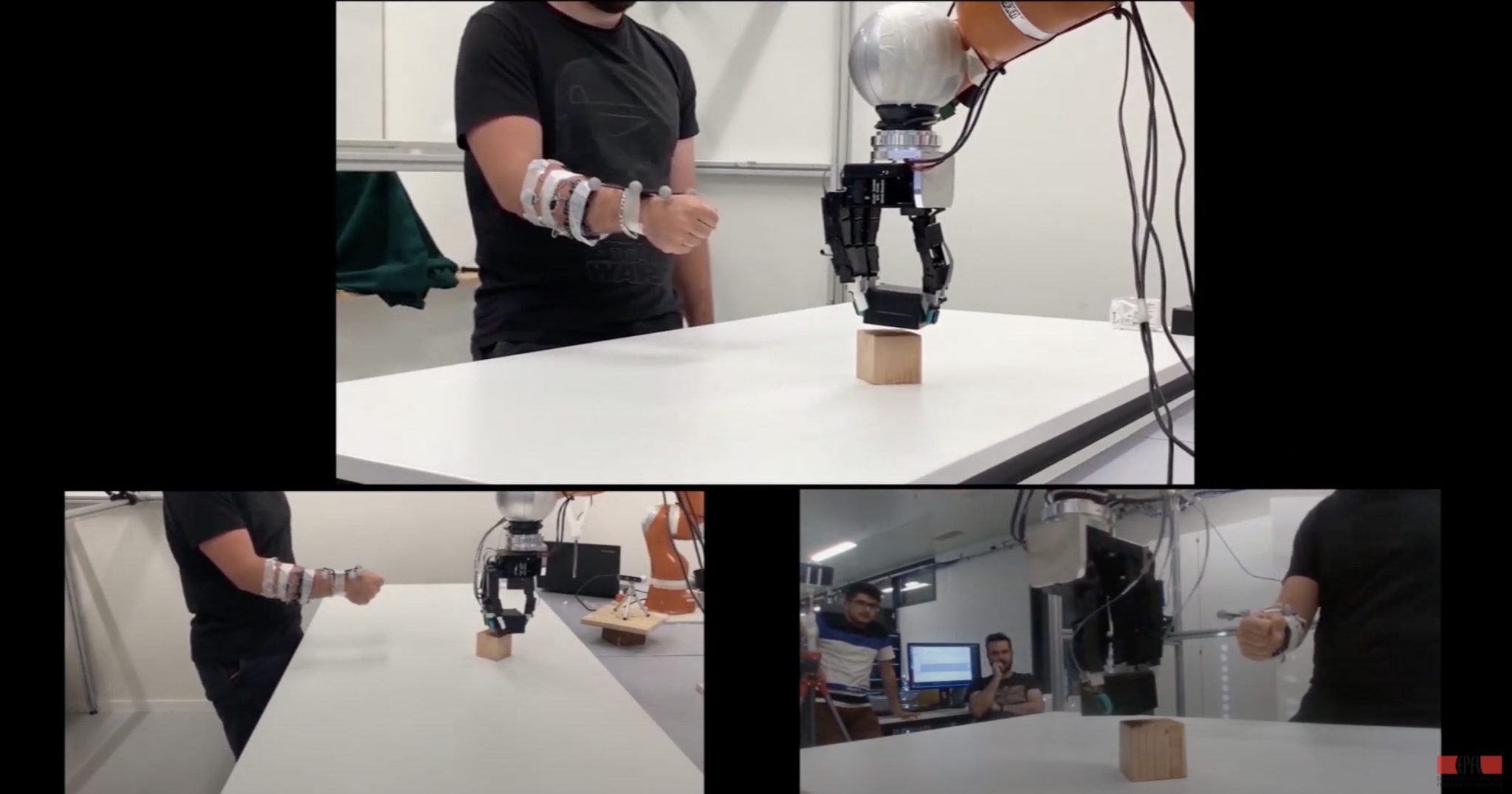

Audio and tactile dataset of robot object manipulation with different material contents

The dataset captures the auditory and tactile signals of a Kuka IIWA robot with an Allegro hand holding a plastic container filled with different materials. The robot manipulates the container with vertical shaking and rotation motions. The data consists of force/pressure measurements on the Allegro hand from a Tekscan tactile skin sensor, auditory signals from a microphone, and joint data of the IIWA robot and the Allegro hand. The dataset is available at DOI:10.5281/zenodo.6372437. The work was funded by the CORSMAL project and the ERC SAHR project.

Haptic and motion dataset of human-to-human handover of objects with unknown content

The dataset contains measurements of a large set of dyadic interactions in which two humans hand over to each other glasses of different sizes that are partially filled (e.g. a glass half-filled with water or rice). We record . The work was funded by the CORSMAL and Secondhands projects and was done in collaboration with the team of Prof. Tamim Asfour at KIT.