Society is rapidly opening its doors to robots in our daily life with autonomous vehicles, rehabilitation devices and autonomous appliances. These robots will face unexpected changes in their environment, to which they will have to react immediately and appropriately. Even though robots exceed largely humans’ precision and speed of computation, they are far from matching humans’ capacity to adapt rapidly to unexpected changes. The SAHR project aims at developing new control methods for robots that will enable them to learn gradually complex motor skills.

Approach

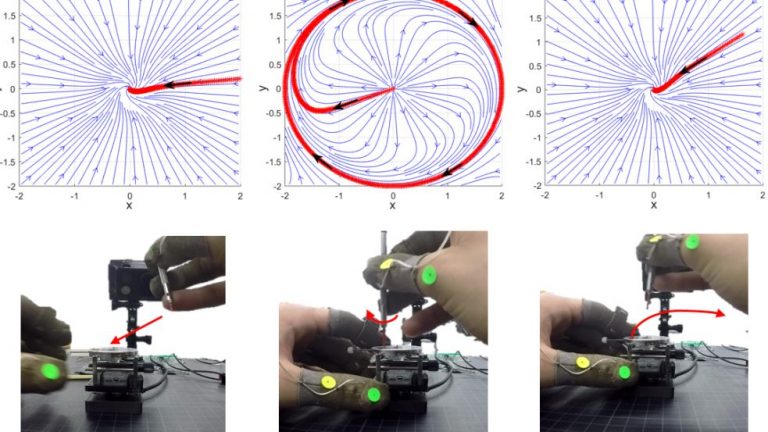

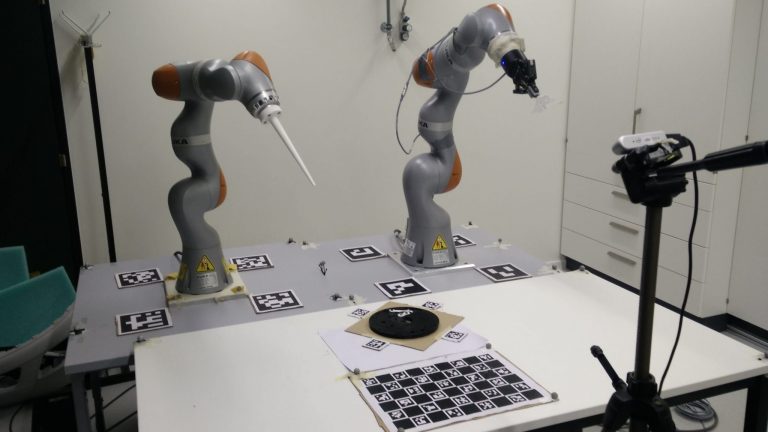

To ensure speeding response in the face of unexpected changes, we target controllers that can re-plan at run time and on-the-fly. We take a novel approach to robot learning that follows stages of skill acquisition in humans. To inform modelling, we conduct a longitudinal study of the acquisition of dexterous bimanual skills in watchmaking a unique craftsmanship. We study how humans exploit task uncertainty to overcome their sensory-motor noise, and how humans learn bimanual synergies to reduce the control variables. This study informs the design of novel learning strategies for robots that exploit failures as much as successes. We combine traditional optimal control approach with machine learning (ML) to learn feasible control laws, retrievable at run time with no need for further optimization. We exploit properties of dynamical systems (DS), which have received little attention in robot control, and use ML to identify characteristics of DS, in ways that were not explored to date. The approach is assessed in live demonstrations of coordinated adaptation of a multi-arm/hand robotic system engaged in a fast-paced industrial task, in the presence of humans. This approach has lead to the work listed bellow.