People detection and tracking in reactive robot navigation in crowds

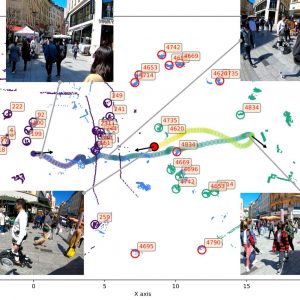

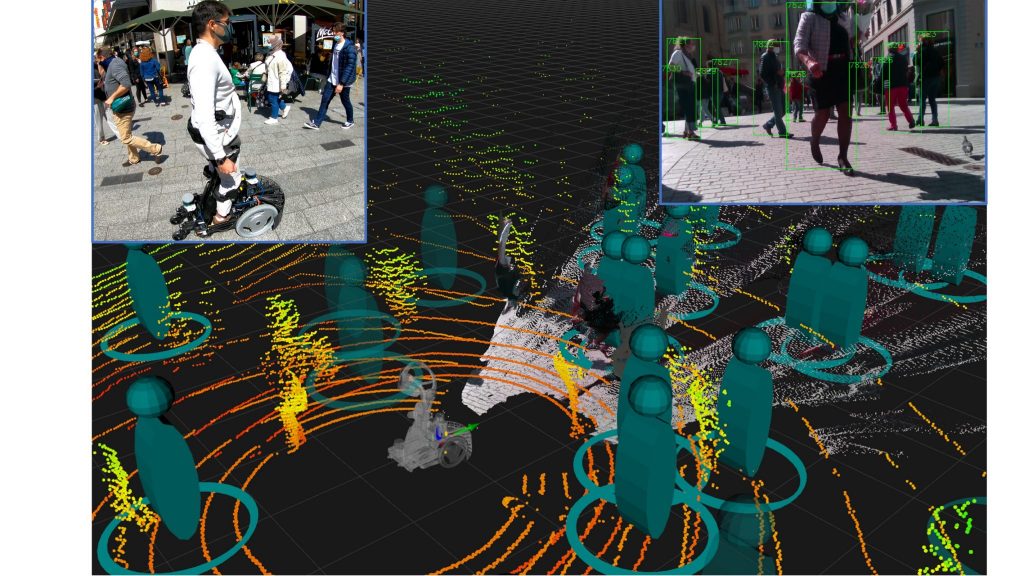

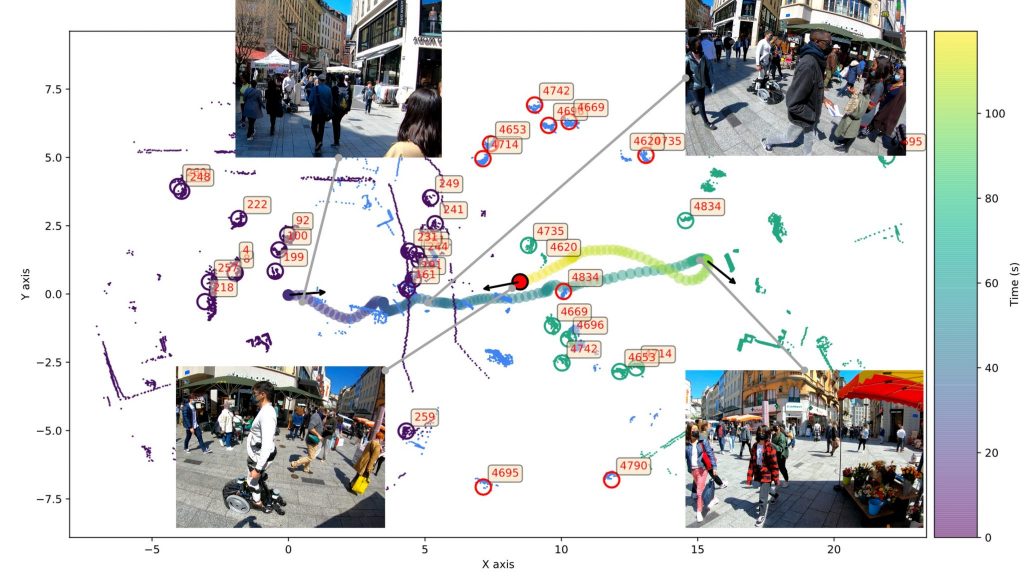

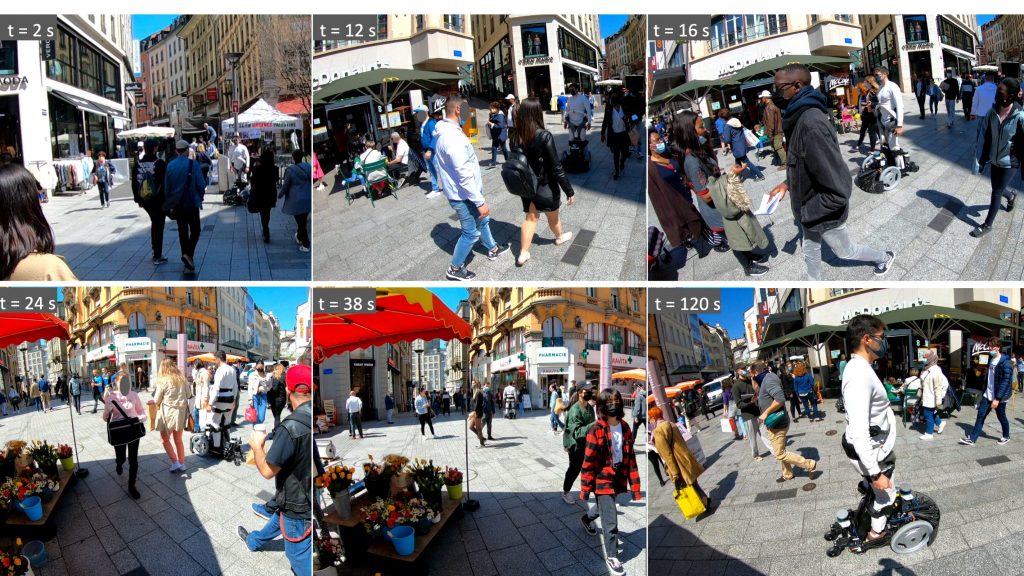

The current dataset, crowdbot, contains outdoor pedestrian-tracking data recorded from the onboard sensors of a personal mobility robot navigating in crowds. The robot Qolo [1,2] is a personal mobility vehicle for people with lower-body impairments. It was equipped with a reactive navigation controller operating in shared-control [3,6] or autonomous mode [4,5] while navigating three different streets in the city of Lausanne, Switzerland, during farmers' market days and the Christmas market. The full dataset is available at DOI: 10.21227/ak77-d722.

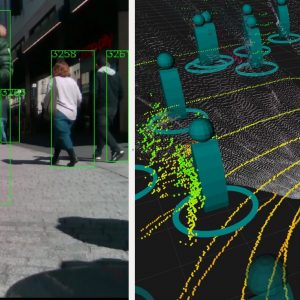

The dataset includes point clouds from frontal and rear 3D LiDARs (Velodyne VLP-16) at 20 Hz and from a front-facing RGBD camera (Intel RealSense D435). The data comprise over 250k frames recorded in crowds ranging from light densities of 0.1 ppsm (persons per square meter) to dense crowds of over 1.0 ppsm. We also provide the robot state (pose and velocity) and contact sensing from a force/torque sensor (Botasys Rokubi 2.0).
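To make the ppsm figures concrete, here is a minimal sketch of one way to estimate local crowd density: count the detected people inside a disc around the robot and divide by the disc area. The function name `crowd_density_ppsm` and the default radius are hypothetical, and the dataset's own density estimate may be computed differently.

```python
import math
from typing import Iterable, Tuple


def crowd_density_ppsm(
    robot_xy: Tuple[float, float],
    people_xy: Iterable[Tuple[float, float]],
    radius_m: float = 5.0,
) -> float:
    """Persons per square meter inside a disc of radius_m around the robot.

    Hypothetical estimator for illustration only: counts detected people whose
    planar distance to the robot is at most radius_m, then divides by pi * r^2.
    """
    if radius_m <= 0:
        raise ValueError("radius_m must be positive")
    rx, ry = robot_xy
    # Number of detections that fall inside the disc centered on the robot.
    count = sum(
        1 for (px, py) in people_xy if math.hypot(px - rx, py - ry) <= radius_m
    )
    return count / (math.pi * radius_m ** 2)
```

With a 5 m radius (a disc of about 78.5 m²), 0.1 ppsm corresponds to roughly 8 people around the robot and 1.0 ppsm to roughly 79.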

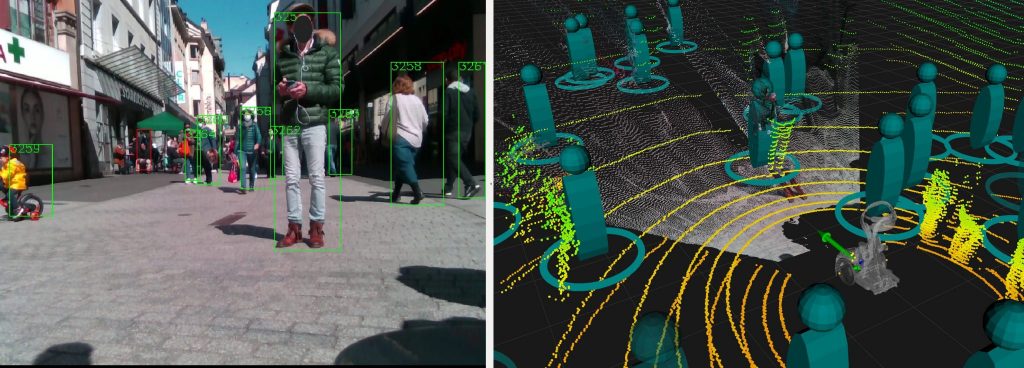

We provide metadata from onboard real-time people detection (DrSPAAM detector [7]) and tracking (AB3DMOT [8]), people-class-labelled 3D point clouds, the estimated crowd density, the proximity of pedestrians to the robot, and path-efficiency metrics for the robot controller (time to goal, path length, and virtual collisions).
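The controller metrics listed above can be illustrated with a minimal sketch. The definitions here are our assumptions and not necessarily those used in crowdbot-evaluation-tools: path length is the summed distance between consecutive robot positions, time to goal is the first time the robot comes within a hypothetical tolerance `goal_tol_m` of the goal, proximity is the smallest robot-pedestrian distance observed, and a virtual collision is counted for each frame in which any pedestrian is closer than a hypothetical threshold `collision_dist_m`.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]


@dataclass
class PathMetrics:
    path_length_m: float
    time_to_goal_s: Optional[float]  # None if the goal was never reached
    min_proximity_m: float           # closest robot-pedestrian distance (inf if nobody seen)
    virtual_collisions: int          # frames with a pedestrian inside collision_dist_m


def path_metrics(
    t: Sequence[float],
    robot_xy: Sequence[Point],
    people_xy: Sequence[Sequence[Point]],
    goal_xy: Point,
    goal_tol_m: float = 0.5,        # hypothetical goal tolerance
    collision_dist_m: float = 0.5,  # hypothetical virtual-collision threshold
) -> PathMetrics:
    if len(t) == 0 or not (len(t) == len(robot_xy) == len(people_xy)):
        raise ValueError("t, robot_xy and people_xy must be non-empty and aligned")

    # Path length: sum of straight segments between consecutive robot positions.
    length = sum(math.dist(a, b) for a, b in zip(robot_xy, robot_xy[1:]))

    # Time to goal: first timestamp within goal_tol_m of the goal, relative to start.
    ttg = next(
        (ti - t[0] for ti, p in zip(t, robot_xy) if math.dist(p, goal_xy) <= goal_tol_m),
        None,
    )

    min_prox = math.inf
    collisions = 0
    for r, people in zip(robot_xy, people_xy):
        dists = [math.dist(r, p) for p in people]
        if not dists:
            continue  # no pedestrians detected in this frame
        closest = min(dists)
        min_prox = min(min_prox, closest)
        # A frame counts once, however many pedestrians are inside the threshold.
        if closest < collision_dist_m:
            collisions += 1
    return PathMetrics(length, ttg, min_prox, collisions)
```

Counting virtual collisions per frame, rather than per pedestrian, is one design choice among several; a per-pedestrian or per-event count would give different numbers for the same recording.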

Each recording contains approximately 120 s of data: Qolo's sensor streams in rosbag format, as well as data in NumPy (.npy) format for easy reading and access. All code for metadata processing and for extracting the raw files is open access: epfl-lasa/crowdbot-evaluation-tools
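A minimal sketch for reading the .npy files with NumPy. The internal layout of the files is not described here, so this assumes each file holds either a plain array or a Python dict saved with `np.save` (which NumPy stores as a 0-d object array); the helper names `unwrap_npy` and `load_npy_record` are hypothetical.

```python
from pathlib import Path
from typing import Any, Union

import numpy as np


def unwrap_npy(data: np.ndarray) -> Any:
    """Return the Python object (e.g. a dict) stored in a 0-d object array;
    return any other array unchanged."""
    if data.dtype == object and data.shape == ():
        return data.item()
    return data


def load_npy_record(path: Union[str, Path]) -> Any:
    """Load one .npy file from a recording (assumed layout, see above).

    allow_pickle=True is required when the file stores pickled Python objects;
    only enable it for files from a trusted source.
    """
    return unwrap_npy(np.load(path, allow_pickle=True))
```

For example, `load_npy_record("path/to/recording.npy")` (a placeholder path) returns either an ndarray or the original dict, depending on what was saved.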

- 250k frames of data – over 200 minutes of recordings

- Qolo robot state: localization – pose – velocity – controller state

- 2x 3D point clouds (Velodyne VLP-16)

- 1x RGBD camera (Intel RealSense D435)

- 3x people detectors (DrSPAAM, YOLO)

- 1x tracker

- Contact forces (Botasys Rokubi 2.0)
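As a quick consistency check of the figures above, assuming one frame per 20 Hz LiDAR sweep (an assumption; frames may be counted per sensor differently), 250k frames correspond to just over 200 minutes of recording, and a 120 s recording holds about 2,400 frames:

$$
\frac{250{,}000\ \text{frames}}{20\ \text{Hz}} = 12{,}500\ \text{s} \approx 208\ \text{min},
\qquad
120\ \text{s} \times 20\ \text{Hz} = 2{,}400\ \text{frames per recording}.
$$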

Cite as: Paez-Granados D., Hen Y., Gonon D., Huber L., & Billard A., (2021), “Close-proximity pedestrians’ detection and tracking from 3D point cloud and RGBD data in crowd navigation of a mobile service robot.”, Dec. 15, 2021. IEEE Dataport, doi: https://dx.doi.org/10.21227/ak77-d722.

References:

Qolo Robot:

[1] Paez-Granados, D., Hassan, M., Chen, Y., Kadone, H., & Suzuki, K. (2022). Personal Mobility with Synchronous Trunk-Knee Passive Exoskeleton: Optimizing Human-Robot Energy Transfer. IEEE/ASME Transactions on Mechatronics, 1(1), 1–12. https://doi.org/10.1109/TMECH.2021.3135453

[2] Paez-Granados, D. F., Kadone, H., & Suzuki, K. (2018). Unpowered Lower-Body Exoskeleton with Torso Lifting Mechanism for Supporting Sit-to-Stand Transitions. IEEE International Conference on Intelligent Robots and Systems, 2755–2761. https://doi.org/10.1109/IROS.2018.8594199

Reactive Navigation Controllers:

[3] Gonon, D. J., Paez-Granados, D., & Billard, A. (2021). Reactive Navigation in Crowds for Non-holonomic Robots with Convex Bounding Shape. IEEE Robotics and Automation Letters, 6(3), 4728–4735. https://doi.org/10.1109/LRA.2021.3068660

[4] Huber, L., Billard, A., & Slotine, J.-J. (2019). Avoidance of Convex and Concave Obstacles With Convergence Ensured Through Contraction. IEEE Robotics and Automation Letters, 4(2), 1462–1469. https://doi.org/10.1109/lra.2019.2893676

[5] Paez-Granados, D., Gupta, V., & Billard, A. (2021). Unfreezing Social Navigation: Dynamical Systems based Compliance for Contact Control in Robot Navigation. Robotics: Science and Systems (RSS) – Workshop on Social Robot Navigation, 1(1), 1–4. http://infoscience.epfl.ch/record/287442?&ln=en; video: https://youtu.be/y7D-YeJ0mmg

Qolo shared control:

[6] Chen, Y., Paez-Granados, D., Kadone, H., & Suzuki, K. (2020). Control Interface for Hands-free Navigation of Standing Mobility Vehicles based on Upper-Body Natural Movements. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS-2020). https://doi.org/10.1109/IROS45743.2020.9340875

People Tracking:

[7] Jia, D., Steinweg, M., Hermans, A., & Leibe, B. (2021). Self-Supervised Person Detection in 2D Range Data using a Calibrated Camera. IEEE International Conference on Robotics and Automation (ICRA-2021).

[8] Weng, X., Wang, J., Held, D., & Kitani, K. (2020). AB3DMOT: 3D Multi-Object Tracking: A Baseline and New Evaluation Metrics.

Acknowledgment

We thank Prof. Kenji Suzuki from AI-Lab, University of Tsukuba, Japan for lending the robot Qolo used in these experiments and data collection.

This project was partially funded by the EU Horizon 2020 Project CROWDBOT (Grant No. 779942): http://crowdbot.eu