Michele Vidulis

About : Omnisens

Description

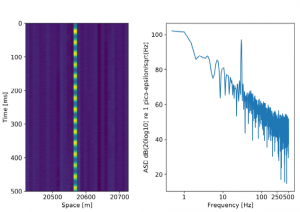

Omnisens is a company based in Morges and active worldwide in the field of fiber optic monitoring. The research and development team has recently added a novel instrument to its family of distributed fiber sensing interrogators: Distributed Acoustic Sensing (DAS) technology allows recording strain and temperature changes over large distances, guaranteeing continuous and reliable monitoring of energy industry assets. My work at Omnisens first involved understanding the physics driving the instrument and proposing improvements to the existing data processing pipeline. At a later stage, I contributed to developing high-level algorithms acting on aggregated data, with the aim of coding effective solutions for known asset monitoring test cases.

In the figure: a sinusoidal strain signal generated on the fiber optic used as a distributed sensor.

————————————————————————-

Evard Amandine

Description

Rollomatic SA is a private Swiss company based in Le Landeron and specialising in the design and manufacturing of high precision CNC machines for production grinding of cutting tools, cylindrical grinding, and laser cutting of ultra-hard materials.

The company develops its own innovative and powerful software that comes with the machine and is designed by its team of engineers.

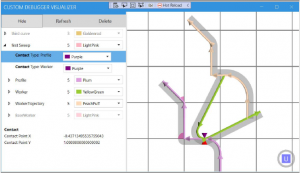

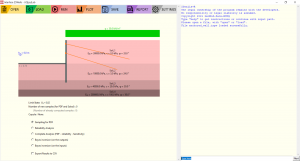

In this process, engineers manipulate virtual 3D objects to design tools of various shapes. When software engineers develop new operations for the machines, they need to be able to see the objects they are working with. My work focused on creating a tool that helps developers debug their code. The project, named Geometry Visualizer, enables software engineers to preview instances of 3D objects while debugging in Visual Studio.

I started by implementing a debugging tool plugin for Visual Studio. It allows engineers to retrieve the objects they want to visualize.

This capability is provided by Visual Studio and is split into two parts: the debuggee, which represents the current debugging session together with the 3D object being debugged, and the debugger, which receives this object from the debuggee and takes care of any further operation. In our case, the debugger sends the 3D object to a DataStore.

After that, I implemented the DataStore, an ASP.NET Console Application responsible for storing and persisting 3D objects for future use. I used a non-relational local database to store the 3D objects received from the debugged Visual Studio session.

Finally, the largest part of this project was to code the Client, a WPF .NET Application using the MVVM pattern, to display debugged objects. As shown in the image below, it can display objects in a 3D viewer. All the important information about an object, such as its position and length, is directly visible on clicking the corresponding component. The Client retrieves the 3D object to be displayed by querying the DataStore through a POST request. The code architecture was designed to be easily extended to support new types of 3D objects in the future. The final step of my project was to create an installer to simplify deployment of the software. To install the required components on their own machines, users just need to run the installer script.

Huy Thong

Description

The internship took place at the Laboratoire de recherche en neuroimagerie (LREN), CHUV. It consisted of two projects.

The first project aimed to build a framework to manage Magnetic Resonance (MR) images in a data folder, calculate several metrics from them, and detect and view outliers according to those metrics. The data is highly complex, as the scans of each subject can vary greatly in terms of number of visits, number of measurements per visit, type of MRI maps obtained (and derived), etc.

The second project concerned the diagnosis of Alzheimer’s disease from MRI by deep learning, with a focus on the models’ bias towards age (False Negatives are young while False Positives are old). I developed a framework to efficiently preprocess MRI data and to manage and merge data from different datasets into a richer dataset for model training and testing. With it, I observed that a more concrete train/test data splitting approach can greatly boost the model accuracy to a value comparable to the current literature, even when using lower resolution data. This became the baseline model for future work.

I am thankful for the guidance and the interesting introduction to the world of MRI.

Shad Durussel

Internship at GeoMod: Risks and Reliability in Civil Engineering

GeoMod ingénieurs conseils SA is a small company based in Lausanne. They are active in the field of numerical modelling applied to civil engineering and especially to geomechanical problems.

The tasks I had during this internship revolved around stochastic and probabilistic methods applied to geotechnics. Indeed, in civil engineering, probabilistic considerations are often taken into account in the norms in the form of safety factors increasing actions or reducing stability of a system. Yet they are not often explicitly present in safety analysis or optimization processes.

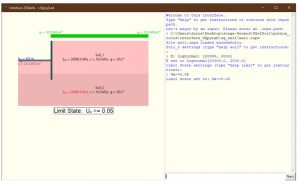

The tasks were split between two main projects. The first one was to design and implement a graphical user interface for reliability analysis of deep excavations. The language used for this framework was Python, and the software had to mediate between the user and the two main components of the analysis: a probabilistic toolbox for the reliability analysis and FEM software to solve the system.

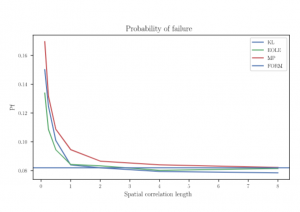

The second task was to study the use of random fields in computational mechanics and their integration into existing methods. I relied on lectures I had followed at EPFL and on the existing literature to implement several discretization methods for random fields in Python. These random fields were used to simulate the variability of physical properties in soils.

As an example, the case of the risk of landslide on an infinite slope was studied and is shown in the next figure.
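The infinite-slope case lends itself to a short illustration. The sketch below is not the code written during the internship: it uses the classical dry infinite-slope factor of safety and plain random variables instead of a discretized random field, and every numerical value (cohesion, friction angle, unit weight, depth, slope angle and their distributions) is a hypothetical choice made only for the example.

```python
import math
import random


def factor_of_safety(cohesion, friction_deg, unit_weight, depth, slope_deg):
    """Factor of safety of a dry infinite slope (no pore pressure).

    cohesion in kPa, unit_weight in kN/m^3, depth in m, angles in degrees.
    """
    beta = math.radians(slope_deg)
    phi = math.radians(friction_deg)
    normal_stress = unit_weight * depth * math.cos(beta) ** 2
    shear_stress = unit_weight * depth * math.sin(beta) * math.cos(beta)
    return (cohesion + normal_stress * math.tan(phi)) / shear_stress


def failure_probability(n_samples, seed=0):
    """Monte Carlo estimate of P(factor of safety < 1).

    The distributions below are assumptions for illustration only.
    """
    rng = random.Random(seed)
    failures = 0
    for _ in range(n_samples):
        cohesion = rng.lognormvariate(math.log(5.0), 0.3)  # kPa, hypothetical
        friction = rng.gauss(30.0, 3.0)                    # degrees, hypothetical
        fs = factor_of_safety(cohesion, friction,
                              unit_weight=18.0, depth=3.0, slope_deg=30.0)
        if fs < 1.0:
            failures += 1
    return failures / n_samples


if __name__ == "__main__":
    print("Estimated probability of failure:", failure_probability(10_000))
```

A random-field version would replace the two scalar draws by spatially correlated samples along the slope, which is where the discretization methods come in.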

These random field discretization methods were also used along with FEM simulations in an attempt to reproduce the behaviour of complex and non-homogeneous materials such as rocks and concrete.

Margherita Guido

About: https://us.pg.com

Description

Procter & Gamble is the world’s largest consumer goods company. I did my internship in the R&D department of their German Innovation Center near Frankfurt am Main.

My project was about developing a model for film-adhesive-woven fabric laminates, with the aim of defining the best combination of film and adhesives in the Company's current portfolio and guiding the development of lab tests that would predict failure in the context of hygiene product usage. I had a lot of responsibility from the very beginning: the organization and development of the whole project were assigned to me. This was really challenging, but I had great supervision throughout, and it allowed me to learn a lot, not only on the technical side but also about professional and personal aspects and how best to leverage them when working in a complex environment. During the project I had the chance to learn the commercial finite element software ABAQUS by attending a professional training course. Moreover, I appreciated the opportunity to conduct laboratory experiments under the guidance of expert scientists.

Damien Ronssin

Description

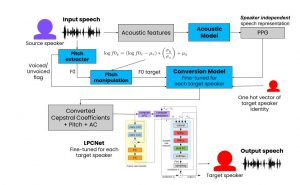

Logitech is a consumer electronics company based in the EPFL Innovation Park. Their main products are computer peripherals such as mice, keyboards and cameras. During my internship there, I worked on designing an algorithm able to perform voice conversion in real time. Voice conversion consists of transforming a speech audio signal so that the voice of the original speaker is changed into that of a target speaker, while keeping the linguistic content untouched. The best voice conversion methods are non-causal: they require a very large future look-ahead, which makes them unusable in real time. My project was to design a voice conversion system with a small future look-ahead and thus a small latency. I managed to obtain a working algorithm with a future look-ahead of 60 ms, which is small enough for the system to be used in online audio chats, for example.

The complete system is composed of three neural networks. The first (Acoustic Model) extracts phonetic information from the input speech. The second (Conversion Model) computes converted acoustic features from these phonetic embeddings. The last (Vocoder) synthesizes the output speech.

Thomas Fry

Description

DomoSafety's mission is to offer a preventive and emergency alerting service for elderly people. This service informs the family and/or the health care professionals (nurses, doctors) when early signs of fragility or an urgent incident have been detected. Motion detectors are installed in the different rooms of the flat or house, along with a door-opening detector.

My role as an intern was to produce a socialization score that monitors the time each beneficiary spends interacting with other people. I also contributed to an email service that automatically sends the daily score information to the beneficiary.

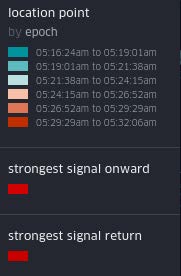

To build such a score, three components are gathered: the weighted time spent with visitors at home, the time spent outside and the number of outings. The time outside and the number of outings are easily deduced from the motion detectors and the door sensor: the door has been opened, then closed, and no motion is detected until the door is opened again. However, there is no simple metric for detecting visits. A prediction model was therefore built to predict whether there is a visit in the installation. This model was trained on data gathered from 20 installations between 2017 and 2018, then tested and improved until it was validated on other data. Below are illustrated the visit detection during a week and the production of the score with its components over different periods of time for an anonymous installation.
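The outing rule described above (door opened, then closed, with no motion until the door opens again) can be sketched as follows. This is only an illustration: the event format, the event names `door_open`, `door_close` and `motion`, and the helper names are assumptions, not DomoSafety's actual data model.

```python
def detect_outings(events):
    """Return (start, end) intervals during which the home is assumed empty.

    `events` is an iterable of (timestamp_seconds, kind) pairs, where kind is
    one of "door_open", "door_close" or "motion" (hypothetical event names).
    An outing runs from a door closing to the next door opening, provided no
    motion was detected in between.
    """
    outings = []
    last_close = None  # time of the latest door closing with no motion since
    for timestamp, kind in sorted(events):
        if kind == "door_close":
            last_close = timestamp
        elif kind == "motion":
            last_close = None  # somebody is inside, so this is not an outing
        elif kind == "door_open":
            if last_close is not None:
                outings.append((last_close, timestamp))
            last_close = None
    return outings


def outing_components(events):
    """Two of the three score components: time outside and number of outings."""
    outings = detect_outings(events)
    time_outside = sum(end - start for start, end in outings)
    return time_outside, len(outings)


if __name__ == "__main__":
    day = [
        (0, "motion"),
        (100, "door_open"), (110, "door_close"),    # leaves the flat
        (4000, "door_open"), (4010, "door_close"),  # comes back
        (4020, "motion"),
    ]
    print(outing_components(day))
```

The third component, the time spent with visitors, has no such simple rule, which is why it relies on a trained prediction model.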

Louise Aubet

Description

The internship was carried out at Empa, the Swiss Federal Laboratory for Materials Science and Technology. It is an interdisciplinary research institute for applied materials sciences and technology. I was working in the Laboratory for Air Pollution and Environmental Technology, in the research group on Atmospheric Modelling and Remote Sensing.

The project focused on the evaluation of air quality simulations over Europe using an atmospheric chemical transport model system called ICON-ART. This model has already been used for global applications but not for regional domains, so the aim of this project was to assess its performance in this configuration. I assembled the processing chain to run air quality simulations, including the integration of a set of chemical tracers using the MOZART-4 chemical scheme and the addition of online anthropogenic and biogenic emissions. The simulations were run using the computational resources of CSCS (Swiss National Supercomputing Centre). The outputs were compared with results from the previous model, COSMO-ART, and with measurements.

The first results are very promising: ICON-ART results correspond well to measurements, especially during low concentration periods. ICON-ART has more difficulty capturing high pollution episodes, but several potential solutions were presented. Furthermore, ICON-ART results are very similar to previous COSMO-ART results, and the agreement with measurements is better in some cases, such as concentrations at the location of Zürich. Vertical profiles from ICON-ART results are consistent with those from the MOZART-4 model.

Caroline Violot

Description

The goal of my internship at armasuisse was to gain a better understanding of the privacy risks and the benefits related to the use of contact tracing applications. SwissCovid, like many other contact tracing applications, was built upon the Exposure Notification (GAEN) API [6], developed by Google and Apple in their historic collaboration of 2020, which was unfortunately not open source.

First, I spent 5 days in the 5 largest cities in Switzerland (Lausanne, Geneva, Zurich, Basel and Bern) to collect WiFi, Bluetooth and Bluetooth Low Energy (BLE) signals, using a slightly modified version of an application called Wigle [3]. Then I analyzed the resulting dataset, searching for group privacy threats on the one hand and exploitable anomalies on the other.

The data collection was meant to reflect what some attackers might be able to do: the locations were all public, and the only equipment needed was a regular smartphone with a slightly modified open-source application. This minimalist set-up still allowed me to develop a better understanding of the populations found in the different locations, to determine the best place to collect the largest number of SwissCovid signals for potential attacks, to find a location privacy threat in trains by locating SwissCovid users among the passengers, and to find some inconsistencies between the GAEN API documentation and the data actually collected. Indeed, a comparison between the GAEN documentation and the obtained data indicated that some privacy claims were not respected, at least not for all types of device.

To understand these discoveries, one must first understand how tracing applications operate in general. Every 24 hours, a Temporary Exposure Key (TEK) is generated on the device and stored for 14 days. The Rolling Proximity Identifier Key (RPIK) is then derived from the TEK and is later used to derive the Rolling Proximity Identifiers (RPIs). Those RPIs, together with the Associated Encrypted Metadata (AEM), are then broadcast in BLE packets. When a device owner is diagnosed as positive for the coronavirus, the subset of TEKs covering the time window in which he/she could have been exposing other users is uploaded to the Diagnosis Server; these are called the diagnosis keys. The diagnosis keys in turn allow deriving all RPIs broadcast during the corresponding time window. Other users' devices regularly download the diagnosis keys, derive the corresponding RPIs and compare them with the RPIs they stored upon encountering them. If a match is found, they can decrypt the AEM, which encodes the transmitting power. This is used along with the received signal intensity and a calibration process to evaluate the attenuation of the signals, translate it into a distance and thereby estimate the risks associated with the encounter.
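The first steps of this key schedule can be sketched with the Python standard library alone. The constants and labels below (10-minute intervals, 144 intervals per TEK, the `EN-RPIK` and `EN-RPI` strings, HKDF with SHA-256 and no salt) follow my reading of the public GAEN cryptography specification and should be treated as assumptions to be checked against it; the function names are mine. The final AES-128 encryption that turns the padded block into an RPI is deliberately left out, since it needs a third-party cryptography library.

```python
import hashlib
import hmac

INTERVAL_SECONDS = 600     # one interval number covers 10 minutes (assumed)
TEK_ROLLING_PERIOD = 144   # 144 intervals = 24 hours (assumed)


def en_interval_number(unix_time):
    """Index of the 10-minute window containing `unix_time`."""
    return unix_time // INTERVAL_SECONDS


def tek_start_interval(unix_time):
    """First interval number covered by the TEK valid at `unix_time`."""
    interval = en_interval_number(unix_time)
    return (interval // TEK_ROLLING_PERIOD) * TEK_ROLLING_PERIOD


def hkdf_sha256(key_material, info, length):
    """HKDF (RFC 5869) with an empty salt, for outputs of at most 32 bytes."""
    if length > 32:
        raise ValueError("this sketch only supports a single HKDF block")
    # Extract: an absent salt is replaced by a string of zero bytes.
    prk = hmac.new(b"\x00" * 32, key_material, hashlib.sha256).digest()
    # Expand: the first (and only) output block.
    block = hmac.new(prk, info + b"\x01", hashlib.sha256).digest()
    return block[:length]


def derive_rpik(tek):
    """Rolling Proximity Identifier Key derived from a 16-byte TEK."""
    return hkdf_sha256(tek, b"EN-RPIK", 16)


def rpi_plaintext(interval_number):
    """16-byte padded block that is AES-encrypted under the RPIK to give an RPI."""
    return b"EN-RPI" + bytes(6) + interval_number.to_bytes(4, "little")


if __name__ == "__main__":
    tek = bytes(range(16))  # dummy key, for illustration only
    print(derive_rpik(tek).hex(), rpi_plaintext(en_interval_number(1_600_000_000)).hex())
```

Because every RPI is a deterministic function of the TEK and the interval number, publishing a diagnosis key is enough for any device to recompute the identifiers broadcast during that day.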

With this in mind, the following discoveries were made: the time before an RPI change could go well above the normal rotation period, the RPI change was not always synchronized with the MAC address change, the MAC address was not always of the private random non-resolvable type, and a significant number of MAC addresses were used for GAEN signals as well as for non-GAEN signals, which was in total contradiction with the privacy claims of the GAEN.

Figure 1: Train experiment data, represented using kepler.gl. The experiment consisted of going from one end of the train to the other and back, at constant speed, with a two-minute interruption at the end of the train. The MAC addresses that were seen in both passages are highlighted in red and are matched with each other by the white arcs. By walking through the whole train twice, we were able to show visually that this environment allows us to determine the positions of the emitting devices by observing the symmetry between the forward and return positions.

Daniele Hamm

Description

Empa (the Swiss Federal Laboratories for Materials Science and Technology) is an interdisciplinary research institute belonging to the ETH domain. Its numerous laboratories conduct cutting-edge research, driving materials and technology innovation. During my internship, I participated in the research carried out by the Laboratory for Advanced Materials Processing, which is currently dedicated to the study of laser processing.

Our project focused on laser welding, a technique that uses a laser beam to join pieces of material. The weld forms because the concentrated heat provided by the laser melts the pieces, which then join together when they solidify. In this project, we explored the possibility of obtaining a physically informed digital twin of laser welding. Such a tool would be of great practical use, since it would make it easy to tune the processing conditions that lead to a targeted quality of the processed materials. Our project represented the first step towards this ambitious goal.

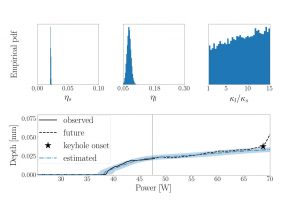

One important limiting factor in the development of a digital twin of laser processing is that some physical properties are missing. We therefore built a framework combining physical modelling, machine learning and experimental data to infer the values of the missing properties that allow us to reproduce the observed data with the proposed physical model. We are thus confronted with the inverse problem of recovering the parameters of a PDE (partial differential equation), given the observed experimental output. Focusing on the titanium alloy Ti6Al4V, we tried to reproduce a benchmark experiment by fitting a set of model parameters. Our promising results suggest that the physical model can accurately reproduce experimental results if carefully tuned, as we show in Figure 1.
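The structure of such an inverse problem can be shown on a toy example. The sketch below is not the framework built during the project: the forward model is a hypothetical one-line stand-in for the PDE solver, the single unknown parameter and the observations are synthetic, and the inference is a simple grid-based Bayesian posterior with a flat prior and Gaussian noise.

```python
import math


def forward_model(power, parameter):
    """Hypothetical stand-in for the PDE solver: predicted depth for a laser power."""
    return power / parameter


def grid_posterior(powers, depths, candidates, noise_std):
    """Posterior probability of each candidate parameter value.

    Assumes a flat prior over `candidates` and independent Gaussian
    measurement noise with standard deviation `noise_std`.
    """
    log_likelihoods = []
    for value in candidates:
        residuals = [d - forward_model(p, value) for p, d in zip(powers, depths)]
        log_likelihoods.append(-0.5 * sum(r * r for r in residuals) / noise_std ** 2)
    peak = max(log_likelihoods)  # subtract the maximum for numerical stability
    weights = [math.exp(v - peak) for v in log_likelihoods]
    total = sum(weights)
    return [w / total for w in weights]


if __name__ == "__main__":
    powers = [100.0, 200.0, 300.0]
    depths = [forward_model(p, 4.0) for p in powers]  # synthetic observations
    candidates = [2.0, 3.0, 4.0, 5.0, 6.0]
    for value, prob in zip(candidates, grid_posterior(powers, depths, candidates, 5.0)):
        print(f"parameter = {value}: posterior = {prob:.3f}")
```

With a real PDE solver each forward evaluation is expensive, which is one reason to bring in machine learning rather than a brute-force grid.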

Figure 1: Bottom plot: in black, the experimentally measured evolution of the melt pool depth as the laser power is increased. The observed data (solid black line) are used to fit the missing physical properties s; l; l=s, which leads to the estimated distributions shown in the three upper plots. These estimates are then used to predict the evolution of the melt pool depth during the whole experiment, providing suitable confidence intervals (dash-dotted blue line and shaded areas). We can thus appreciate the predictive power of the fitted physical model, which accurately reproduces both the observed data and the future data left for testing.

Igor Abramov

Description

The internship was carried out at AutoForm, a leading provider of software for product manufacturability, tool and material cost calculation, die face design and virtual stamping, as well as BiW assembly process optimization. Over the last twenty years, AutoForm’s innovations have repeatedly revolutionized the die-making and sheet metal forming industries.

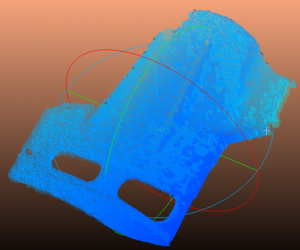

As an intern at AutoForm, I worked in the research and development department. The main goals of the R&D department are the development of new and advanced CAD/CAE applications and the exploration of innovative technologies to improve the quality of the results obtained and simplify the manufacturing process. The project I was involved in aimed to explore the capabilities and precision of modern depth and lidar cameras, as well as possible ways to use them to reconstruct 3D beads and part boundaries from images with depth maps.

My work during the project can be divided into several stages:

– Analysis of information on available hardware and software for depth data collection and processing. The main result of this step was the choice of the most suitable equipment for the project.

– Generation of synthetic depth data and familiarization with existing depth data processing libraries. The main aim of this step was to get familiar with the Point Cloud Library while being able to generate all the necessary data without any noise.

– Exploration of existing open-source projects related to depth data registration and processing. As the data about the camera position was too noisy, it was necessary to review possible ways of combining the depth images obtained to reconstruct specific geometry features.

– Organization of a series of experiments to find optimal settings for depth and lidar cameras to achieve the best depth measurement accuracy.

– Development of RGB-D data processing pipeline for specific use cases.

Jonas Morin

Description

This internship was carried out at GeoMod ingénieurs conseils SA, a company that provides counselling and analysis in the field of control, optimisation and numerical modelling of complex civil and environmental engineering structures. It uses nonlinear finite-element software, ZSoil[1], to provide in-depth analysis of the behaviour of soil and engineering structures.

The main focus of the internship was to create an interface that provided ZSoil with multiple tools from the probability toolbox UQLab[2] and presented them in a way that civil engineers with no programming background could understand. These tools included sensitivity and reliability analysis through Monte Carlo simulations, Bayesian inversion and Sobol analysis with PCE meta-modelling.

Preliminary work on describing the soil parameters with random fields was also done, as a first step towards even better modelling of soil behaviour. These probabilistic approaches showed promising results in the field of geotechnics.

[1] ZSoil.PC User Manual (2020). Rock, Soil and Structural Mechanics in dry or partially saturated media. ZACE Services Ltd, Software Engineering, Lausanne, Switzerland, 1985-2020.

[2] Marelli S., Sudret B. (2014). UQLab: A Framework for Uncertainty Quantification in MATLAB, in The 2nd International Conference on Vulnerability and Risk Analysis and Management (ICVRAM 2014), University of Liverpool, United Kingdom, July 13-16, pp. 2554-2563.

Sofia Dandjee

Description

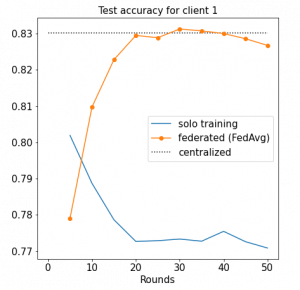

Inpher is a company that specialises in privacy-preserving data analytics such as multiparty computation and homomorphic encryption. The company employs about 30 people and has offices at the Innovation Park in Lausanne for the engineering team and in the US for the business team. The goal of my internship was to analyse and improve machine learning algorithms in the context of Federated Learning (FL). FL is a decentralised training strategy in which clients who hold sensitive and private data collaborate to train a single global model without sharing their data. In centralised training, data is assumed to be IID (independently and identically distributed); this is unlikely to hold for FL in practice, because the data distributions differ from one client to another.
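To make the idea concrete, here is a minimal sketch of federated averaging (FedAvg), a common baseline for FL and not necessarily one of the optimisers implemented during the internship. The one-parameter model (estimating a mean), the learning rate and the client data are all hypothetical; the point is only that clients send model parameters, never their raw data.

```python
def local_update(weight, data, learning_rate=0.5, steps=2):
    """A few gradient steps on the client's own data.

    Toy model: a single parameter fitted by minimising the mean squared
    distance to the client's samples.
    """
    local_mean = sum(data) / len(data)
    for _ in range(steps):
        gradient = weight - local_mean
        weight -= learning_rate * gradient
    return weight


def federated_average(client_weights, client_sizes):
    """Average the client parameters, weighted by local dataset size."""
    total = sum(client_sizes)
    return sum(w * n for w, n in zip(client_weights, client_sizes)) / total


def train(clients, rounds=50, initial_weight=0.0):
    """Run FedAvg rounds; only parameters travel between clients and server."""
    global_weight = initial_weight
    sizes = [len(data) for data in clients]
    for _ in range(rounds):
        updates = [local_update(global_weight, data) for data in clients]
        global_weight = federated_average(updates, sizes)
    return global_weight


if __name__ == "__main__":
    # Two clients with very different (non-IID) data.
    clients = [[1.0, 1.0, 1.0, 1.0], [5.0, 5.0]]
    print("Global parameter after training:", train(clients))
```

In this toy setting the global parameter converges to the mean of all the data pooled together, even though the two clients have very different local distributions; with real neural networks, non-IID data makes this much harder, which is what the federated optimisers try to address.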

I implemented several state-of-the-art federated optimisers and applied them to recurrent neural networks for sentiment analysis and next character prediction. I spent most of my time conducting experiments to tune the parameters of the federated algorithms so that they perform as well as centralised training. The benefits of federated learning (training with all of the clients’ data) over solo training (training with only one client’s data) are shown in the figure below. Finally, I designed a server-client framework that was integrated into the company’s product. Despite working remotely most of the time, I enjoyed presenting my work to my colleagues and taking part in regular brainstorming meetings. This internship was an opportunity for me to sharpen my coding skills (in Python and TensorFlow) and gain professional experience in a software company.

Nataliia Molchanova

Description

The Campus Biotech in Geneva houses neuroscience laboratories from the Swiss Federal Institute of Technology in Lausanne (EPFL) and from the University of Geneva. Within this framework, the campus's electroencephalography and brain-computer interface facility (EEG-BCI facility) provides state-of-the-art equipment, technology and expertise to the labs it hosts.

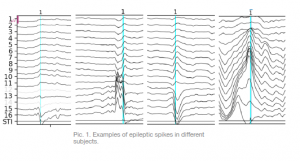

The current project was requested by a laboratory of the University of Geneva that conducts research on epilepsy. Electroencephalography (EEG) is widely used in this research. However, manual annotation of hourly EEG recordings by specialists is very time-consuming. For this reason, the laboratory asked the EEG-BCI facility to provide an automated pipeline for the detection of EEG events, such as interictal epileptic spikes.

Recent research shows that machine learning algorithms outperform conventional methods of epileptic spike detection. For that reason, different machine learning algorithms were investigated for this task during the internship. An important part of the project was dedicated to signal processing, in order to address the low-SNR issue, remove subject-specific features of the recordings, find a better representation of spikes and extract features that would be meaningful for machine learning classifiers.

Huajian Qiu

Description

ABB is a Swedish-Swiss multinational corporation headquartered in Zurich, Switzerland, operating mainly in four business areas: Electrification, Robotics & Discrete Automation, Process Automation and Motion. The internship took place in the group “Industrial Software Systems” in the ABB Corporate Research Center in Baden-Dättwil, Switzerland, which creates power and automation technologies. I joined a team consisting of several senior researchers and a few interns. We worked closely with each other, following a routine of monthly sprints and daily standup meetings. The team encouraged cooperation and had a pleasant, open-minded atmosphere.

The objective of the team is to carry out a research project on virtualised real-time software. Real-time software is a time-bounded application with well-defined, fixed time constraints. Processing must be finished within the defined constraints in each cycle, or the application will fail. In computing, virtualisation is a process that adds an additional layer of abstraction on top of an operating system or hardware. Although virtualisation has many benefits, such as isolation between applications and easy deployment, it introduces additional overhead and reduces the timing performance of the real-time application. A careful choice of virtualisation technology, the implementation of supporting utilities and the evaluation of different system setups are therefore critical for our project.
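The per-cycle timing constraint can be illustrated with a small sketch. It is not part of the ABB testbed: the function names, the microsecond units and the sample measurements are hypothetical, and the overhead metric (ratio of worst-case cycle times) is just one plausible way to compare a virtualised setup with a native one.

```python
def deadline_report(cycle_times_us, deadline_us):
    """Summarise how a series of measured cycle times meets a fixed deadline."""
    if not cycle_times_us:
        raise ValueError("at least one cycle measurement is required")
    missed = [i for i, t in enumerate(cycle_times_us) if t > deadline_us]
    return {
        "cycles": len(cycle_times_us),
        "missed_cycles": missed,  # indices of cycles that overran the deadline
        "miss_ratio": len(missed) / len(cycle_times_us),
        "worst_case_us": max(cycle_times_us),
    }


def worst_case_overhead(native_us, virtualised_us):
    """Ratio of worst-case cycle times, virtualised over native."""
    return max(virtualised_us) / max(native_us)


if __name__ == "__main__":
    native = [410, 405, 420, 415]        # hypothetical measurements
    virtualised = [450, 470, 1020, 460]  # hypothetical measurements
    print(deadline_report(virtualised, deadline_us=1000))
    print("worst-case overhead:", worst_case_overhead(native, virtualised))
```

For hard real-time applications the worst case matters more than the average: a single missed cycle counts as a failure, however good the mean cycle time is.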

Based on this context, my field of action included the following tasks:

- Implementing tools and support utilities for a software testbed in Python, including front-end parts in JavaScript.

- Improving the testbed’s robustness by remodelling the complex error handling scheme

- Evaluating the performance of virtualised real-time software under different conditions

- Presenting results to research and business colleagues

Overall, this was a pleasant and enjoyable experience. I experienced encouraging professional and personal growth during this internship. I definitely recommend ABB to others for an internship or a thesis.

Sergei Kliavinek

Description

From July to October I did an internship at CSCS (Swiss National Supercomputing Centre) in the ReFrame team. ReFrame is a framework for writing regression tests that makes developing such tests much easier for users. During the internship, hard skills such as programming, code review and documentation can be significantly improved. In addition, one can gain deep knowledge of regression testing and of the ReFrame package, as well as soft skills such as working in an innovative IT environment, following a group project development process, interacting with colleagues and presenting one's work. The teamwork was wonderful. I was worried that working in industry would put a lot of pressure on me. As it turned out, it was just the opposite. My mentors were supportive and helpful, and on the whole created an extremely pleasant working environment. Although most of the internship was online, the work was fruitful. I was able to go twice for a few days to Ticino, where the organisation is located, meet the team and have a great time. The organisation itself is very well run, provides support with all the necessary applications, and helped me get up and running very quickly. I highly recommend an internship at CSCS: you will gain invaluable experience and work with wonderful people.

Louis Jaugey

Description

Lyncée Tec is the leading company in Digital Holographic Microscopy (DHM), a technology developed at EPFL. This microscopy technique allows the focus of an image to be changed as a post-processing step, providing 3D information (light intensity and phase) from an initial 2D image (hologram). It can be used in reflection or transmission mode, leading to applications ranging from surface analysis and MEMS (microelectromechanical systems) to biology.

During my internship, I had to prototype a 4D (space + time) tracking algorithm for “micro-swimmers” (such as some types of bacteria). DHM allows non-invasive observation of living micro-organisms, which means that there is no need to use a contrast agent or a high-energy laser. This makes it particularly well suited to the study of micro-swimmer motility, since the organisms can move freely, as they would in their natural environment. Having a fully automated tracking algorithm is paramount in such applications. The main challenges of the tracking are the segmentation of the different objects, the search for the best focus and the tracking itself.

I had the opportunity to work in a small, multi-disciplinary team with very agreeable people. Each member of the team had their own area of expertise, and they were all open to answering my questions.