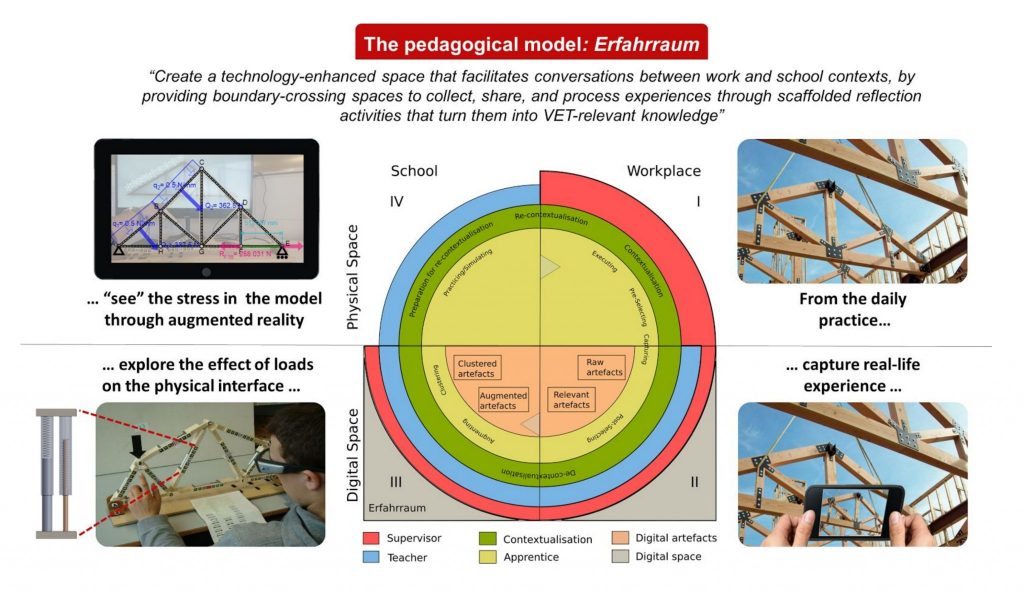

The fundamentals of statics are a well-known part of STEM curricula, but they are also crucial for many crafts, such as carpentry, metalworking and construction, although the required mastery is supposed to be mostly qualitative. In the context of vocational training, how can we help apprentices acquire such skills without the burden of physics equations and mathematics? We believe that exploring such concepts through hands-on activities is the key: the project aims at developing a tangible user interface which allows students to make sense of statics by manipulating models of common structures (roof, house frame, etc.) and inquiring into the behaviour of the structural elements when loads are applied.

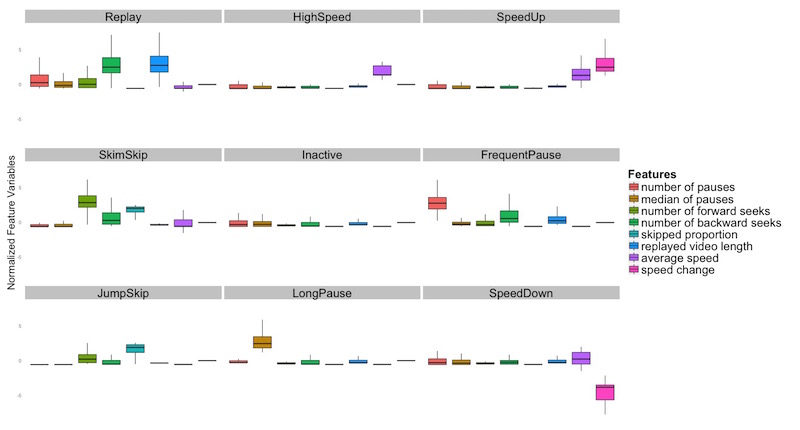

Video watching is the central activity in MOOC learning, so it is of great relevance for instructors to understand how students interact with videos as well as how they perceive them. Existing MOOC research literature lacks click-level video analysis in this regard.

Our research aims to fill the gap by providing an empirical investigation with two research questions: (1) Do in-video interactions reflect students’ perceived video difficulty? (2) If yes, then how is the perceived difficulty related to different types and intensities of interactions?

MOOC Video Interaction Patterns: What Do They Tell Us?

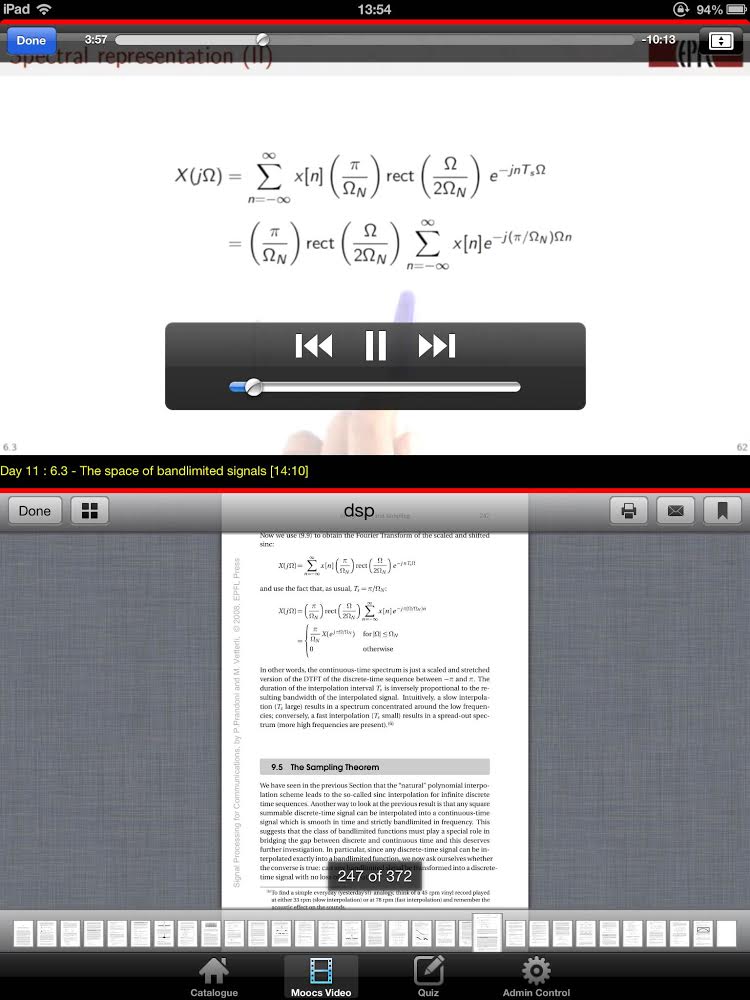

2015. 10th European Conference on Technology Enhanced Learning, Toledo, Spain, 15-18, 2015.

When someone attends a lecture in a classroom, he or she may sometimes be unable to follow what the teacher has said. Worse, without understanding it, the content that follows becomes even harder to understand. A student will typically either search for information in the textbook or interrupt a neighbor for a discussion. Neither is good practice, because the student then misses more of the teacher’s live content.

With MOOCs, can we change this situation? Students have lecture videos that allow them to pause and rewind easily. However, what is missing from the scenario above is helpful information from fellow students or a textbook to clarify the video. Our research is built upon this motivation. We built a MOOC-BOOK player which automatically links textbook content with MOOC videos to help students study in groups.

Augmenting Collaborative MOOC Video Viewing with Synchronized Textbook

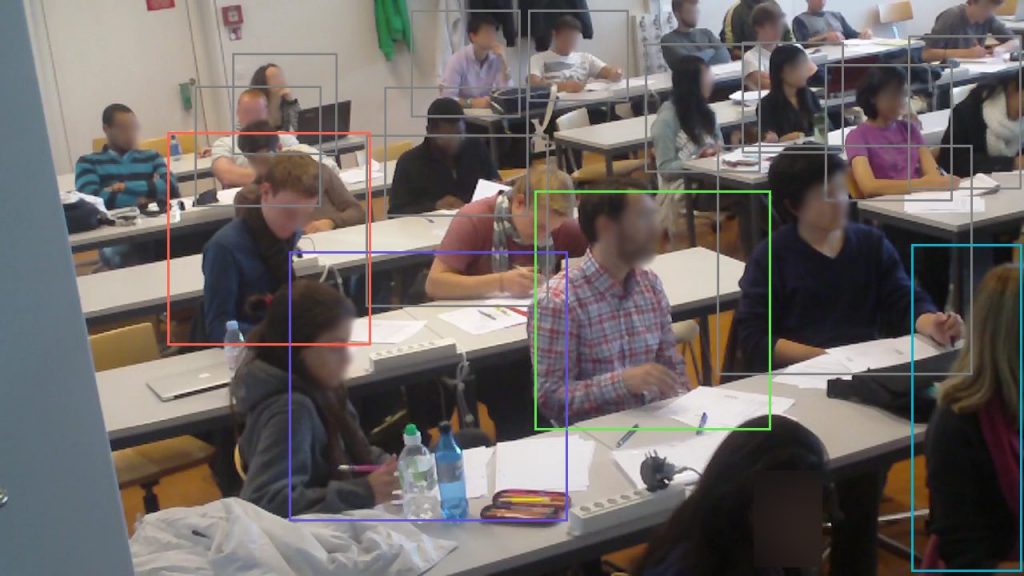

2015. 15th IFIP TC.13 International Conference on Human-Computer Interaction (INTERACT), Bamberg, Germany, September 14-18, 2015. p. 81-88. DOI : 10.1007/978-3-319-22668-2_7.

The aim of this project is to give feedback to teachers without interfering with their lecture. We use a set of computer vision technologies to analyse how the students are reacting to the lecture and to help teachers find weak points in their presentation. Using a system of cameras, we observe how the students are moving and where they are looking, and we try to represent this information to the teacher in order to make reflection-in-action and reflection-on-action easier.

Camera-based estimation of student’s attention in class

Lausanne, EPFL, 2015.

Despite multiple efforts directed at making schools all-digital, paper is still far from disappearing from our classrooms. This is especially true in primary schools, as many parents and teachers consider that paper-based skills like handwriting should still have a prominent role in the education of children. Paper is tangible, cheap, familiar, reliable, easy to carry around, so… how can we leverage this and integrate paper with digital technologies, complementing each other’s strengths? The Ladybug game is a proof-of-concept of such paper-based user interfaces for primary school maths.

Learning technologies are still underused in our classrooms, mostly because they are not easy to scale and integrate (or, as some researchers put it, ‘orchestrate’) in everyday classroom conditions. In this project we study in detail the effort and actions of a teacher coordinating learning activities in the face-to-face classroom, using sensors such as mobile eye-trackers. This can help us understand what parts of the orchestration need more support, and teachers can use visualizations of this information for their own reflection too, as a sort of “Classroom Mirror”.

Lantern is a small portable device which consists of five pairs of LEDs installed on a stub-shaped PCB covered by a blurry plastic cylinder, and one microprocessor to control the LEDs. Each Lantern shows the status of one collaborator. The user can turn or press the Lantern, which changes its color or switches its blinking mode, respectively. Every user interaction is recorded and can be transmitted through the USB port for offline analysis.

An Ambient Awareness Tool for Supporting Supervised Collaborative Problem Solving

IEEE Transactions on Learning Technologies. 2012. Vol. 5, num. 3, p. 264-274. DOI : 10.1109/Tlt.2012.7.

Reflect is an interactive meeting table that monitors the conversation taking place around it via a three-microphone beamforming array, and uses a matrix of 8×16 multi-color LEDs to display information in different forms. We studied two versions of the table. The first shows the amount of speech of each participant, and our studies showed that, under certain conditions, participants become more balanced in terms of how much they speak when their participation levels are displayed on the surface of the table. This is beneficial in situations where balanced participation is necessary for good collaboration, such as collaborative learning, where all learners need to participate more or less equally to improve learning gains for the group.

Augmenting Face-to-Face Collaboration with Low-Resolution Semi-Ambient Feedback

Lausanne, EPFL, 2010.

The Tinker table is a tabletop learning environment which allows apprentices to build small-scale models of a warehouse using physical objects like shelves, docks and rooms as well as pillars. It consists of a projector and camera mounted in a metal casing which is suspended above a regular classroom table by an aluminium gooseneck. Shelves, pillars and docks are scaled at 1:48. The purpose of the camera is to track the position of objects on the table and transfer this information to a computer running a logistics simulation. The position of each object is obtained thanks to fiducial markers. The projector is used to project information on the table and on top of the objects, indicating for example the accessibility of the content of each shelf or security zones around obstacles.

We conducted a content analysis of online forums about Roomba, AIBO, and the iPad to examine the usage and context of anthropomorphic language when writing about different types of technologies/robots. We investigated topics of interest to robot owners and how various language cues were used to relate to different kinds of interactive devices. The study revealed that anthropomorphism seems to be related to the shape, functionality, and interaction possibilities of a device, as well as to the topic of the conversation.

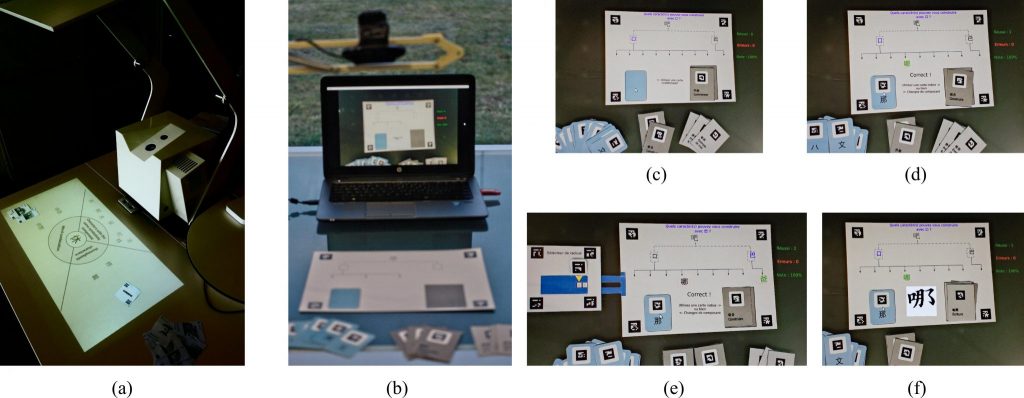

Most technologies designed for language learning in the classroom, especially those meant to improve vocabulary learning, consider only individual learning or disregard formal classroom learning. Hanzible is a paper-based tangible application that supports collaborative Chinese character learning in the classroom. It is specifically designed to enable exploration of the different aspects of Chinese characters (calligraphic stroke order, sound, structure, Latin transcription), as well as to foster discussion among students.

The source code for the Hanzible prototype can be found on GitHub.

GroundIt and InstaFeedback are solutions that help larger teams to increase their mutual knowledge in real-time while in a meeting or a presentation.

These solutions were created to tackle the lack of mutual knowledge awareness in group collaboration, which is detrimental to achieving the desired outcomes and successfully completing given projects.

Conceptually, GroundIt was loosely built on the theory of Grounding by Clark. To ensure the use of the technology is appropriate for the type of meeting people have, two evolutions of this system (InstaFeedback and CooPilot) have been built.

InstaFeedback is a tool that redesigns the notion of a questionnaire, enabling meetings with a larger number of attendees or asymmetric participation to benefit from mutual knowledge awareness. It provides short questions that people answer on the spot, and gives everyone anonymous, real-time (instant) feedback on all the responses and generated content.

CooPilot is a joint research effort of EPFL and UNIL in Switzerland, aimed at improving meetings by increasing the awareness of mutual knowledge. Teams or task forces in companies or organizations benefit most from CooPilot when using it in their regular meetings (weekly, monthly, etc.).

In 2011, we carried out a 6-month ethnographic study with nine households to which we gave a domestic service robot (a Roomba vacuum cleaning robot). We studied people’s perception of the robot, and how usage changed over time. We also investigated (social) dynamics between the robot, members of the household, other cleaning tools, and the environment. Finally, we identified several factors that impact the acceptance and process of adoption of robots in homes.

The study contributes to a general understanding of (long-term) HRI in homes and can serve as a basis to make suggestions for the design of domestic robots.

Collaborators: Julia Fink, Valérie Bauwens, Frédéric Kaplan, Pierre Dillenbourg

Our acknowledgments go to all the families and households that participated in the study and to iRobot Switzerland for the discount on the Roombas.

Publications and Presentations:

- F. Vaussard, J. Fink, V. Bauwens, P. Rétornaz, D. Hamel, P. Dillenbourg and F. Mondada. Lessons Learned from Robotic Vacuum Cleaners Entering in the Home Ecosystem. Robotics and Autonomous Systems, 2013. (accepted for publication)

- J. Fink, V. Bauwens, F. Kaplan and P. Dillenbourg. Living With a Vacuum-Cleaning Robot. A 6-month Ethnographic Study. International Journal of Social Robotics, vol. 5, num. 3, 2013, pp. 389-408, Springer

- V. Bauwens and J. Fink. Will Your Household Adopt Your New Robot?. Interactions, vol. 19, num. 2, March + April 2012, pp. 60-64.

- J. Fink, V. Bauwens. A Robot at Home? People’s Perception of a Domestic Service Robot. Invited Talk at international workshop “Bridging the Robotics Gap – Bringing Together Ethicists and Engineers”, Enschede, The Netherlands, July 11-12, 2011.

- J. Fink, V. Bauwens, O. Mubin, F. Kaplan and P. Dillenbourg. HRI in the home: A Longitudinal Ethnographic Study with Roomba. Poster at the 1st Symposium of the NCCR robotics, ETH Zürich, Zurich, Switzerland, June 16, 2011.

- J. Fink, V. Bauwens, O. Mubin, F. Kaplan and P. Dillenbourg. People’s Perception of Domestic Service Robots: Same Household, Same Opinion?. Proceedings of the 3rd International Conference on Social Robotics, ICSR 2011, Amsterdam, The Netherlands, November 24-25, 2011, LNCS 7072, Springer, pp. 204-213.

In a joint project with the Biorobotics Laboratory (BIOROB) at EPFL, we assisted in the evaluation of a graphical user interface to arrange adaptive furniture composed of modular robots (“Roombots”). For more information about the Roombots modular robots, please visit the respective project web page: Mobile control interface for modular robots

Publications and Presentations:

- S. Bonardi, J. Blatter, J. Fink, R. Möckel, P. Jermann, P. Dillenbourg, A. Ijspeert. Design and Evaluation of a Graphical iPad Application for Arranging Adaptive Furniture. Proceedings of the 21st IEEE International Symposium on Robot and Human Interactive Communication, Ro-MAN 2012, Paris, France, September 9-13, 2012 (link)

“Ranger” tries to motivate children to tidy up their toys

In 2012, we conducted a series of Wizard-of-Oz field experiments with 14 families to evaluate a robot prototype for home use. The robotic box “Ranger” is developed in collaboration with the Robotic Systems Laboratory (LSRO) at EPFL, and made for young children to encourage them to tidy up their room. Some impressions from our kick-off “interaction design workshop” at ZHdK can be found here: ZHdK Blog Robots for Life. In our field study with the first “Ranger” prototype, we investigated child-robot interaction in a tidying-up scenario, depending on how actively the robot behaved (proactive vs. reactive behavior). We were also interested in parents’ feedback on the robot’s design and the general approach.

After this first development-evaluation cycle, we are currently working on a refined version of the Ranger box with which we will conduct further human-robot interaction studies.

Collaborators: Florian Vaussard, Philippe Rétornaz, Alain Berthoud, Francesco Mondada, Florian Wille (ZHdK), Karmen Franinović (ZHdK), Julia Fink, Séverin Lemaignan, Pierre Dillenbourg

We thank all parents and children who participated in the field-study, as well as Ecole Vivalys for their collaboration.

During 2013, while working on a refined prototype of Ranger, we carried out a series of child-robot interaction studies with 30 young children (4-5 years old) interacting with Ranger. One of our main goals in this study was to investigate the effects of unexpected robot behavior on children’s engagement with the robot, and on the perceived cognitive abilities of the robot. We created a playful scenario in which two children assemble dominos together with the robot. As the two children are in different parts of the room, Ranger is used to transport the dominos between them. Usually, when a child called the robot to come over, it would simply follow the request and approach the child. However, from time to time, we manipulated the robot’s behavior such that it would go somewhere else and eventually “get lost” in one corner of the room. This robot behavior was supposed to be unexpected for the children, and we assumed it might increase children’s engagement with the robot, as well as the extent to which they perceive it as having “its own will” (and ascribe intentionality to it).

Collaborators: Julia Fink, Séverin Lemaignan, Pierre Dillenbourg, Florian Vaussard, Philippe Rétornaz, Francesco Mondada

We thank all parents and children who participated in the study, as well as Ecole Vivalys for their collaboration.

Publications and Presentations:

- J. Fink, S. Lemaignan, P. Dillenbourg, P. Rétornaz, F. Vaussard, A. Berthoud, F. Mondada, F. Wille, K. Franinović. Which Robot Behavior Can Motivate Children to Tidy up Their Toys? Design and Evaluation of “Ranger”. 9th ACM/IEEE International Conference on Human-Robot Interaction (HRI) 2014, Bielefeld, Germany, March 3-6, 2014 (link)

- J. Fink, F. Vaussard, P. Rétornaz, A. Berthoud, F. Wille, F. Mondada, and P. Dillenbourg. Motivating Children to Tidy up their Toys with a Robotic Box. Presentation and Poster at the 8th ACM/IEEE International Conference on Human-Robot Interaction (HRI) 2013, Pioneers Workshop, Tokyo, Japan, March 3, 2013 (link)

- J. Fink, F. Vaussard, P. Rétornaz, A. Berthoud, F. Mondada, and P. Dillenbourg. How Children Tidy up Their Room with “Ranger” the Robotic Box. Poster at the 2nd site-visit of the NCCR robotics, ETH Zürich, Zurich, Switzerland, October 24-26, 2012 (link)

ReflectWorld is an ecology of participation enhancement tools for meetings. Built around an iOS version of the Reflect Table, it is a cloud service that enables real-time participation data sharing (Reflect All), real-time questionnaire and feedback collection (Reflect You) and real-time meeting analysis (Reflect Meeting Analyzer), and it has legacy support for the Reflect Table.

ReflectWorld: A Distributed Architecture for Meetings and Groups Evolution Analysis

2012. The 2012 International Conference on Collaboration Technologies and Systems, Denver, Colorado, USA, May 21-25, 2012. p. 389-396.

CatchBob! is an experimental platform in the form of a mobile game for running psychological experiments. It is designed to elicit collaborative behavior of people working together on a mobile activity. It was Nicolas Nova’s PhD research project that is now completed.

Running on a mobile device (iPAQ, Tablet PC), it’s a collaborative hunt in which groups of three persons have to find and circle a virtual object on our campus.

The platform

We set up a mobile game in which groups of 3 team-mates have to solve a joint task. The aim of the game for the participants is to find a virtual object on our campus and surround it with a triangle. They are provided with a location-based tool running on an iPAQ or a Tablet PC. This tool allows each person to see the location of his or her partners as a colored dot on the campus map. Figure 1 shows a screenshot of the location awareness tool. Another meaningful piece of information given by this tool is whether the user is close to or far from the object: a proximity sensor. In addition, the tool also enables simple communication: if a participant points at a dot (that represents a person) with his/her stylus, (s)he can draw a vector that corresponds to a direction proposition for his/her partner: “go in this direction”. Another communication feature is the possibility to write messages and broadcast them to the partners.
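The "surround it with a triangle" goal and the close/far proximity hint can be illustrated with a little plane geometry. The following is only a sketch under stated assumptions: the function names, the flat 2D coordinates and the `threshold` value are hypothetical, and the actual CatchBob! rules (for example any limit on triangle size) are not described here.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # assumed: (x, y) position on a flat campus map


def _cross(o: Point, a: Point, b: Point) -> float:
    """Z-component of the cross product (a - o) x (b - o)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def is_surrounded(obj: Point, p1: Point, p2: Point, p3: Point) -> bool:
    """Return True if obj lies inside (or on the edge of) triangle p1-p2-p3.

    The object is inside when it sits on the same side of all three edges,
    i.e. the three cross products never have strictly opposite signs.
    """
    d1 = _cross(p1, p2, obj)
    d2 = _cross(p2, p3, obj)
    d3 = _cross(p3, p1, obj)
    has_negative = d1 < 0 or d2 < 0 or d3 < 0
    has_positive = d1 > 0 or d2 > 0 or d3 > 0
    return not (has_negative and has_positive)


def proximity_hint(user: Point, obj: Point, threshold: float) -> str:
    """Hypothetical close/far hint: 'close' within threshold, else 'far'."""
    distance = math.hypot(user[0] - obj[0], user[1] - obj[1])
    return "close" if distance <= threshold else "far"
```

For instance, with three players at `(0, 0)`, `(4, 0)` and `(0, 4)`, an object at `(1, 1)` counts as surrounded while one at `(5, 5)` does not. Note that this simple test also accepts a degenerate (collinear) triangle when the object lies on the same line, which a real game would probably want to reject.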

As depicted in this architecture, the WiFi-enabled clients (iPAQ or Tablet PC) determine their location entirely privately, without constant interaction with a central service. They use the data provided by an IEEE 802.11 network auditor such as CraftDeamon and PlaceLab. Position, command and stroke messages are broadcast over SOAP to a centralized server.

wiSim is an environment for distributed simulations. It allows groups of students to work and collaborate on a simulation using their mobile devices (PDAs or mobile phones).

An ordinary simulation executed on a computer is generally controlled by a single user who enters the data (inputs) and gets the results (outputs).

Instead of keeping all controls and results in one place, wiSim spreads these elements across a group of participants. In this way, users have to negotiate verbally how they interact with the simulation, thus striving for an optimal collaborative effort in their learning experience.

Every participant can interact in wiSim using a mobile device. Initially, the user can choose a specific group to join or create his/her own for others to join. Afterwards, the group can start working on a simulation taken from a list available on the server. Subsequently, the server divides the inputs and outputs of the simulation among the group members. The next step requires the group to enter the parameters necessary to initialise the simulation, which is executed on the server. The results are then dispatched to the users’ mobile devices, allowing them to discuss the extra tweaking required to solve the assignment.

The simulation is created a priori, normally by a teacher. The author of the simulation decides the details of the interaction, namely how many participants take part, who is going to receive what, the working principle of the simulation and the format of the outputs.
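The step in which the server divides a simulation's inputs and outputs among group members can be sketched as follows. This is an illustration only, not wiSim's implementation: the function name and data layout are hypothetical, and the round-robin policy is just a placeholder, since in wiSim the simulation author decides who receives what.

```python
from typing import Dict, List


def split_among_members(
    members: List[str],
    inputs: List[str],
    outputs: List[str],
) -> Dict[str, Dict[str, List[str]]]:
    """Assign each input and output to exactly one member, round-robin.

    Hypothetical placeholder policy; the real assignment is defined by
    the author of the simulation.
    """
    if not members:
        raise ValueError("a group needs at least one member")

    assignment: Dict[str, Dict[str, List[str]]] = {
        member: {"inputs": [], "outputs": []} for member in members
    }
    for index, name in enumerate(inputs):
        assignment[members[index % len(members)]]["inputs"].append(name)
    for index, name in enumerate(outputs):
        assignment[members[index % len(members)]]["outputs"].append(name)
    return assignment
```

Because no single member holds every control and every result, the group has to talk to each other to decide which parameter to change next, which is the negotiation the design is meant to encourage.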