JUSThink

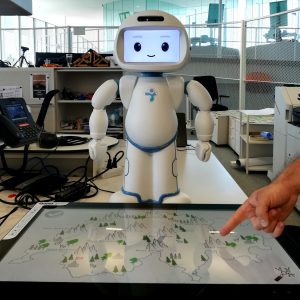

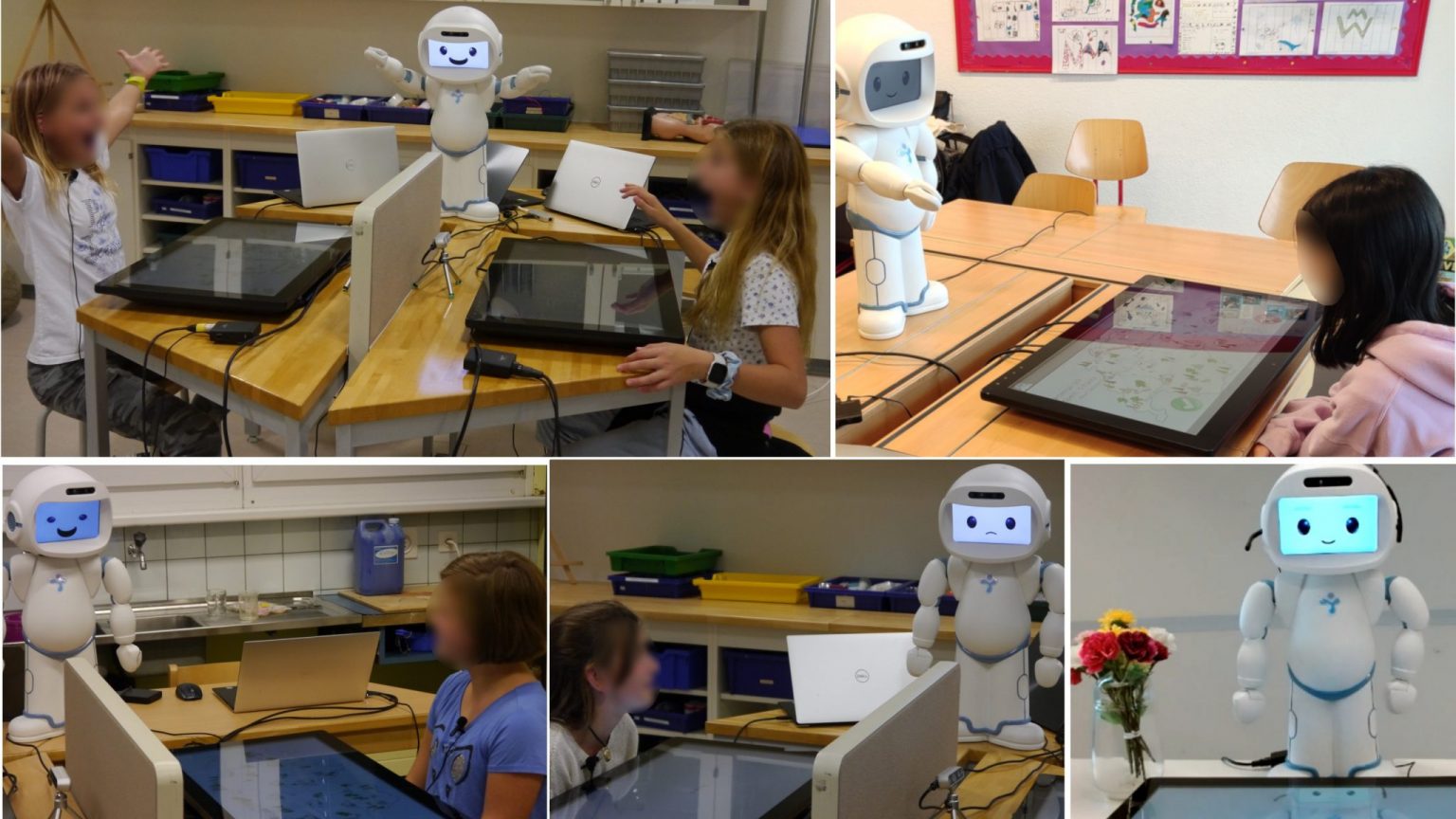

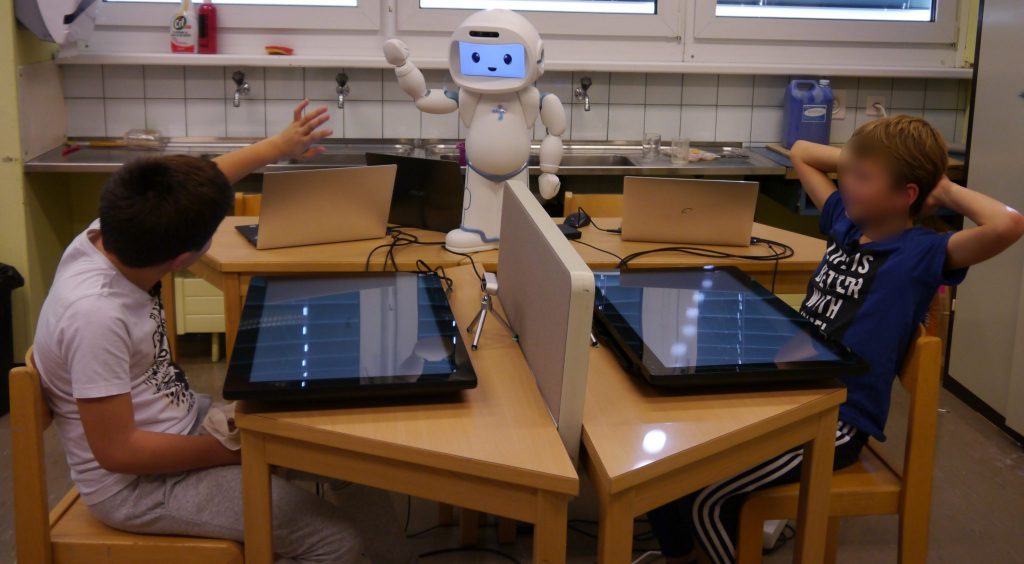

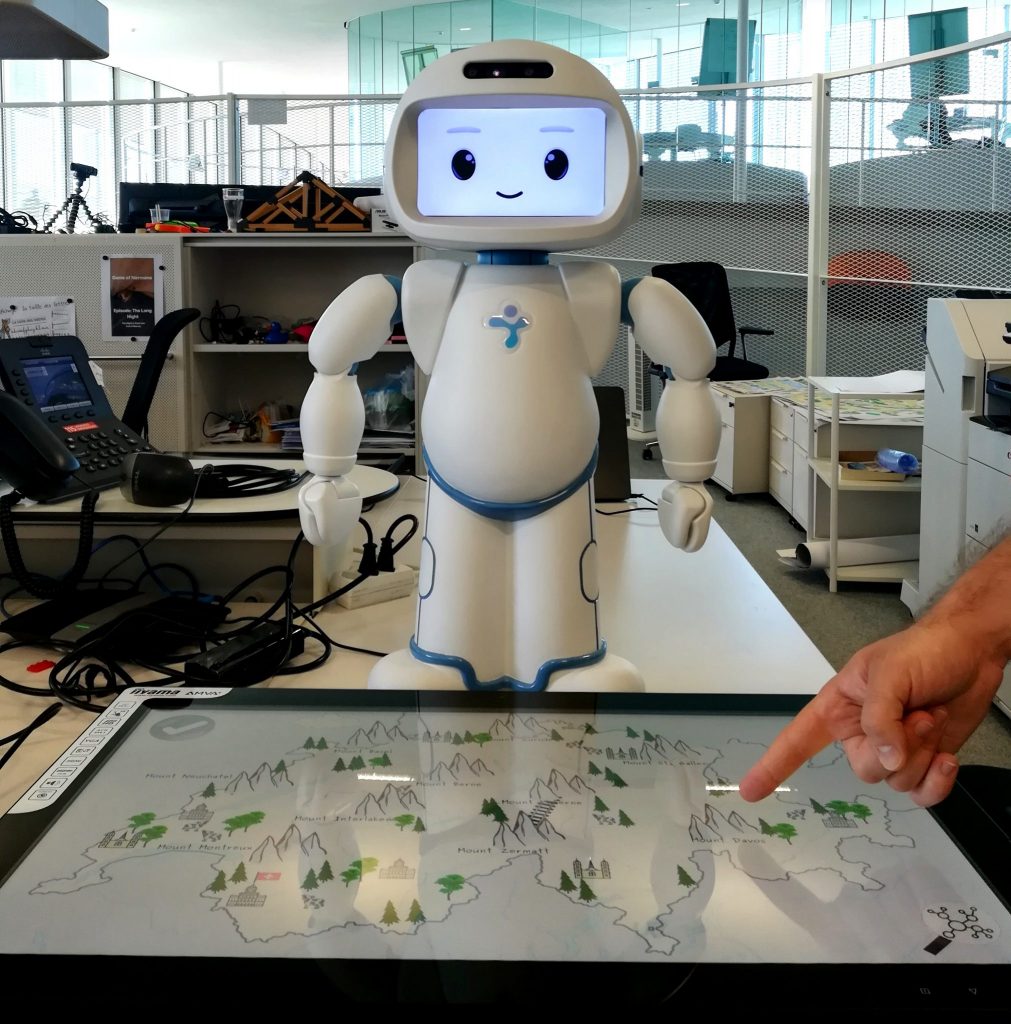

The JUSThink project aims to improve children’s computational thinking skills by exercising algorithmic reasoning with and through graphs, where graphs are posed as a way to represent, reason with, and solve a problem. It seeks to foster children’s understanding of abstract graphs through a collaborative problem-solving task mediated by a humanoid robot (QTrobot).

Our goal is to build intelligent, autonomous social robots that promote children’s learning by assisting teachers through complementary activities. To that end, we equip the robot with:

- the ability to recognize student engagement behaviors that are conducive to learning and to provide suggestions accordingly: led by Jauwairia Nasir

- mutual understanding skills so that the robot can “put itself in the child’s shoes”: led by Utku Norman

This project has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No 765955 (the ANIMATAS Project).

- Nasir, Jauwairia, Bruno, Barbara, & Dillenbourg, Pierre. (2021). PE-HRI-temporal: A Multimodal Temporal Dataset in a robot mediated Collaborative Educational Setting [Data set]. Zenodo. https://doi.org/10.5281/zenodo.5576058

- Nasir, Jauwairia, Norman, Utku, Bruno, Barbara, Chetouani, Mohamed, & Dillenbourg, Pierre. (2021). PE-HRI: A Multimodal Dataset for the study of Productive Engagement in a robot mediated Collaborative Educational Setting [Data set]. Zenodo. https://doi.org/10.5281/zenodo.4633092

- Norman, Utku, Dinkar, Tanvi, Nasir, Jauwairia, Bruno, Barbara, Clavel, Chloé, & Dillenbourg, Pierre. (2021). JUSThink Dialogue and Actions Corpus (v1.0.0) [Data set]. Zenodo. https://doi.org/10.5281/zenodo.4627104

- Python Package for JUSThink Human-Robot Activities. (2022). GitHub. Retrieved from https://github.com/utku-norman/justhink_world/

- ROS Wrappers for JUSThink Human-Robot Pedagogical Scenario. (2022). GitHub. Retrieved from https://github.com/utku-norman/justhink-ros

- JUSThink Pre-experiment Analysis in the IEEE RO-MAN 2022 Paper. (2022). GitHub. Retrieved from https://github.com/utku-norman/justhink-preexp-analysis

- Norman, Utku, Dinkar, Tanvi, Bruno, Barbara, & Clavel, Chloé. (2021). JUSThink Alignment Analysis in the Dialogue & Discourse Paper (v1.1.0). Zenodo. https://doi.org/10.5281/zenodo.4675070

Publications

2023

An HMM-based Real-time Intervention Methodology for a Social Robot Supporting Learning

2023-01-01. 32nd IEEE International Conference on Robot and Human Interactive Communication (RO-MAN), Busan, South Korea, Aug 28-31, 2023. p. 2204-2211. DOI : 10.1109/RO-MAN57019.2023.10309430.

Robots for Learning 7 (R4L): A Look from Stakeholders’ Perspective

2023-01-01. 18th Annual ACM/IEEE International Conference on Human-Robot Interaction (HRI), Stockholm, Sweden, Mar 13-16, 2023. p. 935-937. DOI : 10.1145/3568294.3579958.

Computational Models of Mutual Understanding for Human-Robot Collaborative Learning

Lausanne, EPFL, 2023.

2022

Many are the ways to learn: Identifying multi-modal behavioral profiles of collaborative learning in constructivist activities (vol 16, pg 485, 2021)

International Journal of Computer-Supported Collaborative Learning. 2022-05-12. DOI : 10.1007/s11412-022-09368-8.

A Case for the Design of Attention and Gesture Systems for Social Robots

2022-01-01. 14th International Conference on Social Robotics (ICSR) – Social Robots for Assisted Living and Healthcare, Emphasizing on the Increasing Importance of Social Robotics in Human Daily Living and Society, Florence, Italy, Dec 13-16, 2022. p. 367-377. DOI : 10.1007/978-3-031-24667-8_33.

Introducing Productive Engagement for Social Robots Supporting Learning

Lausanne, EPFL, 2022.

Personalized Productive Engagement Recognition in Robot-Mediated Collaborative Learning

2022. 24th ACM International Conference on Multimodal Interaction (ICMI), Bangalore, India, November 7-11, 2022. p. 632-641.

Temporal Pathways to Learning: How Learning Emerges in an Open-ended Collaborative Activity

Computers & Education: Artificial Intelligence. 2022. Vol. 3, p. 100093. DOI : 10.1016/j.caeai.2022.100093.

Efficacy of a ‘Misconceiving’ Robot to Improve Computational Thinking in a Collaborative Problem Solving Activity: A Pilot Study

2022. 31st IEEE International Conference on Robot & Human Interactive Communication (RO-MAN), Naples, Italy, August 29 – September 2, 2022. DOI : 10.1109/RO-MAN53752.2022.9900775.

Studying Alignment in a Collaborative Learning Activity via Automatic Methods: The Link Between What We Say and Do

Dialogue & Discourse. 2022-08-06. Vol. 13, num. 2, p. 1-48. DOI : 10.5210/dad.2022.201.

What if Social Robots look for Productive Engagement?

International Journal of Social Robotics. 2022. Vol. 14, p. 55–71. DOI : 10.1007/s12369-021-00766-w.

2021

Mutual Modelling Ability for a Humanoid Robot: How can it improve my learning as we solve a problem together?

2021-03-12. Robots for Learning Workshop in 16th annual IEEE/ACM Conference on Human-Robot Interaction (HRI 2021), Virtual Conference, March 9-11, 2021.

Many Are The Ways to Learn: Identifying multi-modal behavioral profiles of collaborative learning in constructivist activities

International Journal of Computer-Supported Collaborative Learning. 2021. Vol. 16, p. 485–523. DOI : 10.1007/s11412-021-09358-2.

A Social Robot That Looks For Productive Engagement

2021. Robots for Learning workshop at 16th annual ACM/IEEE International Conference on Human-Robot Interaction, Online conference, March 9-11, 2021.

2020

When Positive Perception of the Robot Has No Effect on Learning

2020-08-31. 29th IEEE International Conference on Robot and Human Interactive Communication (IEEE RO-MAN), Virtual Conference, Aug 31 – Sept 4, 2020. p. 313-320. DOI : 10.1109/RO-MAN47096.2020.9223343.

Is There ‘ONE way’ of Learning? A Data-driven Approach

2020. 22nd ACM International Conference on Multimodal Interaction, Virtual event, Netherlands, October 25-29, 2020. p. 388–391. DOI : 10.1145/3395035.3425200.

You Tell, I Do, and We Swap until we Connect All the Gold Mines!

ERCIM News. 2020-01-01. Vol. 2020, num. 120, p. 22-23.