For an updated list of semester projects, please follow this link.

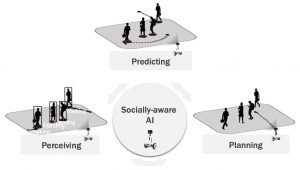

Our VITA laboratory is interested in democratizing robots that will co-exist with humans in a safe, efficient, trustworthy, and socially-aware way. Self-driving cars, delivery robots, or social robots are examples of such robots. To realize this future, we propose empowering robots with a type of cognition we call socially-aware AI, i.e., robots that can not only perceive human behavior but reason with social intelligence – the ability to make safe and consistent decisions in unconstrained crowded social scenes.

Our research tackles the three pillars of a socially-aware AI system tailored for transportation applications: perceiving, predicting, and planning.

Ongoing projects

Open-Ended Video Generation

We propose Stable Video Infinity, which generates videos of any length with high temporal consistency, plausible scene transitions, and controllable streaming storylines in any domain.

Ground-to-Aerial Cross-View Localization

We propose a fine-grained cross-view localization method that localizes a ground-level image on publicly available aerial imagery by matching local features between the two views.

End-to-End Planning

We propose a lightweight 3D rasterization method for scalable novel-view synthesis and bridge the sim-to-real gap with feature-space alignment.

3D Semantic Occupancy Prediction

We reconstruct the 3D geometry and semantics of the surrounding environment as voxel grids from camera or LiDAR input.
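As a rough illustration of the output representation only (not the prediction model itself), the sketch below builds a sparse semantic voxel grid from labeled 3D points. The function name `voxelize`, the 0.5 m voxel size, the majority-vote rule, and the example labels are all assumptions made for this example.

```python
import math
from collections import Counter, defaultdict

def voxelize(points, voxel_size=0.5):
    """Map labeled 3D points (x, y, z, label) to a sparse semantic voxel grid.

    Returns a dict from integer voxel index (i, j, k) to the majority label
    of the points inside that voxel. Voxels missing from the dict contain no
    points. All names and defaults here are hypothetical.
    """
    votes = defaultdict(Counter)
    for x, y, z, label in points:
        index = (
            math.floor(x / voxel_size),
            math.floor(y / voxel_size),
            math.floor(z / voxel_size),
        )
        votes[index][label] += 1
    # Keep the most frequent label per occupied voxel.
    return {index: counts.most_common(1)[0][0] for index, counts in votes.items()}

# Toy input: three points share one voxel, two fall into other voxels.
example_points = [
    (0.1, 0.1, 0.1, "road"),
    (0.2, 0.3, 0.4, "road"),
    (0.4, 0.4, 0.4, "car"),
    (1.2, 0.0, 0.0, "car"),
    (-0.1, 0.0, 0.0, "tree"),
]
grid = voxelize(example_points)
```

A learned occupancy model would predict such a grid densely from images or LiDAR sweeps; here the grid is simply filled in from points whose labels are already known.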

World Model for Controllable Generation

We propose a controllable world model that generates future frames while controlling ego motion, scene object motion, and human motion.

Multimodal Human Pose Forecasting

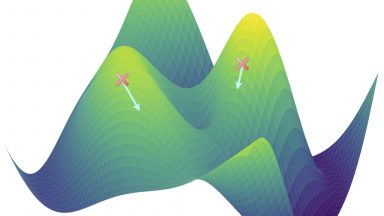

Introducing MotionMap, a heatmap-based approach for multimodal human pose forecasting that provides uncertainty estimation, controllability, and confidence measures while effectively capturing diverse and rare motion predictions.

HEADS-UP: Head-Mounted Egocentric Dataset for Trajectory Prediction in Blind Assistance Systems

HEADS-UP is the first egocentric dataset collected from head-mounted cameras, designed specifically for trajectory prediction in blind assistance systems.

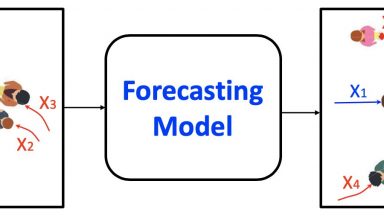

Vehicle Trajectory Prediction

We propose generalizable and accurate methods to predict vehicle trajectories for autonomous vehicles.

Robust Action Representation Learning

We propose a simple method to improve the robustness of transformer-based skeletal action recognition models against skeleton perturbations.

Heteroscedastic Regression

We address the problem of sub-optimal covariance estimation in deep heteroscedastic regression by proposing a new model and metric.
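To make the setting concrete, the sketch below evaluates the Gaussian negative log-likelihood that is commonly used to train heteroscedastic regressors, where the model predicts both a mean and an input-dependent variance. It is a generic univariate illustration, not the model or metric proposed in this project; the function name `gaussian_nll` and the example values are assumptions.

```python
import math

def gaussian_nll(y, mean, var):
    """Negative log-likelihood of y under a Gaussian N(mean, var), var > 0."""
    if var <= 0:
        raise ValueError("variance must be positive")
    return 0.5 * (math.log(2 * math.pi * var) + (y - mean) ** 2 / var)

# Same prediction error, three different predicted variances.
target, predicted_mean = 2.0, 0.0
losses = {var: gaussian_nll(target, predicted_mean, var) for var in (0.1, 4.0, 100.0)}
```

For a fixed residual, this loss is smallest when the predicted variance equals the squared residual (here 4.0), so both over-confident and under-confident variance estimates are penalized.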

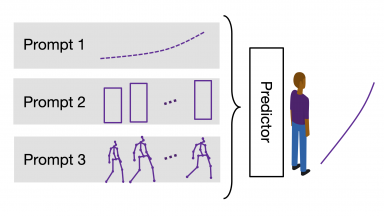

Social-Transmotion

We translate the idea of a prompt from Natural Language Processing (NLP) to the task of human trajectory prediction, where a prompt can be a sequence of x-y coordinates on the ground, bounding boxes or body poses.

Human Pose Forecasting

First, we develop an open-source library for human pose forecasting. Next, we model the uncertainty in the task, and then propose a denoising diffusion model to handle noisy inputs.

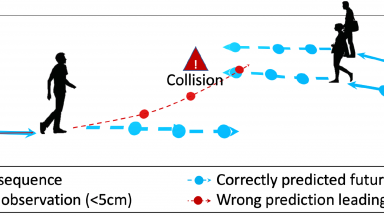

Robust Trajectory Prediction

How robust are trajectory prediction models? We study this question from different perspectives for both vehicle and pedestrian trajectory prediction.

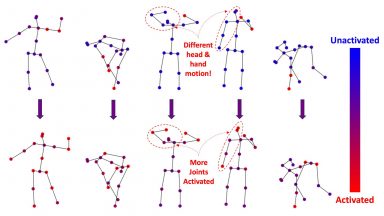

WholeBody Human Pose Estimation: Keypoint Communities

We propose a principled way to quantify the independence of semantic keypoints. By training a pose estimation algorithm with a weighted objective, we are able to predict fine-grained human and car poses.

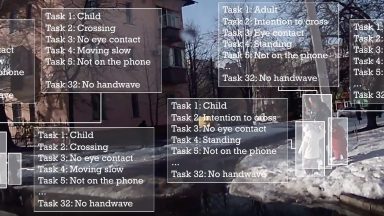

Detecting 32 Pedestrian Attributes for Autonomous Vehicles

Joint pedestrian detection and attribute recognition with fields and Multi-Task Learning.

Social-NCE

Contrastive learning with negative data augmentation for robust motion representations.
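For readers unfamiliar with contrastive objectives, the sketch below computes a generic InfoNCE loss for one query embedding, one positive, and a set of negatives. It is not the project's implementation: the function name `info_nce`, the dot-product similarity, the temperature value, and the toy 2D embeddings are assumptions, and the project's specific way of constructing negative samples is not shown.

```python
import math

def info_nce(query, positive, negatives, temperature=0.1):
    """InfoNCE loss for one query given one positive and several negatives.

    Embeddings are plain lists of floats; similarity is a dot product.
    """
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    # The positive is placed first, followed by all negatives.
    logits = [dot(query, positive) / temperature]
    logits += [dot(query, negative) / temperature for negative in negatives]

    # Numerically stable log-sum-exp over all candidates.
    max_logit = max(logits)
    log_sum_exp = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    return log_sum_exp - logits[0]

# The loss is low when the query is close to the positive and far from negatives.
aligned = info_nce([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0], [-1.0, 0.0]])
misaligned = info_nce([1.0, 0.0], [-1.0, 0.0], [[1.0, 0.0]])
```

Minimizing this loss pulls the query toward its positive and pushes it away from the negatives, which is why the choice of negatives matters for the learned representation.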

2D Perception: Human Pose Estimation

Multi-person human pose estimation that is particularly well suited for urban mobility such as self-driving cars and delivery robots.

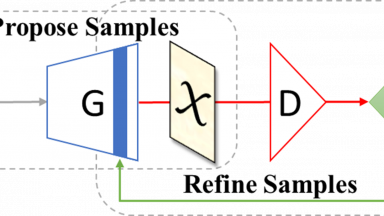

Collaborative Sampling in GANs

We propose a collaborative sampling scheme between the generator and discriminator for improved data generation. Guided by the discriminator, our approach refines generated samples through gradient-based optimization in the data (or feature / latent) space, shifting the generator distribution closer to the real data distribution.
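The refinement idea can be sketched in one dimension. The toy example below assumes a fixed logistic discriminator D(x) = sigmoid(w * x + b) and moves a generated sample uphill on log D(x) for a fixed number of gradient steps. The names `refine_sample`, `step_size`, and `num_steps`, the logistic form of the discriminator, and the fixed step count are assumptions for illustration; the actual method operates on learned networks and may use a different objective and stopping rule.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def refine_sample(x, w, b, step_size=0.5, num_steps=10):
    """Refine a scalar sample x by gradient ascent on log D(x).

    D(x) = sigmoid(w * x + b) is a toy, hand-specified discriminator.
    """
    for _ in range(num_steps):
        d = sigmoid(w * x + b)
        grad = w * (1.0 - d)  # derivative of log(sigmoid(w * x + b)) w.r.t. x
        x += step_size * grad
    return x

# A sample the discriminator considers unlikely to be real is nudged
# toward the region it scores as more realistic.
w, b = 1.0, 0.0
generated = -2.0
refined = refine_sample(generated, w, b)
```

Each step increases the discriminator's score for the sample, which is the sense in which the discriminator guides the generator's outputs toward the real data distribution in this sketch.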

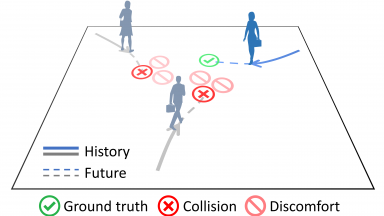

Planning: Crowd-Robot Interaction

Navigating effectively and in a socially compliant manner is an essential yet challenging task for robots operating in crowded spaces. In this project, we want to go beyond first-order human-robot interaction and model Crowd-Robot Interaction (CRI) more explicitly. More details here.

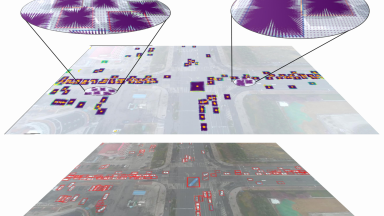

2D Perception: Traffic Perception

Adapting fields for detection from aerial images.

3D Human Detection

We have been exploring how to detect humans in 3D space using only cameras, which are cheap, reliable, and ubiquitous. Our major applications are autonomous vehicles and delivery robots. We focus on challenging cases (the long tail) and uncertainty estimation to improve the reliability of autonomous systems.

Socially-Aware Human Trajectory Forecasting

We present an in-depth analysis of existing deep-learning-based methods for modelling social interactions in crowds. To objectively compare the performance of these interaction-based forecasting models, we develop TrajNet++, a large-scale interaction-centric benchmark that has been a significant yet missing component in the field of human trajectory forecasting.

Discrete Choice Models and Neural Networks

We introduce a new framework that integrates representation learning into discrete choice models (DCM). We preserve the high interpretability that comes from DCM's strong theoretical foundation, while improving the model's predictive performance through data-driven methods such as neural networks.

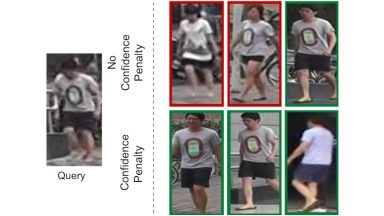

2D Perception: Visual Re-Identification

Improved visual re-identification that accounts for the uncertainty of the model.

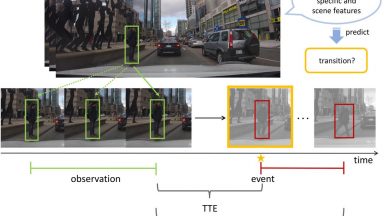

Pedestrian Stop and Go

Predicting whether pedestrians will stop walking (Stop) or start to walk (Go) in the near future, for better trajectory prediction around road traffic.

Skeleton-based Action Recognition

Recognizing the actions performed by humans from their skeletons.

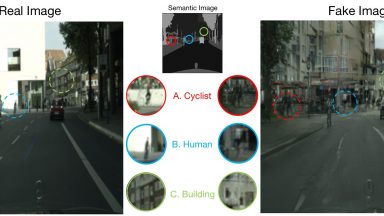

Semantically-aware Discriminators

We build on successful cGAN models to propose a new semantically-aware discriminator that better guides the generator. We aim to learn a shared latent representation that encodes enough information to jointly perform semantic segmentation and content reconstruction, along with coarse-to-fine-grained adversarial reasoning.

Pedestrian Bounding Box Prediction

A library for predicting 2D and 3D bounding boxes of humans in autonomous driving scenarios.