While Physics-Based Simulation (PBS) can accurately drape a 3D garment model on a 3D body, it remains too costly for real-time applications such as virtual try-on. By contrast, inference in a deep network requires only a single forward pass and is much faster.

In this collaborative project with Fision AG, we leverage this property and propose a novel architecture to fit a 3D garment template to a 3D body. Specifically, we build upon recent progress in 3D point-cloud processing with deep networks to extract garment features at varying levels of detail, including point-wise, patch-wise and global features. We fuse these features with those extracted in parallel from the 3D body, so as to model the cloth-body interactions. The resulting two-stream architecture, which we call GarNet, is trained using a loss function inspired by physics-based modeling. It delivers visually plausible garment shapes whose 3D points are, on average, less than 1 cm away from those of a PBS method, while running 100 times faster. Moreover, the proposed method can model various garment types with different cutting patterns when the parameters of those patterns are given as input to the network.

Method

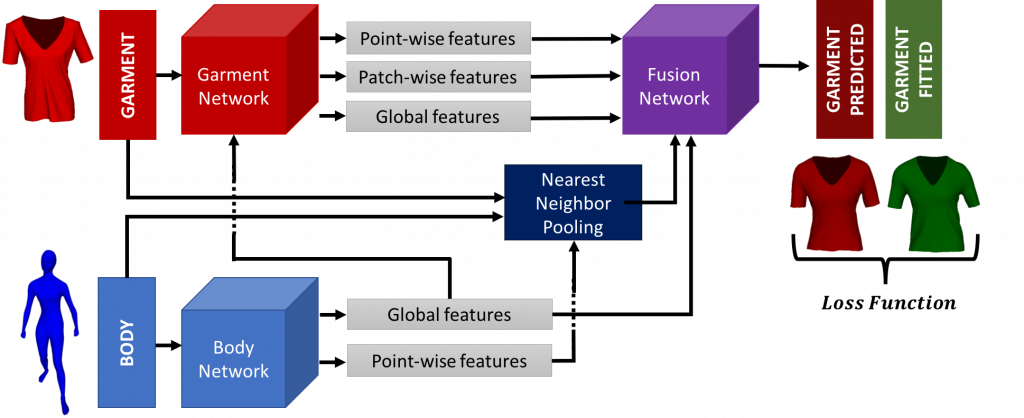

Our model takes the target body and the template garment as input. It consists of a Garment stream and a Body stream. The Body stream uses an architecture similar to PointNet to extract local and global body features. The Garment stream exploits the global body features to compute point-wise, patch-wise and global features for the template garment mesh. These features, along with the global body ones, are then fed to a fusion subnetwork to predict the shape of the fitted garment. To further increase the fitting accuracy, we introduce an auxiliary stream that computes separate local body features for each garment vertex.
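The data flow described above can be illustrated with a minimal NumPy sketch. It is not the released GarNet code: the layer sizes, the random untrained weights, the helper names (`init_mlp`, `mlp`, `body_stream`, `garment_stream`, `drape`) and the choice to predict per-vertex offsets from the template are all assumptions made for illustration. The patch-wise features and the auxiliary local-body stream are omitted for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_mlp(sizes):
    """Random, untrained weights for consecutive layer sizes (illustrative only)."""
    return [(rng.normal(0.0, 0.1, (i, o)), np.zeros(o))
            for i, o in zip(sizes[:-1], sizes[1:])]

def mlp(x, layers):
    """Shared per-point MLP: the same weights are applied to every row of x."""
    for w, b in layers:
        x = np.maximum(x @ w + b, 0.0)  # linear layer followed by ReLU
    return x

def body_stream(body_pts, layers):
    """PointNet-like body stream: per-point features plus a max-pooled global one."""
    local = mlp(body_pts, layers)        # (Nb, F) local body features
    return local, local.max(axis=0)      # max pooling ignores point order

def garment_stream(garment_pts, body_global, layers):
    """Garment stream: every garment point also sees the global body feature."""
    n = garment_pts.shape[0]
    x = np.concatenate([garment_pts, np.tile(body_global, (n, 1))], axis=1)
    pointwise = mlp(x, layers)           # (Ng, F) point-wise garment features
    return pointwise, pointwise.max(axis=0)

def drape(body_pts, garment_pts, params):
    """Fuse garment and body features and predict fitted garment vertices."""
    _, body_global = body_stream(body_pts, params["body"])
    pointwise, garment_global = garment_stream(
        garment_pts, body_global, params["garment"])
    n = garment_pts.shape[0]
    fused = np.concatenate(
        [pointwise,
         np.tile(garment_global, (n, 1)),
         np.tile(body_global, (n, 1))], axis=1)
    hidden = mlp(fused, params["fusion"])
    w, b = params["out"]
    # Assumption: the network outputs an offset added to each template vertex.
    return garment_pts + hidden @ w + b

# Hypothetical layer sizes chosen only so that the shapes line up.
PARAMS = {
    "body": init_mlp([3, 16, 32]),
    "garment": init_mlp([3 + 32, 32, 32]),
    "fusion": init_mlp([32 + 32 + 32, 32]),
    "out": init_mlp([32, 3])[0],
}
```

Calling `drape(body_pts, garment_pts, PARAMS)` with an `(Nb, 3)` body point array and an `(Ng, 3)` garment template returns an `(Ng, 3)` array, one predicted position per garment vertex. Because the global features come from max pooling, reordering the body points leaves the global body feature unchanged.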

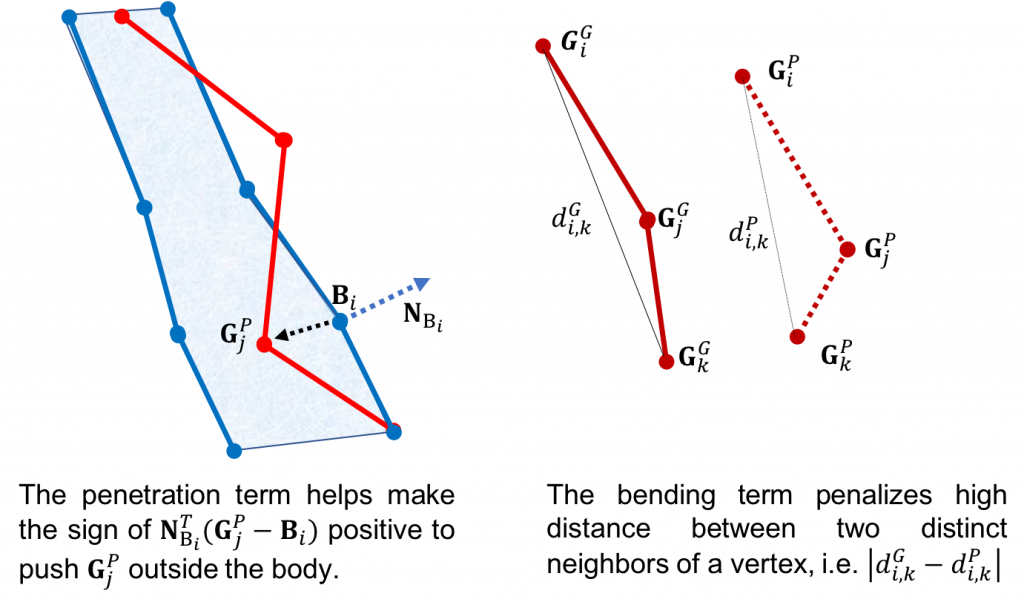

We incorporate appropriate loss terms for physical constraints (e.g. skin-cloth penetration, bending) in the objective function we minimize during training. Hence, at test time, we avoid the need for extra post-processing steps that PBS tools might require.
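As an illustration of what a skin-cloth penetration term can look like, here is a minimal NumPy sketch. The exact formulation used for GarNet may differ: the nearest-vertex lookup, the use of body vertex normals, the `margin` parameter and the function name `penetration_loss` are assumptions made for this example.

```python
import numpy as np

def penetration_loss(garment_pts, body_pts, body_normals, margin=0.0):
    """Penalize garment vertices that lie behind the body surface.

    For each garment vertex, take the nearest body vertex and project the
    offset between them onto that body vertex's outward unit normal. A
    projection below `margin` means the cloth is inside (or too close to)
    the body and contributes a positive penalty.
    """
    # Pairwise squared distances, shape (Ng, Nb).
    diff = garment_pts[:, None, :] - body_pts[None, :, :]
    d2 = (diff ** 2).sum(axis=2)
    nearest = d2.argmin(axis=1)                       # index of closest body vertex
    offset = garment_pts - body_pts[nearest]          # (Ng, 3)
    signed = (offset * body_normals[nearest]).sum(axis=1)  # signed distance along normal
    return np.maximum(margin - signed, 0.0).mean()    # hinge: zero when outside
```

With a body sampled on a unit sphere (where the outward normals equal the points themselves), a garment placed slightly outside the sphere incurs no penalty, while one placed slightly inside is penalized in proportion to its depth. A term of this kind is differentiable almost everywhere, so it can be added to the training objective alongside the data term.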

Result Videos

Prediction Results

The following videos show our results and the ground truth side by side.

T-Shirt

Jeans

Sweater

Architecture Comparison

The following video shows GarNet-Naive, GarNet-Local, GarNet-Global and the ground truth side by side.

Ablation Study

The following video shows our results with the penetration loss turned off.

Links

To reduce the size of the compressed file, only the necessary files and results have been included. To regenerate the data used in the paper, please read the README.txt file inside the code folder. The regenerated data can take 500 GB or more of disk space.

Citation

Please cite:

@inproceedings{gundogdu19garnet,
  title = {{GarNet}: A Two-Stream Network for Fast and Accurate 3D Cloth Draping},
  author = {Gundogdu, Erhan and Constantin, Victor and Seifoddini, Amrollah and Dang, Minh and Salzmann, Mathieu and Fua, Pascal},
  booktitle = {{IEEE} International Conference on Computer Vision ({ICCV})},
  month = {oct},
  organization = {{IEEE}},
  year = {2019},
}