Description

Different visual tasks are often strongly correlated. For instance, knowing the surface normals simplifies estimating the depth of an image, and knowing the segmentation can help detect objects. This intuition suggests that visual tasks share an underlying structure. Extracting this structure would let us reuse supervision across related tasks or solve many tasks in one system without piling up complexity.

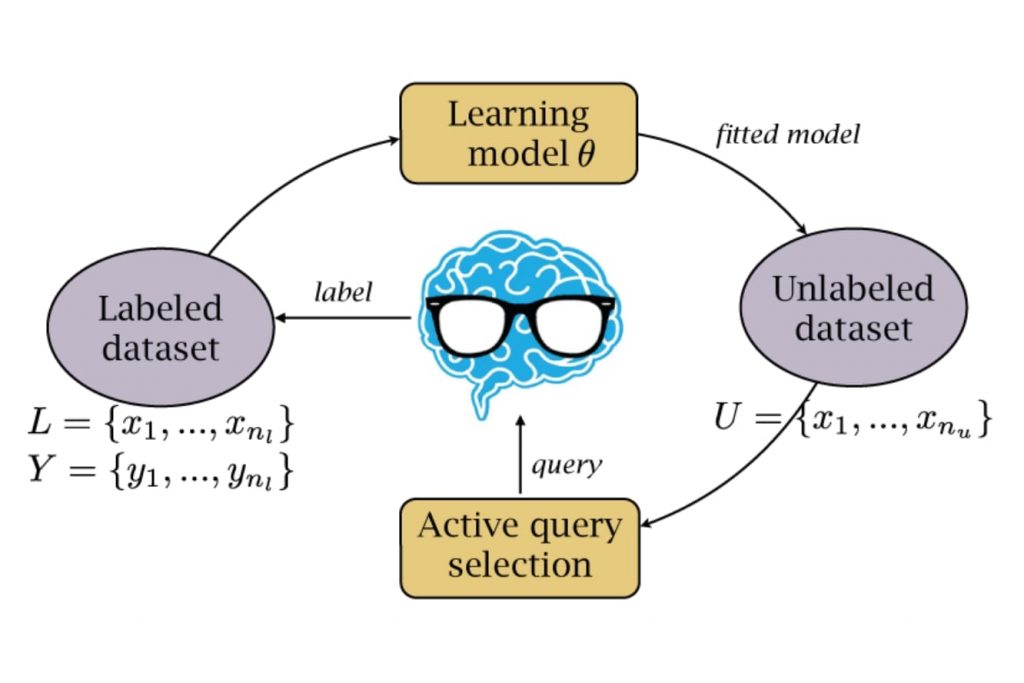

Active Learning (AL) approaches, meanwhile, are a well-known and effective way to reduce the labeling burden, and one of their key ingredients is uncertainty estimation. We argue that combining multi-task relations in the visual domain with uncertainty estimation techniques would allow us to converge better and faster than common AL methods.
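To make the role of uncertainty estimation in AL concrete, here is a minimal sketch of one acquisition step. It assumes an ensemble of models (in the spirit of [4]) whose disagreement serves as the uncertainty score; the function names `ensemble_uncertainty` and `select_for_labeling` and the toy predictions are hypothetical and only illustrate the idea, not the method this project will develop.

```python
from statistics import pvariance


def ensemble_uncertainty(member_predictions):
    """Score each unlabeled sample by ensemble disagreement.

    member_predictions[m][i] is the list of outputs that ensemble member m
    predicts for sample i (hypothetical layout, chosen for illustration).
    The score is the variance across members, averaged over output dimensions.
    """
    n_samples = len(member_predictions[0])
    scores = []
    for i in range(n_samples):
        # Gather every member's prediction for sample i.
        preds = [member[i] for member in member_predictions]
        # Variance across members for each output dimension.
        per_dim = [pvariance(values) for values in zip(*preds)]
        scores.append(sum(per_dim) / len(per_dim))
    return scores


def select_for_labeling(scores, budget):
    """Return the indices of the `budget` most uncertain samples."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:budget]


# Toy example: 3 ensemble members, 3 unlabeled samples, 2 outputs per sample.
predictions = [
    [[1.0, 2.0], [0.5, 0.5], [3.0, 1.0]],
    [[1.0, 2.0], [0.7, 0.3], [1.0, 3.0]],
    [[1.0, 2.0], [0.6, 0.4], [2.0, 2.0]],
]
scores = ensemble_uncertainty(predictions)
chosen = select_for_labeling(scores, budget=2)
print(chosen)  # samples the members disagree on most come first
```

In a full AL loop, the selected samples would be sent for annotation, added to the training set, and the models retrained before the next acquisition round.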

Prerequisites

The candidate should have programming experience in Python.

Previous experience with machine learning and computer vision is a plus; experience with PyTorch is required.

The main requirements are curiosity to learn new things and a willingness to overcome difficulties.

Contact

If you are interested in this project, please send an email to Nikita Durasov.

References

[1] “Taskonomy: Disentangling Task Transfer Learning”, Zamir et al., 2018

[2] “Robustness via Cross-Domain Ensembles”, Yeo et al., 2021

[3] “Robust Learning Through Cross-Task Consistency”, Zamir et al., 2020

[4] “Simple and Scalable Predictive Uncertainty Estimation using Deep Ensembles”, Lakshminarayanan et al., 2017

[5] “Dropout as a Bayesian Approximation: Representing Model Uncertainty in Deep Learning”, Gal and Ghahramani, 2016

[6] “Masksembles for Uncertainty Estimation”, Durasov et al., 2021