An eye-tracking experiment is conducted in an immersive virtual reality environment with 6 degrees of freedom, using a head-mounted display [1]. The users interact with 3D point cloud models following a task-dependent protocol, while their gaze and head trajectories are recorded.

On this webpage, we make publicly available a dataset consisting of the tracked behavioural data, post-processing results, saliency maps in the form of importance weights, a redistributed subset of the contents, scripts to generate the exact versions of the point clouds that were used in the study, and usage examples.

Virtual reality scene

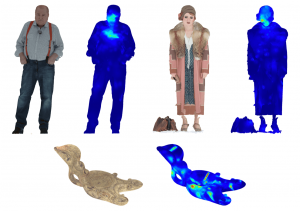

Saliency maps for sample models used in the experiment

*Credits to the original creators, license attributions, associated copyrights, and modifications are listed for each redistributed model in a README file that comes with the dataset. For the models that are not redistributed, source links and scripts are provided for reproducibility.

Download

The ViAtPCVR dataset can be downloaded from the following FTP server using a dedicated FTP client, such as FileZilla or FireFTP (we recommend FileZilla):

Protocol: FTP

Host (FTP server): tremplin.epfl.ch

Username: [email protected]

Password: ohsh9jah4T

FTP port: 21

After connecting, select the ViAtPCVR folder on the remote site and download the relevant material.

The total size of the dataset is ~700 MB.
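If you prefer a script to a graphical client, the download can also be automated with Python's standard `ftplib` module. The following is a minimal sketch under stated assumptions, not a tool distributed with the dataset: it mirrors the ViAtPCVR folder recursively, treats any remote entry it can change into as a directory (a common FTP heuristic), and reads the username from a hypothetical `VIATPCVR_FTP_USER` environment variable, so the username listed above is supplied at run time.

```python
import os
from ftplib import FTP, error_perm

# Connection settings copied from the Download section above.
HOST = "tremplin.epfl.ch"
PORT = 21
PASSWORD = "ohsh9jah4T"
REMOTE_ROOT = "ViAtPCVR"


def is_directory(ftp, name):
    """Heuristic: a remote entry is a directory if we can CWD into it."""
    current = ftp.pwd()
    try:
        ftp.cwd(name)
    except error_perm:
        return False
    ftp.cwd(current)  # go back to where we started
    return True


def mirror(ftp, remote_dir, local_dir):
    """Recursively download `remote_dir` (one path component) into `local_dir`."""
    os.makedirs(local_dir, exist_ok=True)
    ftp.cwd(remote_dir)
    for name in ftp.nlst():
        if name in (".", ".."):
            continue
        local_path = os.path.join(local_dir, name)
        if is_directory(ftp, name):
            mirror(ftp, name, local_path)
        else:
            # Binary mode, so files are copied byte for byte.
            with open(local_path, "wb") as fh:
                ftp.retrbinary(f"RETR {name}", fh.write)
    ftp.cwd("..")  # return to the parent of remote_dir


def download(username, local_root=REMOTE_ROOT):
    """Connect on port 21, log in, and mirror the ViAtPCVR folder locally."""
    with FTP() as ftp:
        ftp.connect(HOST, PORT)
        ftp.login(username, PASSWORD)
        mirror(ftp, REMOTE_ROOT, local_root)


if __name__ == "__main__":
    # Hypothetical environment variable holding the FTP username.
    user = os.environ.get("VIATPCVR_FTP_USER")
    if user:
        download(user)
    else:
        print("Set VIATPCVR_FTP_USER to the FTP username listed above.")
```

With roughly 700 MB to transfer, the mirror may take a while; rerunning the script overwrites any files that were already downloaded.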

Please consult the README files for further information on the structure and usage of the material.

You may also read [1] for more details about the experiment and the methodologies that were defined and used.

Conditions of use

If you wish to use any of the provided material in your research, we kindly ask you to cite [1].

References

- E. Alexiou, P. Xu and T. Ebrahimi, “Towards Modelling of Visual Saliency in Point Clouds for Immersive Applications,” 2019 IEEE International Conference on Image Processing (ICIP), Taipei, Taiwan, 2019, pp. 4325-4329. doi: 10.1109/ICIP.2019.8803479