Facial Analysis Methods and Applications

We currently have five PhD students and one post-doc assistant working on projects related to face detection, tracking and analysis. Below are some of the projects we are currently working on in collaboration with various industrial and research partners. Please feel free to contact the people involved in a project if you are interested or need more information.

There is also an LTS5 spin-off company, nViso, co-founded by a former member of the group, which specializes in facial analysis and its applications.

For students: if you are interested in doing a semester or master project with us on a related topic, please check the “Student Projects” section of the group's website. You can also write directly to one of the people involved to ask whether we have an unlisted project to propose.

Ongoing Projects

Difficult Intubation Assessment from Video

People involved: Anil Yüce, Gabriel Cuendet

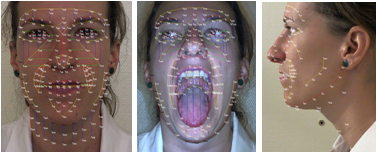

Computer vision techniques, and more specifically facial analysis and modelling, are applied to HD images and videos of patients. The recordings are made during the patients' regular pre-operative consultation, prior to their elective surgery, using two HD webcams and a Microsoft Kinect. In total, 2839 patients have participated in the project.

The face models developed at the lab and in collaboration with nViso (www.nviso.ch) are used to extract morphological features from the face, which are then used to classify patients according to their difficulty of intubation.

Eye Tracking and Gaze Estimation from 2D Sensors

People involved: Nuri Murat Arar

Applications of eye tracking and gaze estimation include:

- Gaze-controlled computer functionalities (mouse cursor positioning, page scrolling, map navigation)

- PC and gaming research

- Medical research, aids for people with disabilities (gaze-based typing), diagnosing diseases (e.g., ADHD, ASD, OHD)

- Market research

- Usability research and testing

- Human attention and cognitive state analysis

Dynamic Facial Expression Analysis for Emotion Recognition

People involved: Anil Yüce

In this work we aim to build an automatic system that detects facial action units (AUs), their intensities and their dynamic properties (such as phase information). We aim for high AU recognition accuracy by exploiting the transitions of various shape and texture features between neutral and expressive faces. The detected action units not only allow us to infer the subject's emotional state but can also reveal anomalies in expressivity.
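To make the idea of "transitions between neutral and expressive faces" and "phase information" concrete, here is a minimal sketch, not the system described above. It assumes each video frame has already been reduced to a numeric feature vector (e.g. concatenated shape and texture descriptors) and that a neutral reference frame is available. It measures how far each frame has moved from the neutral face and labels each frame as neutral, onset, apex or offset from the sign of that change. The function names and both thresholds are hypothetical.

```python
import math
from typing import List, Sequence


def deviation_from_neutral(neutral: Sequence[float], frame: Sequence[float]) -> List[float]:
    """Per-dimension change of a frame's feature vector relative to the neutral face."""
    if len(neutral) != len(frame):
        raise ValueError("feature vectors must have the same length")
    return [f - n for f, n in zip(frame, neutral)]


def magnitude(vec: Sequence[float]) -> float:
    """Euclidean norm of a feature displacement."""
    return math.sqrt(sum(v * v for v in vec))


def label_phases(
    neutral: Sequence[float],
    frames: Sequence[Sequence[float]],
    active_thresh: float = 0.1,  # hypothetical: below this the face counts as neutral
    slope_thresh: float = 0.02,  # hypothetical: minimal change to count as onset/offset
) -> List[str]:
    """Label each frame as 'neutral', 'onset', 'apex' or 'offset'."""
    mags = [magnitude(deviation_from_neutral(neutral, f)) for f in frames]
    labels = []
    for i, m in enumerate(mags):
        if m < active_thresh:
            labels.append("neutral")
            continue
        # The first frame has no predecessor, so its slope is taken as zero.
        slope = m - mags[i - 1] if i > 0 else 0.0
        if slope > slope_thresh:
            labels.append("onset")   # moving away from the neutral face
        elif slope < -slope_thresh:
            labels.append("offset")  # returning towards the neutral face
        else:
            labels.append("apex")    # expression held roughly constant
    return labels
```

For a feature track that rises from neutral, holds, and falls back, `label_phases([0.0, 0.0], [[0, 0], [0.3, 0], [0.6, 0], [0.6, 0], [0.3, 0], [0, 0]])` yields `neutral, onset, onset, apex, offset, neutral`. A real system would use learned AU detectors rather than a single global magnitude; the sketch only illustrates how displacement from a neutral reference exposes temporal phases.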

We work with the NCCR in Affective Sciences and the E3Lab at UniGe to record subjects while they are exposed to emotion-evoking stimuli, in order to identify differences between control subjects and subjects suffering from a psychopathology. We also look for representative visual cues and temporal features of facial expressions for the component process model of emotion. This model is more complex than the basic model of emotion, and the processes it includes should help us recover more information about both the subject's emotional state and the type of stimulus that caused it.

This work is part of the Swiss National Center of Competence in Research on Interactive Multimodal Information Management (IM2).

Audiovisual Speech Recognition in Cars

People involved: Marina Zimmermann

This project aims to demonstrate that visual information can significantly improve speech recognition in a realistic automotive context.

For human-machine interfaces, the face is central. Along with gestures and voice, it is recognized as a leading natural conveyor of information. The purpose of this project is to demonstrate that video images of the driver's face can significantly improve the performance of automatic speech recognition systems inside the car.

Our previous research has shown that visual information improves the performance of automatic speech recognition when the audio channel is noisy, which is common in a car (engine noise, wind noise, exterior or interior noise, etc.). This project applies these results in a real car. Using a vehicle provided by the French car manufacturer PSA Peugeot Citroën, researchers will create an audiovisual database to test and quantify the performance of audio-only and audiovisual speech recognition. These data will be incorporated into the face detection and tracking tools available through the partnership between PSA Peugeot Citroën and LTS5.

This project lasts ten months and is sponsored by PSA Peugeot Citroën. It is part of a long-term collaborative research agenda.

Emotion Detector to Improve Driving Safety

People involved: Hua Gao, Anil Yüce

This project aims to develop a non-intrusive driver monitoring system that detects the emotional state of the driver.

Monitoring the emotional state of the driver is critical for driving safety and comfort. In this project, a real-time, non-intrusive monitoring system is developed that detects the driver's emotional states by analyzing facial expressions. The system treats two basic emotions, anger and disgust, as stress-related emotions.

An emotion is detected in each individual video frame, and the decision on the stress level is made at the sequence level. Experimental results show that the system performs well on simulated data, even with generic models. An additional adaptation step could further improve the system.
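The frame-to-sequence decision described above can be sketched as follows. This assumes a per-frame classifier (not shown) that outputs one emotion label per frame. The aggregation rule is hypothetical: a sequence is flagged as stressed when the fraction of frames labelled anger or disgust reaches a threshold. A sliding-window variant shows how the same rule could run on a live stream.

```python
from collections import deque
from typing import Iterable, Iterator, Tuple

# The two emotions the project treats as stress-related.
STRESS_EMOTIONS = frozenset({"anger", "disgust"})


def sequence_stress(frame_labels: Iterable[str], threshold: float = 0.5) -> Tuple[bool, float]:
    """Decide on stress for a whole sequence from per-frame emotion labels.

    Returns (is_stressed, fraction of frames labelled with a stress emotion).
    The threshold of 0.5 is a hypothetical default.
    """
    labels = list(frame_labels)
    if not labels:
        raise ValueError("sequence is empty")
    ratio = sum(1 for lab in labels if lab in STRESS_EMOTIONS) / len(labels)
    return ratio >= threshold, ratio


def sliding_stress(frame_labels: Iterable[str], window: int, threshold: float = 0.5) -> Iterator[bool]:
    """Streaming variant: one decision per frame once the window is full."""
    buf = deque(maxlen=window)  # oldest label is dropped automatically
    for lab in frame_labels:
        buf.append(lab)
        if len(buf) == window:
            yield sequence_stress(buf, threshold)[0]
```

For example, `sequence_stress(["neutral", "anger", "anger", "disgust"])` returns `(True, 0.75)`. Deciding on a whole sequence rather than on single frames makes the output robust to isolated misclassified frames, at the cost of a delay equal to the window length.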

This one-year project is sponsored by PSA Peugeot Citroën and is part of a long-term collaborative research agenda.


Driver’s State Monitoring by Face Analysis

People involved: Hua Gao, Anil Yüce

This project aims to define and detect the relevant emotional states in order to estimate the physiological state of the driver.

Over the last decade, we have developed strong expertise in face analysis. This new project aims to estimate the emotional state of the driver through the analysis of facial expressions. The first stage will consist of defining the emotions and states relevant to the driver-car interface. The second stage will be the development of a real-time emotion detection system. The final stage will specifically address the detection of the driver's attention and distraction from facial information.

This 24-month project is sponsored by PSA Peugeot Citroën and Valeo and is part of a long-term collaborative research agenda.

Past Projects

A Fatigue Detector to Keep Eyes on the Road

People involved: Marina Zimmermann

This project is sponsored by PSA Peugeot Citroën as part of a long-term collaborative research agenda. The project aims to develop a computer vision algorithm able to estimate the fatigue level of a driver based on the degree of eyelid closure.

In the context of intelligent cars assisting the driver, fatigue detection plays an important role. Estimates suggest that up to 30% of car crashes on highways may be related to driver sleepiness. A system able to detect drowsiness and warn the driver could therefore significantly reduce accidents.

An intuitive way to measure fatigue is to use a camera to detect fatigue-related symptoms. The PERCLOS measure, the percentage of time the eyes are closed within a given time window, correlates significantly with drowsiness and is relatively easy to compute.
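Because PERCLOS is a simple ratio, it can be computed directly once each frame has an eye-openness estimate. The sketch below assumes a per-frame openness value in [0, 1] (1 = fully open) produced by some upstream eye-analysis step. It counts a frame as "closed" when openness is at most 0.2, i.e. the eyelid covers at least 80% of the eye. This follows a commonly used convention (often called P80), which may differ from the threshold chosen in this project. The window length is left to the caller.

```python
from collections import deque
from typing import Iterable, Iterator, Sequence


def perclos(openness: Sequence[float], closed_below: float = 0.2) -> float:
    """Percentage of frames in the window in which the eye counts as closed.

    closed_below=0.2 means 'at least 80% closed' (assumed P80-style convention).
    """
    if not openness:
        raise ValueError("window is empty")
    closed = sum(1 for o in openness if o <= closed_below)
    return 100.0 * closed / len(openness)


def sliding_perclos(
    stream: Iterable[float], window_frames: int, closed_below: float = 0.2
) -> Iterator[float]:
    """Yield PERCLOS over the last `window_frames` frames, once the window is full."""
    window = deque(maxlen=window_frames)  # keeps only the most recent frames
    for o in stream:
        window.append(o)
        if len(window) == window_frames:
            yield perclos(window, closed_below)
```

For instance, `perclos([1.0, 0.1, 0.9, 0.0])` gives `50.0` because two of the four frames are closed. At 30 frames per second, a one-minute window corresponds to `window_frames=1800`. The deque keeps memory constant, which matters on on-board computers with limited resources.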

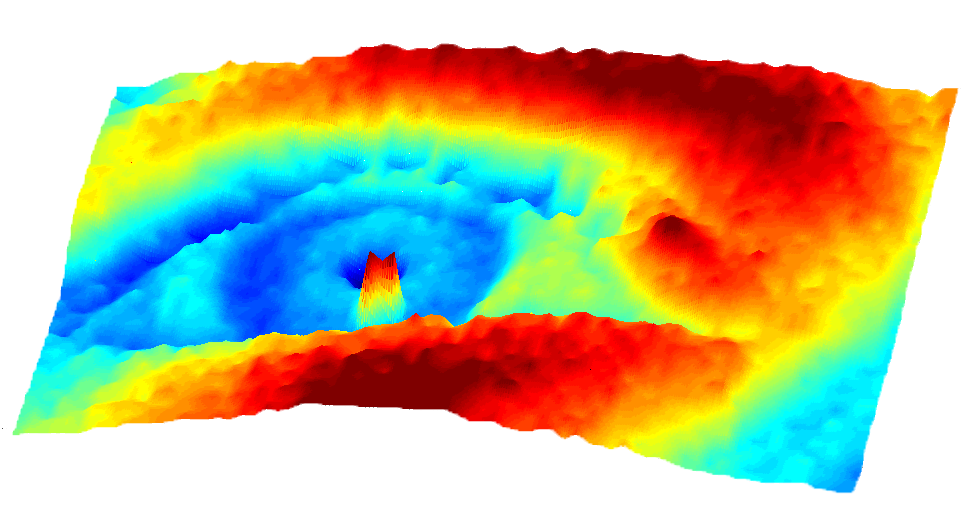

This project focuses on detecting eye closure, from which PERCLOS is then derived as a measure of fatigue. The research develops an eye analysis module based on an algorithm that is robust to lighting effects and to differences in eye morphology between drivers. A 3D profile of the eye and eyelids is then built to distinguish an open eye from a closed one. Finally, the method is optimized to run in real time on on-board vehicle computers with limited computing power.