Objective: Development of a real-time eye tracking system and methodology that provides high estimation accuracy (~1° visual angle error) and high tolerance to unconstrained conditions, e.g., large head pose and body movements, changing illumination, use of eyewear (glasses), and between-subject variations in eye type, while requiring minimal user effort and using a non-intrusive and flexible hardware setup.

Potential application scenarios for the system are the following:

- Diagnostics

- Human behaviour research (e.g. detection of an individual’s emotional state, attention, fatigue, and arousal; interpersonal interactions)

- Cognitive science (e.g. analysis of cognitive state and development, analysis of decision-making processes)

- Medical research, particularly in neuroscience (e.g. as an aid for the diagnosis of certain disorders, such as autism in children, attention deficit hyperactivity disorder (ADHD), fetal alcohol spectrum disorder (FASD), Parkinson’s disease, schizophrenia, and consciousness disorders)

- Market research

- Usability research and testing

- Human-computer interaction

- Gaze-based control (e.g. assistive input alongside a traditional computer mouse, scrolling, zooming, navigation, and typing)

- Assistive technology for people with conditions requiring rehabilitation (e.g. paralysis, spinal cord injury, repetitive strain injury, severe carpal tunnel syndrome, etc.) and motor disabilities (e.g. amyotrophic lateral sclerosis (ALS), cerebral palsy, etc.), enabling gaze-based typing and interface control

- PC and gaming research

- Augmented reality

- Virtual reality

Project Overview

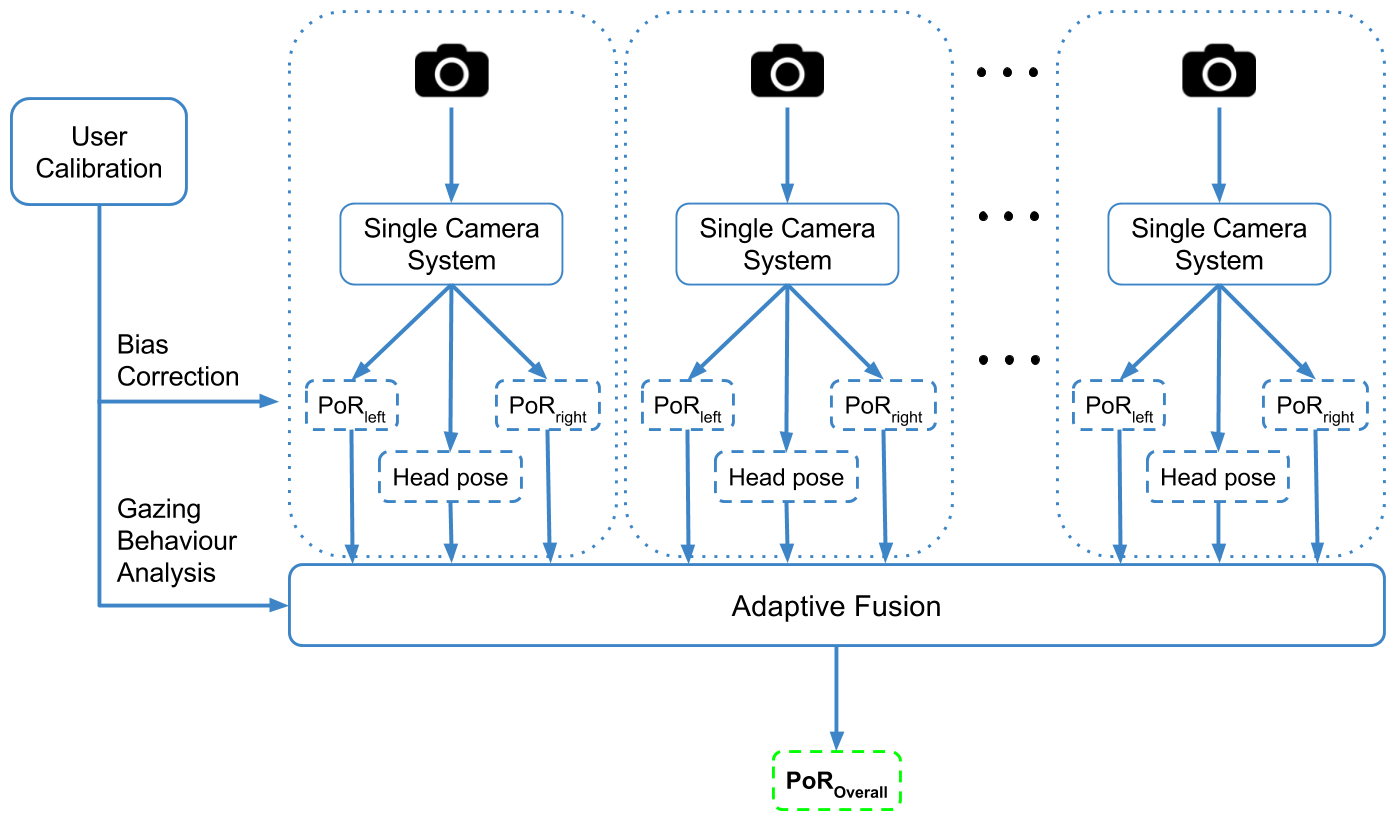

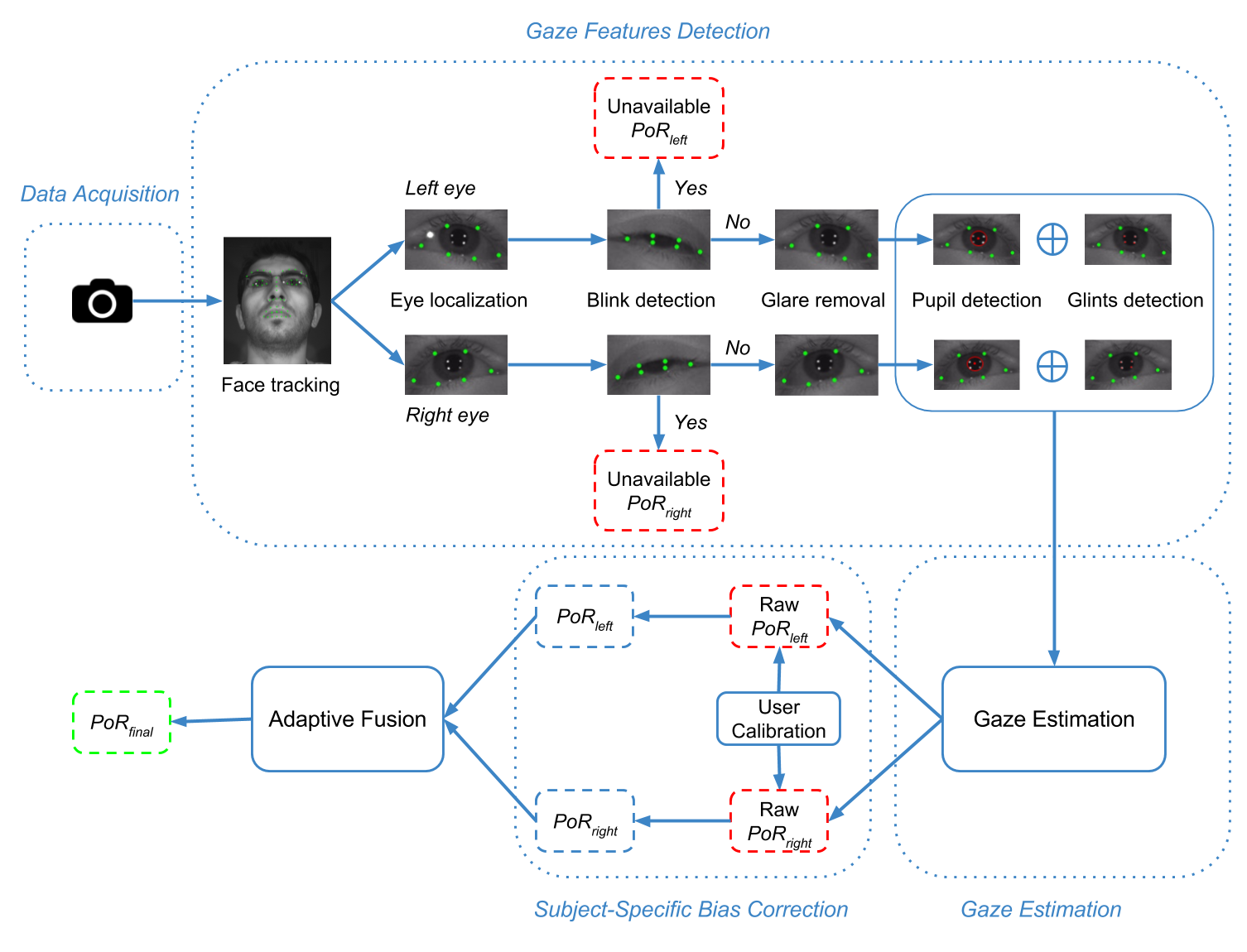

A novel real-time multi-camera, multi-view eye tracking framework has been designed and implemented to address some of the major concerns in existing eye tracking systems. The proposed framework consists of a non-intrusive, flexible, and adaptable hardware setup. Unlike conventional single-view tracking setups, the proposed setup leverages multiple eye appearances acquired simultaneously from various views. It operates accurately in real time with low-resolution data and allows for a large working volume, owing to the large combined field of view of the multiple cameras. For more details regarding the hardware setup, the developed algorithms, and the evaluations, please see our publications listed below.
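As a rough illustration of how estimates from several views might be combined, the sketch below fuses per-camera point-of-gaze estimates with a normalized weighted average and skips cameras that produced no estimate. This is a minimal sketch under stated assumptions, not the method described in the publications: the function name `fuse_gaze_estimates`, the use of 2D screen coordinates, and the per-camera reliability weights are all hypothetical.

```python
import numpy as np

def fuse_gaze_estimates(estimates, weights):
    """Fuse per-camera 2D gaze points with a normalized weighted average.

    estimates: sequence of (x, y) screen points, one per camera; a camera
               that failed to track the eye reports (nan, nan).
    weights:   hypothetical non-negative reliability score per camera.
    Returns the fused (x, y) point, or None if no camera is usable.
    """
    estimates = np.asarray(estimates, dtype=float)  # shape (n_cameras, 2)
    weights = np.asarray(weights, dtype=float)      # shape (n_cameras,)

    # Keep only cameras with a valid estimate and a positive weight.
    valid = ~np.isnan(estimates).any(axis=1) & (weights > 0)
    if not valid.any():
        return None

    # Normalize the remaining weights so they sum to one, then average.
    w = weights[valid] / weights[valid].sum()
    return w @ estimates[valid]

# Example: three cameras, the third one lost track of the eye.
fused = fuse_gaze_estimates(
    [(100.0, 200.0), (110.0, 190.0), (float("nan"), float("nan"))],
    [1.0, 1.0, 1.0],
)
```

In this sketch a camera that loses the eye (for example during a large head rotation) simply drops out of the average, which is one simple way a multi-view setup can tolerate conditions that would break a single-view tracker.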

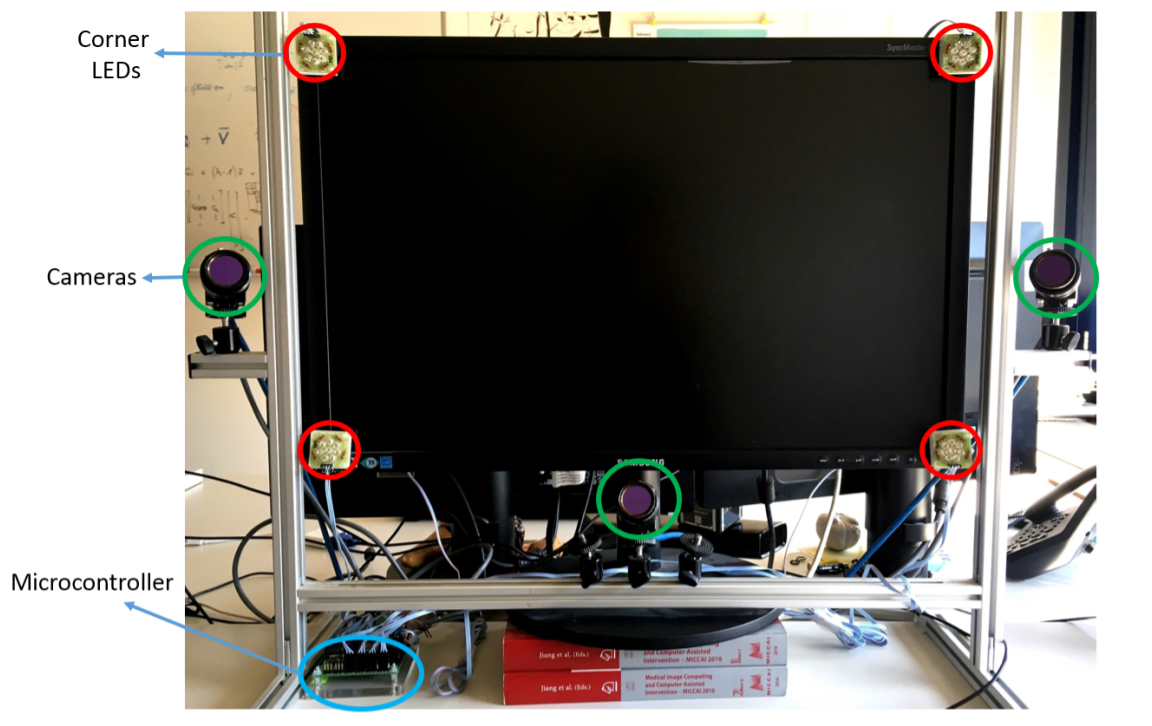

Prototype multi-view hardware setup with three cameras

Multi-camera framework overview

Patents

- EYE GAZE TRACKING SYSTEM AND METHOD, N.M. Arar, J.-P. Thiran and O. Theytaz. US20160004303, 2016.

Journals

- (new) Robust Real-Time Multi-View Eye Tracking, N.M. Arar and J.-P. Thiran, 2017 (under review).

- A Regression based User Calibration Framework for Real-time Gaze Estimation, N.M. Arar, H. Gao and J.-P. Thiran, IEEE Transactions on Circuits and Systems for Video Technology, 2016.

Conference Proceedings

- Estimating Fusion Weights of a Multi-Camera Eye Tracking System by Leveraging User Calibration Data, N.M. Arar and J.-P. Thiran, ACM Symposium on Eye Tracking Research & Applications (ETRA’16), Charleston, SC, USA, 2016.

- Robust Gaze Estimation Based on Adaptive Fusion of Multiple Cameras, N.M. Arar, H. Gao, and J.-P. Thiran, 11th IEEE International Conference on Automatic Face and Gesture Recognition (FG’15), Slovenia, May 4-8, 2015.

- Towards Convenient Calibration for Cross-Ratio based Gaze Estimation, N.M. Arar, H. Gao, and J.-P. Thiran, IEEE Winter Conference on Applications of Computer Vision (WACV’15), Waikoloa Beach, Hawaii, USA, January 6-9, 2015.

Thesis

- (new) Robust Eye Tracking Based on Adaptive Fusion of Multiple Cameras, N.M. Arar, PhD Thesis, École polytechnique fédérale de Lausanne (EPFL), Thesis n° 7933 (2017).

Principal Researcher: Nuri Murat Arar (murat.arar[at]epfl.ch, muratnarar[at]gmail.com)