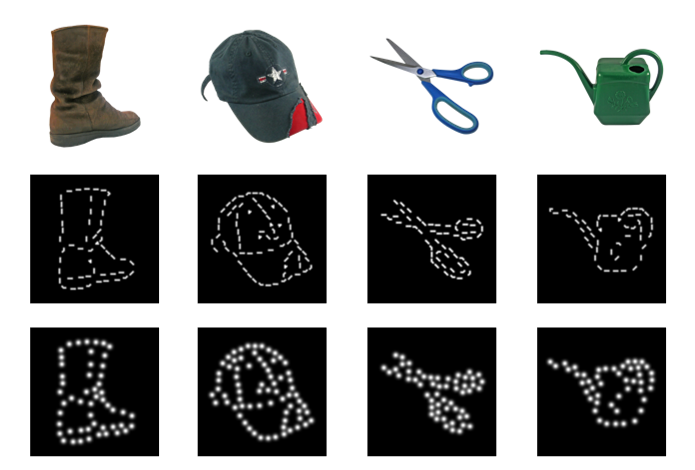

Visual prostheses offer a promising approach to restoring limited vision and improving the quality of life of blind individuals. These systems typically rely on a camera mounted on glasses to capture visual scenes, which are then processed and converted into patterns of electrical stimulation delivered by electrodes implanted in the retina, thalamus, or visual cortex. Electrical stimulation at these sites elicits discrete percepts of light, known as phosphenes, which together form the basis of visual experience under prosthetic vision. A central challenge in visual prosthetics is determining how to encode complex visual scenes within the severe constraints of electrical stimulation, where a limited number of electrodes yields only a sparse set of phosphenes.

The goal of this project is twofold. First, we aim to identify the minimal visual information required for reliable object recognition under prosthetic vision. Second, we seek to determine which visual features are most critical for recognition, so that stimulation can be targeted to informative regions while avoiding unnecessary phosphene clutter. While the primary focus is on representations relevant to early stages of visual processing, we also consider how insights from higher-order visual areas, such as area V4, may inform feature selection. To this end, we present sighted participants with objects rendered in simulated prosthetic vision at varying electrode densities, combining psychophysical experiments, computational modeling, and fMRI. With this line of research, we aim to provide a proof of principle for optimizing signal processing in visual prostheses under strict sparsity constraints.
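This page does not describe how the simulated phosphene images are generated. As a rough illustration of what "simulated prosthetic vision at varying electrode densities" can mean, the Python sketch below assumes a square, regular electrode grid, on/off thresholding of the image under each electrode, and Gaussian-shaped phosphenes. The function names `select_electrodes` and `render_phosphenes`, the `max_active` sparsity budget, and all parameter values are hypothetical and are not the authors' method. Real simulators account for further factors, such as irregular electrode placement and phosphene size varying across the visual field.

```python
import math
from typing import List, Optional, Tuple

Image = List[List[float]]  # grayscale, row-major, intensities in [0, 1]
Electrode = Tuple[float, float, float]  # (stimulation level, row, column)


def select_electrodes(image: Image, n_per_side: int, threshold: float = 0.5,
                      max_active: Optional[int] = None) -> List[Electrode]:
    """Sample the image on a hypothetical n_per_side x n_per_side electrode grid.

    An electrode is switched on when the pixel under its centre is at least
    `threshold`; if `max_active` is set, only the strongest electrodes are kept,
    mimicking a sparsity budget (an illustrative assumption).
    """
    height, width = len(image), len(image[0])
    step_y, step_x = height / n_per_side, width / n_per_side
    active = []
    for i in range(n_per_side):
        for j in range(n_per_side):
            cy, cx = (i + 0.5) * step_y, (j + 0.5) * step_x  # electrode centre
            level = image[int(cy)][int(cx)]  # nearest-pixel sample
            if level >= threshold:
                active.append((level, cy, cx))
    active.sort(key=lambda e: e[0], reverse=True)  # strongest first (stable sort)
    return active if max_active is None else active[:max_active]


def render_phosphenes(active: List[Electrode], height: int, width: int,
                      sigma: float = 1.5) -> Image:
    """Render each active electrode as an isotropic Gaussian blob of light."""
    out = [[0.0] * width for _ in range(height)]
    for level, cy, cx in active:
        for y in range(height):
            for x in range(width):
                # Squared distance from the pixel centre to the electrode centre
                d2 = (y + 0.5 - cy) ** 2 + (x + 0.5 - cx) ** 2
                blob = level * math.exp(-d2 / (2 * sigma ** 2))
                out[y][x] = max(out[y][x], blob)  # overlapping blobs do not sum
    return out


# Toy stimulus: a bright vertical bar (columns 5-14) on a dark 20 x 20 background
bar = [[1.0 if 5 <= x <= 14 else 0.0 for x in range(20)] for _ in range(20)]
for n in (4, 10):
    # prints "4 electrodes per side -> 8 phosphenes", then "... -> 50 phosphenes"
    print(n, "electrodes per side ->", len(select_electrodes(bar, n)), "phosphenes")
```

In this toy example, lowering the electrode density reduces the bar from 50 to 8 phosphenes. The `max_active` cap shows one way a sparsity budget could force a choice of which electrodes to keep. Here it simply keeps the brightest samples, whereas the project asks which visual features are most informative for recognition.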

Publications

- Scialom E, Ernst U, Rotermund D, Herzog MH (2026). Object recognition from sparse simulated phosphenes and curved segments. Vision Research, 238, 108718.

- Loued-Khenissi L, Scialom E, Lutti A, Herzog MH, Draganski B (2025, preprint). Mid-level visual features engage V4 to support sparse object recognition under uncertainty. bioRxiv, doi:10.1101/2025.10.28.68503313.