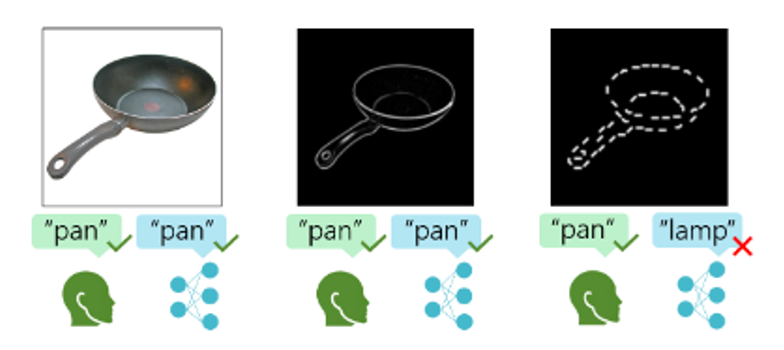

A central goal of this research is to understand how coherent object representations emerge from incomplete and ambiguous visual input, and why current deep neural networks (DNNs) often diverge from human perception in this process. We investigate this question from two complementary angles: by examining object recognition under severe visual fragmentation, and by studying the computational principles that support perceptual grouping and segmentation. Both approaches target the mechanisms that allow biological vision to organize sparse signals into structured, meaningful representations.

In one line of work, we use controlled psychophysical paradigms and computational modeling to test how humans and DNNs recognize objects when critical visual information is progressively removed. While human observers remain remarkably robust under such conditions, DNNs frequently fail, revealing systematic differences in how the two systems integrate visual features. In a complementary project, we explore the hypothesis that neural variability, typically considered noise, may play a constructive role in visual organization. By combining theoretical analysis with neural network modeling and targeted stimulus sets designed to probe grouping principles, we study how segmentation and structured representations can emerge without explicit supervision.

Together, these projects aim to clarify the computational foundations of perceptual organization and to inform the development of more biologically grounded models of vision.

Publications

- Lonnqvist B, Scialom E, Gokce A, Merchant Z, Herzog MH, Schrimpf M (2025, preprint). Contour integration underlies human-like vision. arXiv:2504.05253.

- Lonnqvist B, Wu Z, Herzog MH (2023, preprint). Latent noise segmentation: How neural noise leads to the emergence of segmentation and grouping. arXiv:2309.16515.