DOLA – Chair of Dynamics of Learning Algorithms

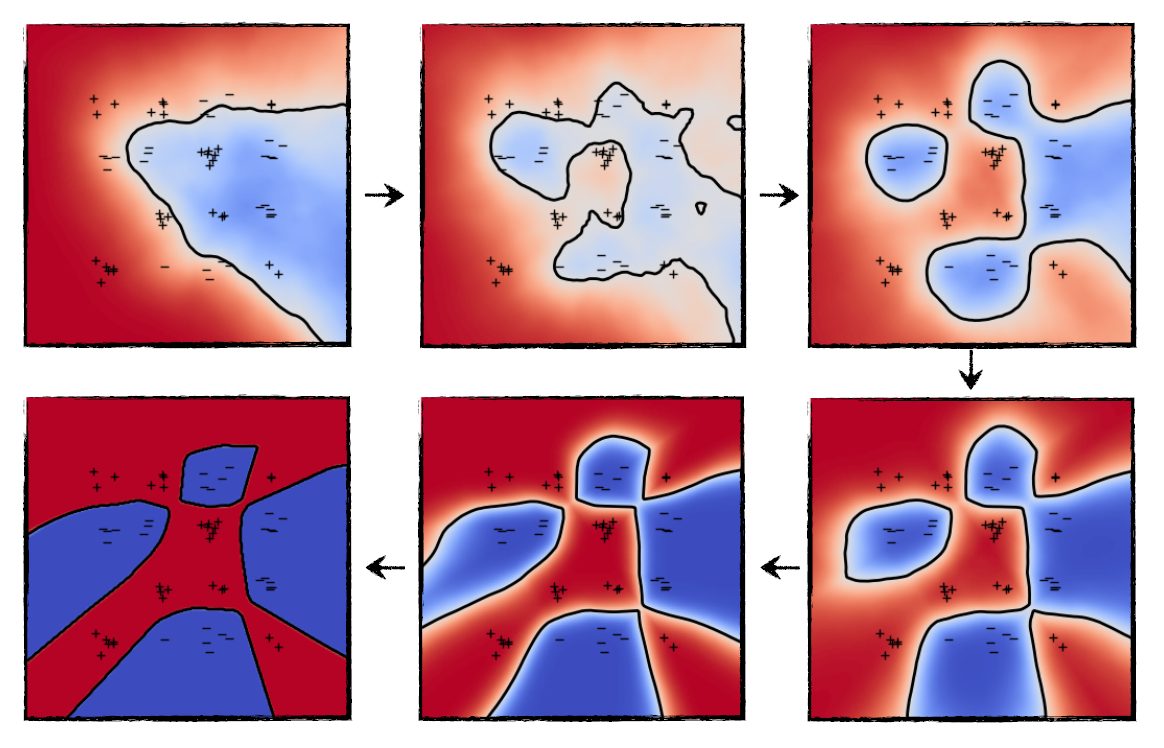

Evolution of a two-layer neural network during training on a two-class classification task. The + and – symbols mark the training points of the two classes, and the color shows the prediction of the network.

At DOLA, our goal is to understand the mechanisms behind the key algorithms used in machine learning and signal processing. What do they learn? How do they learn, and how fast? When do they fail or succeed? How can they be improved? To this end, we study the optimization, statistical, and functional approximation aspects of these algorithms, as well as their interplay, often in asymptotic regimes that facilitate mathematical analysis. Our current interest is centered on gradient methods for two-layer neural networks, sparse deconvolution, and divergences between probability measures (including the Wasserstein distance).

Contact

Prof.

Lénaïc Chizat

Office: MA C2 657

Tel: +41 21 693 69 25

Secretary

Melis Martin

Office: MA B1 483

Tel: +41 21 693 77 31