Full title

IM2.MCA (Multimodal content abstraction)

Duration of project

From January 2008 to December 2009

Funding source

Swiss National Science Foundation

Description

Automated indexing, retrieval and semantic analysis of multimedia data are interconnected issues that rely on content modeling and abstraction. It is widely recognized that the current state of practice in automated analysis cannot, on its own, extract enough useful information from multimedia documents. Furthermore, multimedia information retrieval has traditionally inherited its strategies from classical (textual) information retrieval, and mapping such techniques onto the more complex context of multimodal data has its limitations. Interactive search and retrieval techniques are therefore employed to ensure that the final result of the information processing and management system is truly relevant to the end user.

The IM2.MCA network aims to propose innovative strategies and techniques for the automatic construction of content-based representations of multimedia data. Content-based models, built upon mono- and multimodal modeling, aim to bridge the gap between such automatically derived content models and the terms users would naturally employ when constructing queries. Knowledge about both the domain and the actual users (along with their interaction) may also be used to relate content-driven models to annotation or metadata terms, allowing efficient human-machine interaction.

The MMSPL team is involved in IM2.MCA, working on:

IM2.MCA (A2) Tagged media-aware multimodal content annotation

Approaches to multimedia content access based on content analysis alone have not delivered widely accepted solutions. User activities in social networks, such as tagging, annotating and rating multimedia content, offer an entirely new perspective on how to solve the multimedia content access problem.

The goal of this project is to find new models of interaction between automatic multimedia content analysis and social tagging. The project takes as examples of success new services and products such as Wikipedia, Flickr, YouTube, MySpace, Second Life and many others, which bear witness to the fundamental changes taking place in the field of networked media. Our research will aim either to adapt existing technological components or to create the new ones needed to implement and deploy next-generation media networks. The project is also expected to be a key contributor to the JPSearch standardization effort in JPEG.

IM2.MCA (B2) Multimodal quality metrics for multimedia content abstraction

Multimedia services rely on the presence of two main actors: the human subject, who is the end user of the service, and the multimedia content, which is the object of the multimedia communication. In this scenario, one of the most relevant features, implicitly taken into account by the end user, is the quality of the multimedia data involved in the application of interest. The user interacts with the multimedia data and very easily judges the quality of its content and, more broadly, the quality of the multimedia experience in which she/he is participating. Quality is a peculiar feature of multimedia content: it depends on the characteristics of the content itself, but it is also closely tied to the subjectivity of the human beings who interact with that content. This is why user-media interaction can be described as a “multimedia experience”.

Our research in this scenario focuses on modeling subjective visual, audio, and joint audiovisual quality assessment, and on mapping these models into objective algorithms, i.e. metrics. The goal of our study is to provide objective metrics that allow automatic, real-time evaluation of multimedia content quality and that correlate highly with actual human perception. In particular, an important part of our research concentrates on understanding and modeling the multimodal perception of quality, in order to design a metric for assessing the more general and complex concept of Quality of Experience in a multimedia service.
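To illustrate what "objective metrics highly correlated with human perception" involves in practice, the sketch below shows three common ingredients. It is not the project's metric. PSNR serves as a simple stand-in for a visual quality metric. The audiovisual score is fused with a multiplicative model of the form MOS ≈ a + b·Q_audio·Q_video, a form used in parts of the audiovisual quality literature and assumed here for illustration. The Pearson correlation measures agreement between predictions and subjective mean opinion scores (MOS). All function names are hypothetical, and frames are assumed to be flattened lists of pixel values.

```python
import math
from typing import Sequence, Tuple


def psnr(reference: Sequence[float], distorted: Sequence[float], peak: float = 255.0) -> float:
    """Peak signal-to-noise ratio (dB) between two equally sized, flattened frames."""
    if not reference or len(reference) != len(distorted):
        raise ValueError("frames must be non-empty and of equal size")
    mse = sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames: no measurable distortion
    return 10.0 * math.log10(peak ** 2 / mse)


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson linear correlation, a usual check of objective-vs-subjective agreement."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def fit_av_fusion(q_audio: Sequence[float], q_video: Sequence[float],
                  mos: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of the ASSUMED fusion model MOS ~ a + b * q_audio * q_video.

    This is ordinary linear regression on the single product term; returns (a, b).
    """
    prod = [qa * qv for qa, qv in zip(q_audio, q_video)]
    n = len(prod)
    mp, mm = sum(prod) / n, sum(mos) / n
    sxx = sum((p - mp) ** 2 for p in prod)
    sxy = sum((p - mp) * (m - mm) for p, m in zip(prod, mos))
    b = sxy / sxx
    a = mm - b * mp
    return a, b


def predict_av(a: float, b: float, q_audio: float, q_video: float) -> float:
    """Predicted audiovisual quality under the fitted multiplicative model."""
    return a + b * q_audio * q_video
```

In a validation study, `pearson` would be applied to the model's predictions and the MOS collected in subjective tests. Rank-order (Spearman) correlation is often reported alongside it.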

Research activities

The focus and objectives of the project are organized into three main research themes:

- Multimodal information search and retrieval

- Multimedia document content abstraction

- Multimedia information mining

IM2.MCA – Tagged Media-Aware Multimodal Content Annotation

Over the last few years, social network systems have greatly increased internet users’ involvement in content creation and annotation. These systems are easy to use and interactive. Users contribute their opinions by annotating content (so-called tagging), adding tags, comments and recommendations, or rating the content.

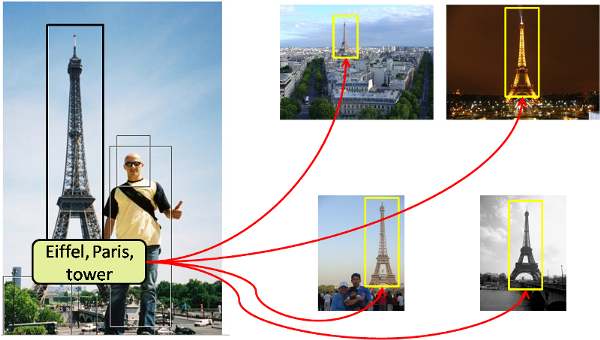

This research project aims to develop a system that enriches images with social network tags. We plan to exploit object duplicate detection to extract semantic context from an image and use it for efficient image annotation. More specifically, the user provides the image to be tagged. Object duplicate detection is then performed to find images containing the same object in a large collection of tagged images, such as Flickr. Matching tags can then be merged and propagated to new images containing the same object. For instance, a tourist takes a picture of the Eiffel Tower with a mobile phone. By querying Flickr, the system can identify a number of tagged images that also depict the Eiffel Tower. The matching tags, e.g. “Eiffel tower” and “Paris”, are merged and finally assigned to the newly captured image. This also makes it possible to determine the context of the picture. This approach offers a compelling new way to generate semantic context and metadata easily, enabling efficient context-aware management and organization of personal image collections.
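The tag merging and propagation step can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's system. Each image is assumed to be reduced to a set of quantized local-feature ids ("visual words"). The hypothetical `is_object_duplicate` predicate, a plain Jaccard-overlap test, stands in for a real object duplicate detector. Tags from all matching images are normalized and then kept only if enough matches agree on them. The threshold values are illustrative assumptions.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import FrozenSet, List, Tuple


@dataclass
class TaggedImage:
    # Hypothetical representation: visual-word ids plus user tags (e.g. harvested from Flickr).
    visual_words: FrozenSet[int]
    tags: List[str] = field(default_factory=list)


def is_object_duplicate(a: FrozenSet[int], b: FrozenSet[int], threshold: float = 0.3) -> bool:
    """Toy stand-in for object duplicate detection: Jaccard overlap of visual words."""
    if not a or not b:
        return False
    return len(a & b) / len(a | b) >= threshold


def propagate_tags(query_words: FrozenSet[int], collection: List[TaggedImage],
                   min_support: float = 0.5, threshold: float = 0.3) -> List[Tuple[str, float]]:
    """Merge the tags of all images showing the same object as the query.

    A tag is kept if it occurs in at least `min_support` of the matching images.
    Returns (tag, support) pairs, highest support first, ties broken alphabetically.
    """
    matches = [img for img in collection
               if is_object_duplicate(query_words, img.visual_words, threshold)]
    if not matches:
        return []
    counts: Counter = Counter()
    for img in matches:
        # Normalize case/whitespace and count each tag once per image.
        counts.update({t.strip().lower() for t in img.tags})
    n = len(matches)
    kept = [(tag, c / n) for tag, c in counts.items() if c / n >= min_support]
    return sorted(kept, key=lambda tc: (-tc[1], tc[0]))


# Illustrative usage with made-up visual words mirroring the Eiffel Tower example.
collection = [
    TaggedImage(frozenset({1, 2, 3, 4}), ["Eiffel Tower", "Paris", "night"]),
    TaggedImage(frozenset({1, 2, 3, 5}), ["eiffel tower", "Paris", "France"]),
    TaggedImage(frozenset({7, 8, 9}), ["beach"]),
]
result = propagate_tags(frozenset({1, 2, 3, 6}), collection, min_support=0.75)
print(result)  # [('eiffel tower', 1.0), ('paris', 1.0)]
```

Requiring agreement across several matches (`min_support`) is one simple way to filter out idiosyncratic tags such as "night" before they are propagated to the new image.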