SOFTWARE

Introduction

This chapter deals with the software infrastructure for the long term preservation of audiovisual archives. We will define the main requirements of the software that manages the digital preservation process: it must be able to drive the different hardware components (servers and tape libraries) and to undertake all the normal functions of a digital storage system (ingest and task management, metadata extraction and management, preservation and backup storage). We will also describe the architecture of the system, following the OAIS reference model [3].

Before beginning, it is important to state that backup solutions are out of our scope. There is indeed a common confusion between backup and archiving, which often leads vendors to put forward solutions that are not only overkill in terms of performance (and therefore in terms of cost) but also inadequate for long term preservation. Following [2], backup and archive solutions are incompatible in several respects (p. 14):

- A backup is nothing more than a secondary copy of all the documents produced by a given business, whatever their retention periods (for example, a backup will save temporary drafts). Its aim is to provide fast recovery of these documents in case of disaster or system shutdown. The usual features of a backup solution therefore do not fit archival requirements: they are either insufficient or overkill.

- “Backup systems rarely address media degradation and format obsolescence”. Backups are made continually, and the data are replaced by later copies before the media become endangered.

- “Backup systems typically do not address long-term information readability”, for the same reason as above, and because they do not preserve the primary copy of information; permanent access to data is therefore out of the scope of backup systems.

- Finally, since backup systems do not preserve primary information, they do not address the authenticity, immutability and security of that information.

Software architecture

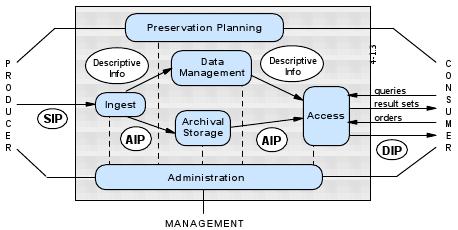

To clarify some concepts, it may be useful to briefly summarize the OAIS reference model, which is the standard for organizations dedicated to the long term preservation of digital material (as well as physical material). Not all the concepts it defines are useful for our purpose here, however.

OAIS – General overview

An OAIS (Open Archival Information System), as defined by [3], is “an archive, consisting of an organization of people and systems, that has accepted the responsibility to preserve information and make it available for a Designated Community”. The reference model only provides frameworks, concepts and terminology. The archive is defined as a system of interconnected elements exchanging information with the aim of preserving data, digital data as well as physical objects. The model thus describes “the environment in which an archive resides, the functional components of the archive itself, and the information infrastructure supporting the archive’s processes” [10].

The functional entities group together the main functions of the system from the acquisition of the information to its distribution:

- Ingest (functions dedicated to the reception and the description of information for archival storage).

- Archival Storage (functions related to the long term preservation of the information: storage, maintenance and retrieval).

- Data management (functions related to the administration and the updating of the database).

- Administration (overall operation of the archive system).

- Preservation planning (monitoring the environment of the OAIS and providing recommendations to ensure that the information remains accessible to the Designated User Community over the long term).

- Access (allowing users to reach the information they need).

Each one of those entities has responsibilities to perform and information to give to and receive from other entities.

To preserve information content, an OAIS [3] “must store significantly more than the contents of the object it is expected to preserve” (p. 4-19). The main information (data and metadata) that the OAIS model recommends preserving is contained in three kinds of information packages: Submission (SIP), Archival (AIP) and Dissemination (DIP) Information Packages. Each Information Package is made up of:

- Content Information:

  - Content Data Object (the data to be preserved).

  - Representation Information (technical metadata).

- Preservation Description Information (PDI):

  - Provenance.

  - Context.

  - Reference.

  - Fixity.

- Packaging Information (information which binds, identifies and relates the Content Information to the PDI, thus forming the Information Package).

- Descriptive Information (used to retrieve and access the package).
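As an illustration only, the package structure listed above can be sketched as Python dataclasses. The class and field names are hypothetical and follow the OAIS terms loosely; they do not come from the OAIS model itself or from any particular repository software. Packaging Information is reduced here to a simple dictionary describing the container, and the example values (reference, provenance, format) are invented placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ContentInformation:
    content_data_object: bytes            # the data to be preserved
    representation_info: Dict[str, str]   # e.g. technical metadata (format, codec)


@dataclass
class PreservationDescriptionInformation:
    provenance: List[str]    # history of the object: origin, custody, processing
    context: str             # relationships with other objects
    reference: str           # identifier(s) of the object
    fixity: Dict[str, str]   # e.g. {"crc32": "..."}, used to verify integrity


@dataclass
class InformationPackage:
    package_type: str                       # "SIP", "AIP" or "DIP"
    content: ContentInformation
    pdi: PreservationDescriptionInformation
    packaging_info: Dict[str, str] = field(default_factory=dict)    # hypothetical container description
    descriptive_info: Dict[str, str] = field(default_factory=dict)  # used to search and retrieve the package


# Hypothetical example: an AIP for one concert recording.
aip = InformationPackage(
    package_type="AIP",
    content=ContentInformation(b"...", {"format": "application/mxf"}),
    pdi=PreservationDescriptionInformation(
        provenance=["digitized from the original tape"],
        context="part of the 1978 festival recordings",
        reference="MJF-1978-001",   # hypothetical identifier
        fixity={},                  # filled in at ingest
    ),
    packaging_info={"container": "directory"},
    descriptive_info={"title": "Concert recording", "year": "1978"},
)
```

Separating the PDI from the Content Information in this way mirrors the model: fixity and provenance can be updated (after an audit or a migration) without touching the preserved data itself.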

Because the OAIS information model is consistent with a comprehensive, structured view of the archival system it supports, it provides a sound basis for implementing efficient metadata schemes.

In practice, the reference model is very helpful for designing a system whose goal is to preserve information over the long term, and it can serve as a basis for a kind of “certification”. It makes it possible to take into account all the necessary issues:

- The different kinds of information to be documented, and a framework to develop metadata.

- The structures to implement to ensure the circulation of information between the entities.

- The actions to take in order to preserve information over the long term.

This model, created by the Consultative Committee for Space Data Systems, has been adopted as an ISO standard (ISO 14721:2002). It is now widely accepted in the community and has been developed further in several other documents, such as:

- Trusted Digital Repositories [14]: this report provides a “framework of attributes and responsibilities for trusted, reliable, sustainable digital repositories capable of handling the range of materials held by large and small research institutions”. It thus explains what has to be done at the organizational level to conform to the OAIS model.

- The PREMIS data dictionary [11]: a data dictionary of the core elements needed to support digital preservation, including implementation details such as repeatability, obligation and examples. Built on the OAIS reference model, it is seen as the only existing dictionary for preservation metadata.

OAIS – Functional entities

As already said, the OAIS model describes an organization, not a software architecture. But it defines the functions to be carried out in order to ensure the proper preservation of digital documents (see chapter 4.1). Most of those functions are “human” tasks and responsibilities (they are developed in [14]), which means that they have to be defined at the organizational level. For example, one has to define the formats accepted for Ingest, or the minimal set of metadata that producers have to provide when submitting information.

However, some of the functions can, and must, be automated, particularly when dealing with large amounts of data and frequent migrations. From this point of view, it is useful to review all of the OAIS functional entities, in order to better understand what the software should be able to do.

In the following subsections, we will therefore briefly summarize all the functional entities (blue boxes in the figure below) and detail the important features that the software should manage.

(Figure from [3], p. 4-1)

Common services

Common services are the supporting services present in all computing applications. Although those services are “common”, it is necessary to define them here, in the specific scope of long term preservation.

Long term preservation implies that the system, with its software applications and the data it contains, will be able to face future (and unavoidable) change: replacement of obsolescent software, hardware or media, migrations, etc. Moreover, a system suitable for long term preservation has to deal with heterogeneous components (this will be discussed in the next section on practical requirements). To guarantee these abilities, the interoperability and portability of common services must be ensured through the use of open standards and specifications, in three categories of services [7]:

- The operating system services: they are the core services needed to run the application software and manage the resources of the application software.

- The network services: they are central, since they ensure transparent communication within a heterogeneous system integrating components from different vendors. Building heterogeneous systems has several advantages: it makes it possible to use components (for the different functional entities) from different vendors, so that a single component, rather than the whole system, can be replaced when obsolescence occurs; moreover, it prevents vendor lock-in and decreases the risks related to a single provider (monoculture). More details on this topic are given in the next section on practical requirements.

- The security services, which provide the means to protect information through mechanisms of identification, authentication, data integrity (guaranteeing that data is not damaged by an unauthorized person), confidentiality, etc.

Any software solution should conform to these principles of interoperability and portability to be adequate for long term preservation. For that purpose, the IEEE POSIX OSE model [7] (or another similar OSE model) seems to be a good reference when designing a system, and should be adopted as a standard.

Ingest

The software should include a function able to manage the ingest of new data. This means allocating temporary storage space for a few preliminary tasks: performing quality assurance through Cyclic Redundancy Checks (CRC), and generating an AIP by extracting the metadata, adding further metadata and converting the data to the selected format. This function then generates descriptive information to transmit to the database; if content-based indexing is performed automatically, it will take place during this phase. Finally, the AIP is transferred electronically to the archival storage entity (the tape library).

Two options are possible: if the digitization is done by a third-party service (e.g. the Sony preservation factory), all of these tasks will be carried out by this service, including the transfer to data tapes; conversely, if the digitization is done on the premises, we have to set up, in addition to the digitization tools, disk space, encoders and indexing tools.

We will discuss the problem of metadata extraction and indexing in more detail in the chapter dedicated to metadata.
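A minimal sketch of these ingest steps, assuming the producer supplies a CRC-32 with each submission and that the submitted data fits in memory (a real system would stream the file and update the checksum chunk by chunk with `zlib.crc32(chunk, crc)`). The function names and the dictionary layout of the AIP are hypothetical; format conversion, metadata extraction and content-based indexing appear only as placeholder comments.

```python
import zlib


def crc32_hex(data: bytes) -> str:
    """Return the CRC-32 of `data` as 8 lowercase hexadecimal digits."""
    return format(zlib.crc32(data) & 0xFFFFFFFF, "08x")


def ingest(data: bytes, expected_crc: str, descriptive_info: dict, target_format: str) -> tuple:
    """Hypothetical ingest step: verify a submission, then build a minimal AIP
    record and the descriptive entry to be sent to Data Management."""
    # 1. Quality assurance: compare with the CRC supplied by the producer.
    actual = crc32_hex(data)
    if actual != expected_crc.lower():
        raise ValueError(f"CRC mismatch: expected {expected_crc}, got {actual}")

    # 2. Conversion to target_format and metadata extraction would happen here
    #    (placeholder: only the size is recorded as technical metadata).
    technical = {"format": target_format, "size_bytes": len(data)}

    # 3. Minimal AIP record: content, representation information, fixity, description.
    aip = {
        "content": data,
        "representation_info": technical,
        "fixity": {"crc32": actual},
        "descriptive": dict(descriptive_info),
    }

    # 4. Descriptive entry for the catalog (content-based indexing would add to it).
    catalog_entry = dict(descriptive_info, crc32=actual)
    return aip, catalog_entry


# Usage with a stand-in payload instead of a real video file.
payload = b"stand-in for an encoded video file"
aip, entry = ingest(payload, crc32_hex(payload), {"title": "Concert recording"}, "application/mxf")
```

Rejecting the submission as soon as the checksum fails keeps a corrupted transfer from ever reaching the tape library; the same CRC is then stored as fixity information for later audits.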

Archival Storage

Following [3], “Archival Storage functions include receiving AIPs from Ingest and adding them to permanent storage, managing the storage hierarchy, refreshing media on which archive holdings are stored, performing routine and special error checking, providing disaster recovery capabilities, and providing AIPs to Access to fulfill orders” (p. 4-1).

Some of these functions relate to the transfer of information packages from one storage device to another, for example from the data tapes to the secondary archives for access, and others to the long term preservation issues themselves (migration, authenticity, etc.). Following [3] and [9], the system should be able to automatically:

- Handle the reliable transport of data. This means using interoperable software and an HSM (Hierarchical Storage Manager), which moves information from one storage tier to another according to needs and usage, as defined by the policy (saving space, improving access times and decreasing storage costs).

- Allocate unique, persistent (vendor-independent) identifiers to objects, and bind them permanently to their objects.

- Perform migrations (media refreshing, and format migration where needed) in order to avoid hardware and format obsolescence, replacing media without loss.

- Perform error checking through random verification of data integrity (using Cyclic Redundancy Checks, for example).

- Guarantee the authenticity of objects (through cryptographic techniques such as digital signatures, or through access controls).

- Alert the administrator of problems with the content, environment or storage hardware early enough for the administrator to take action and if necessary shut down and protect assets in the event of a crisis [4].

- Ensure failure and disaster recovery, for example through mirroring of the whole repository (see next section on practical requirements).
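The identifier allocation, error checking and refreshing functions listed above can be illustrated with a toy model. It is a sketch under strong simplifying assumptions: each storage medium (for instance a tape, with a hypothetical label such as `LTO-0001`) is modelled as an in-memory dictionary, identifiers are UUID URNs as defined in RFC 4122 (one possible vendor-independent scheme among others), and CRC-32 is used for fixity. The class and method names are hypothetical; a real system would delegate these operations to its storage layer and alert the administrator when `audit` reports failures.

```python
import random
import uuid
import zlib


def crc32_hex(data: bytes) -> str:
    """CRC-32 of `data` as 8 lowercase hexadecimal digits."""
    return format(zlib.crc32(data) & 0xFFFFFFFF, "08x")


class ArchivalStore:
    """Toy archival storage. `media` maps a medium label to {identifier: bytes};
    `catalog` maps each identifier to its current medium and reference CRC."""

    def __init__(self):
        self.media = {}
        self.catalog = {}

    def store(self, medium: str, data: bytes) -> str:
        # Allocate a unique, vendor-independent identifier (a UUID URN, RFC 4122).
        identifier = uuid.uuid4().urn
        self.media.setdefault(medium, {})[identifier] = data
        self.catalog[identifier] = {"medium": medium, "crc32": crc32_hex(data)}
        return identifier

    def is_intact(self, identifier: str) -> bool:
        """True if the object is present on its medium and matches its reference CRC."""
        entry = self.catalog[identifier]
        data = self.media.get(entry["medium"], {}).get(identifier)
        return data is not None and crc32_hex(data) == entry["crc32"]

    def audit(self, sample_size: int, rng=random) -> list:
        """Check a random sample of objects; return the identifiers that fail,
        which a real system would report to the administrator."""
        ids = rng.sample(sorted(self.catalog), min(sample_size, len(self.catalog)))
        return [i for i in ids if not self.is_intact(i)]

    def refresh(self, old_medium: str, new_medium: str) -> None:
        """Copy every object from old_medium to new_medium. Each copy is checked
        against the reference CRC before the catalog points to it; the old
        medium is retired only after all copies have succeeded."""
        for identifier, data in list(self.media.get(old_medium, {}).items()):
            copy = bytes(data)
            if crc32_hex(copy) != self.catalog[identifier]["crc32"]:
                raise OSError(f"{identifier} is corrupt on {old_medium}; restore it from a mirror")
            self.media.setdefault(new_medium, {})[identifier] = copy
            self.catalog[identifier]["medium"] = new_medium
        self.media.pop(old_medium, None)


# Usage: store one object, then refresh it onto a new (hypothetical) tape.
store = ArchivalStore()
ident = store.store("LTO-0001", b"concert A")
store.refresh("LTO-0001", "LTO-0002")
```

Because `refresh` updates the catalog only after each copy has been checked, the identifier stays bound to an intact copy of its object throughout the operation, and the persistent identifier never changes when the medium does.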

Data Management

This functional entity refers to the database management software, and includes all the usual tasks managed by common databases, such as generating reports, performing queries, etc. It covers not only descriptive information (or “bibliographic” metadata used to search the catalog and retrieve assets) but also system information. The goal of these functions is to provide “organized access” [4] to the data.
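To make the distinction between descriptive and system information concrete, here is a sketch using Python's built-in `sqlite3` module. The two tables, their columns and the helper functions are hypothetical and deliberately minimal; they are not the schema that the chapter on metadata will define.

```python
import sqlite3


def create_catalog() -> sqlite3.Connection:
    """Create an in-memory catalog with one table of descriptive information
    and one table of system information (hypothetical names)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE asset (
            id        TEXT PRIMARY KEY,  -- persistent identifier
            title     TEXT NOT NULL,     -- descriptive ("bibliographic") information
            performer TEXT,
            year      INTEGER
        );
        CREATE TABLE storage_event (
            asset_id  TEXT NOT NULL REFERENCES asset(id),  -- system information
            event     TEXT NOT NULL,                       -- e.g. 'ingest', 'audit', 'refresh'
            medium    TEXT,
            ok        INTEGER NOT NULL                     -- 1 = success, 0 = failure
        );
    """)
    return conn


def add_asset(conn, asset_id, title, performer, year):
    conn.execute("INSERT INTO asset VALUES (?, ?, ?, ?)", (asset_id, title, performer, year))


def log_event(conn, asset_id, event, medium, ok):
    conn.execute("INSERT INTO storage_event VALUES (?, ?, ?, ?)",
                 (asset_id, event, medium, int(ok)))


def search_by_performer(conn, performer):
    """Catalog query on descriptive information."""
    rows = conn.execute("SELECT title FROM asset WHERE performer = ? ORDER BY year", (performer,))
    return [title for (title,) in rows]


def failure_report(conn):
    """Report on system information: number of failed events per medium."""
    return conn.execute(
        "SELECT medium, COUNT(*) FROM storage_event WHERE ok = 0 "
        "GROUP BY medium ORDER BY medium"
    ).fetchall()
```

The same database thus answers both a user's catalog search and an administrator's report on which media show integrity failures.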

We will discuss the problem of managing metadata (and in particular the definition of the database’s schema and tables) in the chapter dedicated to metadata.

Administration and Preservation planning

These functions mainly concern the organizational level: establishing standards, policies and strategies, controlling physical access, technology watch, etc. We therefore won’t discuss them in this document.

Access

The software managing the Access entity consists mainly of a user interface allowing the search and retrieval of digital assets; but it must also generate DIPs in an appropriate format (possibly more compact than the AIP) and transfer them to temporary storage space for rapid access.

It is also important that the access entity manages the access rights, in order to guarantee confidentiality and integrity of data by ensuring that nobody – by mistake or abuse – will modify or destroy original data.
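The access-rights rule above can be sketched as a small role-based check. The roles, operation names and the function `make_dip` are hypothetical; what the sketch illustrates is that operations which would modify or delete an AIP are refused to every role through the Access entity, and that a DIP is always built as a separate record. Conversion to a more compact access format is not shown.

```python
from enum import Enum


class Role(Enum):
    PUBLIC = "public"
    RESEARCHER = "researcher"
    ARCHIVIST = "archivist"


# Hypothetical policy: operations each role may perform through Access.
PERMISSIONS = {
    Role.PUBLIC: {"search", "view_dip"},
    Role.RESEARCHER: {"search", "view_dip", "order_dip"},
    Role.ARCHIVIST: {"search", "view_dip", "order_dip", "view_aip"},
}
# Operations on original data that Access refuses to every role.
FORBIDDEN = {"modify_aip", "delete_aip"}


def is_allowed(role: Role, operation: str) -> bool:
    if operation in FORBIDDEN:
        return False
    return operation in PERMISSIONS.get(role, set())


def make_dip(aip: dict, role: Role, access_format: str) -> dict:
    """Build a DIP as a new record derived from the AIP, never as a reference
    to it, so the original cannot be altered through the DIP. Transcoding to
    access_format is not shown."""
    if not is_allowed(role, "order_dip"):
        raise PermissionError(f"role '{role.value}' may not order DIPs")
    return {"source_id": aip["id"], "title": aip.get("title", ""), "format": access_format}
```

Refusing destructive operations before any role lookup means that even a misconfigured permission table cannot grant them.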

Conclusion

The OAIS reference model allows us to design a software architecture that includes all the entities above and supports all the functions necessary for long term preservation.

Moreover, it is preferable to use components with high integrability, portability and interoperability, which means relying on open standards and specifications.

In the next section, we will examine the practical requirements of such an architecture, that is, the practical issues the system has to address.

Practical requirements

We have seen what the components of an archive are, what they should be able to do, and how they have to interact. Now we will look at the requirements of an archive, that is, the measures that should be taken to guarantee long term preservation in a digital environment. We will therefore only discuss preservation issues (permanent access), not performance (fast access). These requirements should be part of the requirements specification.

If we aim to preserve the integrity of the Montreux Jazz Festival audiovisual heritage for generations, and even indefinitely, the longevity commonly claimed by computer hardware vendors cannot provide sufficient confidence. When computer vendors speak about “long term” reliability, they usually don’t think much beyond five years. There are several reasons for this, related to technological and economic constraints; but the important point is that digital curators have no alternative but to deal with it: for the time being, there is no definitive method guaranteeing access to information for an indefinite period of time, as paper did. The conclusion is that “the approach for digital preservation then, is not to build permanent systems, but rather to construct systems which will facilitate the management and preservation of the data content in the face of change” [1]. Bradley et al. advocate open standards and specifications, which facilitate this change and reduce the costs of migrations. But there are other important requirements.

To reach the goal of permanent access to digital information, it is first necessary to identify the threats. [13] proposes a typology of the different risks, including technological risks (media, hardware or software failures and obsolescence), natural risks (natural disasters, like fire), and human and organizational risks (operator error, abuse, bankruptcy, attacks, etc.). Once these risks are defined for each particular situation, the adequate strategies can be adopted from the set defined by [13]: replication, migration, transparency, diversity, audit, economy and sloth. Going into these strategies in detail is beyond the scope of this document, since they include, for example, the choice of the appropriate media, the migration policies, the economic viability of the whole archive, etc.

However, [13] gives three key properties that help anticipate the risks: if the system (and its software) complies with these properties, threats are less likely to materialize. For example, the cost of the system (not only the purchasing cost, but also the maintenance cost) is a risk: if it is too high, funds could be unavailable in the future to maintain the archive. Using open standards may reduce not only the purchasing cost but also the cost of migrating from one system to a new one (see below: diversity).

Here we will see the system’s requirements for each of the key properties: reliability, diversity, and error checking and monitoring.

Reliability

“At minimum, the system must have no single point of failure; it must tolerate the failure of any individual component. In general, systems should be designed to tolerate more than one simultaneous failure” [13].

Reliability of the system can be ensured by replicating the data (mirroring) on remote sites, which decreases the probability of data loss. In disk arrays, RAID or MAID systems that duplicate data are another way of reducing the risk of failure, although this matters mainly when fast recovery is needed, which is not the case for the primary archive.

Another possible way is to use the most reliable hardware. We already discussed the choice of carriers in the chapter on hardware; what is important here is to take into account the manufacturer’s reliability tests, and to consider values such as MTBF (Mean Time Between Failures), AFR (Annual Failure Rate), number of loads/unloads, etc. Another important criterion is “sloth”: simple products, with fewer components, not at the leading edge of technology and performance, will last longer and be less prone to failures and errors. For example, SATA drives are well suited for preservation because of their low spinning speed. Likewise, the more cooling, environmental control and power a system needs, the more it will be prone to failures, and to the cessation of funding; in this sense, MAIDs are well suited for long term preservation.
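The MTBF and AFR figures mentioned above, and the benefit of replication, can be related with a short calculation. This sketch assumes a constant failure rate (exponential model), so that AFR = 1 − exp(−8760 / MTBF) with MTBF in hours, and treats replica failures as independent with no repair during the year. Both are simplifying assumptions: repair makes the real risk lower, while correlated failures (same manufacturing lot, same site) make it higher, which is why carriers from different lots and manufacturers matter.

```python
import math

HOURS_PER_YEAR = 8760


def afr_from_mtbf(mtbf_hours: float) -> float:
    """Annual failure rate implied by an MTBF, assuming a constant failure rate."""
    if mtbf_hours <= 0:
        raise ValueError("MTBF must be positive")
    return 1.0 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


def p_all_copies_lost(afr: float, copies: int) -> float:
    """Probability that all `copies` replicas fail within the same year,
    assuming independent failures and no repair during the year."""
    if copies < 1:
        raise ValueError("at least one copy is required")
    return afr ** copies


# Compare one, two and three copies for a device quoted at 1,000,000 hours MTBF.
for n in (1, 2, 3):
    print(n, p_all_copies_lost(afr_from_mtbf(1_000_000), n))
```

Under this model, a quoted MTBF of 1,000,000 hours corresponds to an AFR of roughly 0.87%, and three independent copies bring the yearly probability of losing all of them below one in a million. The result is only as good as the manufacturer figures and the independence assumption fed into it.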

Finally, using carriers from different lots and from different manufacturers decreases the probability of total loss due to manufacturing defects.

The reliability of the system helps guarantee the integrity of the data, and also reduces the costs of maintaining the system. One should therefore prefer simple, long-tested and robust components over the latest technologies, even if the latter perform better.

Diversity

“Media, software and hardware must flow through the system over time as they fail or become obsolete, and are replaced. The system must support diversity among its components to avoid monoculture vulnerabilities, to allow for incremental replacement, and to avoid vendor lock-in” [13].

For example, [15] says that “The benefits of a standardized data tape format [like LTO] are lost when the systems that control the data tape drives write custom, library-specific data to the tapes. This locks the facility into a single vendor’s storage library product, and make interchange impossible with customers and other facilities that may use another vendor’s library system” (p. 33), which also applies to migration. [9] also advocates the use of vendor-independent, unique and persistent identifiers.

In general, integrating components involves the use of technologies capable of interoperability and portability, based on open specifications and standards (see the sub-section on “Common services” above). Since every layer of a heterogeneous preservation system has its own obsolescence cycle, using open standards makes this “incremental replacement” possible.

Error checking and monitoring

“Most data items in an archive are accessed infrequently. A system that detected errors and failures only upon user access would be vulnerable to an accumulation of latent errors. The system must provide for regular audits at intervals frequent enough to keep the probability of failure at acceptable levels” [13].

These audits must cover the content, the hardware and the software, as well as the storage environment (fire alarm, temperature, humidity), and must rely on systematic and automatic processes: CRC, alerts, etc.
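Environmental monitoring of this kind amounts to comparing sensor readings with acceptable ranges and raising alerts automatically. In the sketch below, the sensor names and numeric limits are hypothetical placeholders, not recommended values; the actual ranges should come from the media manufacturers’ specifications and the archive’s own policy.

```python
import math
from dataclasses import dataclass

# Hypothetical acceptable ranges (placeholders, not recommendations).
LIMITS = {
    "temperature_c": (16.0, 25.0),
    "humidity_pct": (20.0, 50.0),
}


@dataclass
class Alert:
    sensor: str
    value: float
    message: str


def check_environment(readings: dict) -> list:
    """Compare readings with LIMITS and return the list of alerts to send.
    A missing reading is reported too, since a silent sensor can hide a problem."""
    alerts = []
    for sensor, (low, high) in LIMITS.items():
        value = readings.get(sensor)
        if value is None:
            alerts.append(Alert(sensor, math.nan, "no reading"))
        elif not low <= value <= high:
            alerts.append(Alert(sensor, value, f"outside the range [{low}, {high}]"))
    if readings.get("fire_alarm"):
        alerts.append(Alert("fire_alarm", 1.0, "fire alarm triggered"))
    return alerts
```

Run periodically, such a check complements the fixity audits on content: the one detects latent data errors, the other detects conditions likely to cause them.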

Conclusion

Costs have been mentioned only implicitly; of course, the choice of any component of a digital preservation system should take this factor into account. Another important point is that the economic viability of the archive also depends on the dissemination of the data it contains; this means making preservation profitable, which gives the archive a degree of independence.

The aim here is not to develop strategies for profiting from the Montreux Jazz Festival audiovisual capital, but simply to mention systems that treat data as “assets” to be retrieved and disseminated easily: Digital Asset Management systems (DAM), or more specifically Multimedia Asset Management systems (MAM).

At a minimum, a DAM can be a simple file manager; but it can also integrate many specialized modules for workflow management, indexing and cataloging, search and retrieval, and dissemination, including Digital Rights Management (DRM). Storage management is of course also included, with functionalities such as HSM or ILM (Information Lifecycle Management). This kind of system is used not only in the broadcasting industry [8] [6], but also in preservation contexts, where profitability is also an issue [5].

The system architecture we recommend here, with the necessary components fitting the requirements, actually takes the form of a DAM. The only difference is that the workflow itself will be less demanding than in broadcasting, where the system has to ingest, process and disseminate large amounts of data at every moment. Designing a digital repository involves not only choosing the right software, formats and hardware, but also being able to integrate them. Software vendors should therefore not only provide their own products, but also act as “system integrators” [12], providing core components around which they would build a system made of other vendors’ components.

Bibliography

All references accessed on October 16th, 2008

General references

ASSOCIATION OF MOVING IMAGE ARCHIVISTS. AMIA-L [online]. Hollywood: AMIA. http://lsv.uky.edu/archives/amia-l.html

EPFL. Montreux Jazz Festival Digital Archive Project: A unique and first of a kind high resolution digital archive of the Montreux Jazz Festival. October 2008

GOUYET, Jean-Noël. GERVAIS, Jean-François. Gestion des médias numériques: digital media asset management. Paris: Dunod, 2006. 328 p.

HATII, NINCH. The NINCH Guide to Good Practice in the Digital Representation and Management of Cultural Heritage Materials [online]. 2002. http://www.nyu.edu/its/humanities/ninchguide/index.html

Cited references

[1] BRADLEY, Kevin. LEI, Junran. BLACKALL, Chris. Towards an Open Source Repository and Preservation System: Recommendations on the implementation of an Open Source Digital Archival and Preservation System and on Related Software Development [online]. Paris: Unesco, 2007. 34 p. http://portal.unesco.org/ci/en/ev.php-URL_ID=24700&URL_DO=DO_TOPIC&URL_SECTION=201.html

[2] BYCAST. White Paper: Best Practices for Long-term Data Storage and Preservation. 2008

[3] CONSULTATIVE COMMITTEE FOR SPACE DATA SYSTEMS (CCSDS). Reference Model for an Open Archival Information System (OAIS) [online]. Washington, DC: CCSDS, 2002. 148 p. http://public.ccsds.org/publications/archive/650x0b1.pdf

[4] COUGHLIN, Thomas M. Archiving in the Entertainment and Professional Media Market [online]. Coughlin Associates, 2008. 17 p. http://www.tomcoughlin.com/Techpapers/Archiving%20in%20the%20Entertainment%20and%20Media%20Market%20%20Report,%20final,%20021808.pdf

[5] DIGICULT. Digital Asset Management Systems for the Cultural and Scientific Heritage Sector. In: Digicult [online]. Thematic Issue 2, December 2002. 43 p. www.digicult.info/downloads/thematic_issue_2_021204_low_resolution.pdf

[6] DICKSON, Glen. The NBA’s Living, Breathing Archive: Referee assessments among uses for NBA’s digital library. In: Broadcasting and Cable [online]. June 16th 2008. http://www.broadcastingcable.com/article/CA6570398.html?&display=Features&referral=SUPP&

[7] INSTITUTE OF ELECTRICAL AND ELECTRONICS ENGINEERS (IEEE). IEEE Guide to the POSIX Open System Environment (OSE) [online]. New York: IEEE, 1995. 206 p. http://ieeexplore.ieee.org/stamp/stamp.jsp?arnumber=00552903

[8] JOHNSTON, Craig. Fox News Goes Tapeless: Network’s News and Business Channels Install End-to-End Digital Workflow. In: TV Technology.com [online]. June 25th 2008. http://www.tvtechnology.com/pages/s.0082/t.14187.html

[9] LINDEN, Jim. MARTIN, Sean. MASTERS, Richard. PARKER, Roderick. Technology Watch Report: The large-scale archival of digital objects [online]. London: British Library, 2005 (DPC Technology Watch Series Report 04-03). 20 p. http://www.dpconline.org/docs/dpctw04-03.pdf

[10] OCLC/RLG WORKING GROUP ON PRESERVATION METADATA. Preservation Metadata and the OAIS Information Model: A Metadata Framework to Support the Preservation of Digital Objects [online]. Dublin, Ohio: OCLC, 2002. 54 p. http://www.oclc.org/research/projects/pmwg/pm_framework.pdf

[11] PREMIS EDITORIAL COMMITTEE. PREMIS Data Dictionary for Preservation Metadata version 2.0 [online]. Washington, DC: Library of Congress, 2008. 224 p. http://www.loc.gov/standards/premis/v2/premis-2-0.pdf

[12] PRESTOSPACE. Preservation towards storage and access: Standardised Practices for Audiovisual Contents in Europe. In: Prestospace [online]. Last updated 19.12.2007. http://www.prestospace.org/index.en.html

[13] ROSENTHAL, David S. H. ROBERTSON, Thomas. LIPKIS, Tom. REICH, Vicky. MORABITO, Seth. Requirements for Digital Preservation Systems: A Bottom-Up Approach. In: D-Lib Magazine [online]. Volume 11 Number 11, November 2005. http://www.dlib.org/dlib/november05/rosenthal/11rosenthal.html

[14] RLG. Trusted Digital Repositories: Attributes and Responsibilities: An RLG-OCLC Report [online]. Mountain View: RLG, 2002. 70 p. http://www.oclc.org/programs/ourwork/past/trustedrep/repositories.pdf

[15] SCIENCE AND TECHNOLOGY COUNCIL OF THE ACADEMY OF MOTION PICTURE ARTS AND SCIENCES. The Digital Dilemma: Strategic Issues in Archiving and Accessing Digital Motion Picture Materials. 2008